Benchmarking

8 articles

Import AI 453: Breaking AI agents; MirrorCode; and ten views on gradual disempowerment

Welcome to Import AI, a newsletter about AI research. Import AI runs on arXiv and feedback from readers. If you’d like to support this, please subscribe. A shorter issue than…

Import AI 449: LLMs training other LLMs; 72B distributed training run; computer vision is harder than generative text

Welcome to Import AI, a newsletter about AI research. Import AI runs on arXiv and feedback from readers. If you’d like to support this, please subscribe. Can LLMs…

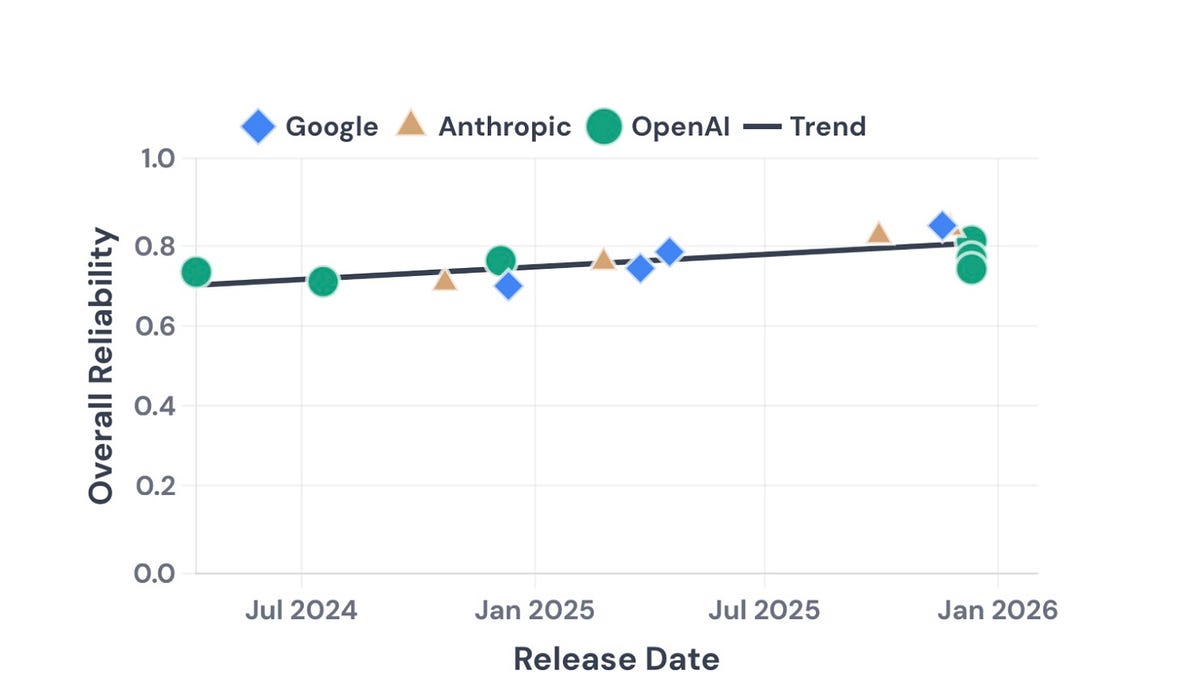

New Paper: Towards a science of AI agent reliability

Quantifying the capability-reliability gap

Import AI 446: Nuclear LLMs; China’s big AI benchmark; measurement and AI policy

Welcome to Import AI, a newsletter about AI research. Import AI runs on arXiv and feedback from readers. If you’d like to support this, please subscribe. Want to…

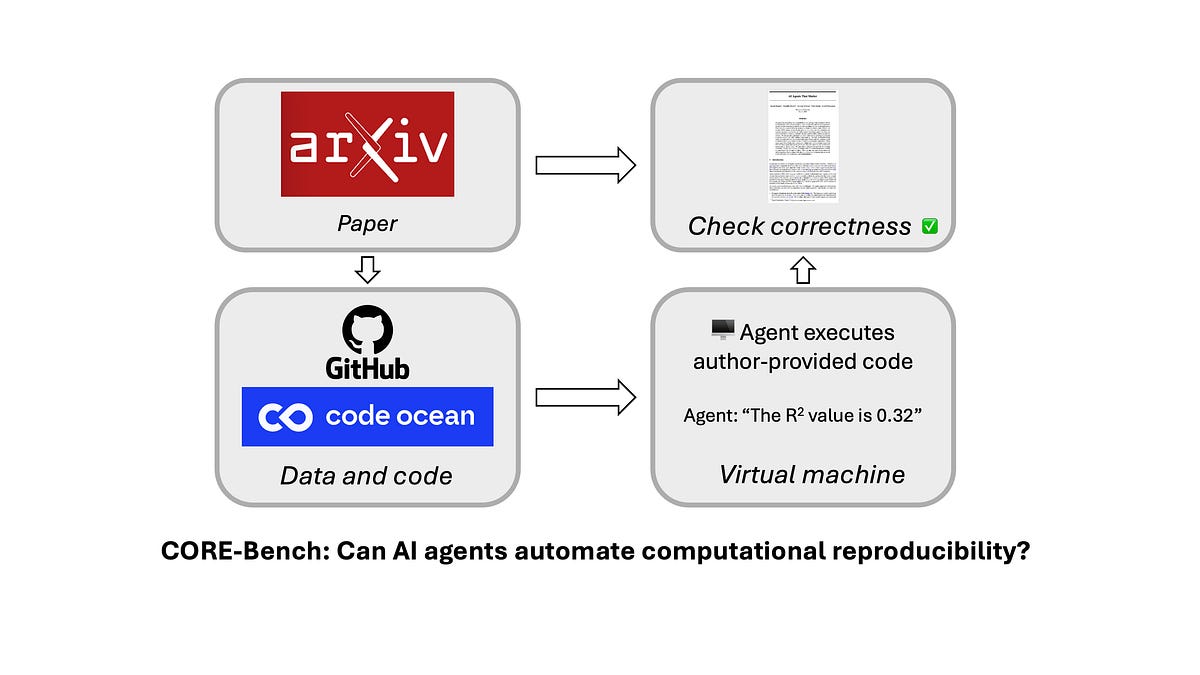

Can AI automate computational reproducibility?

A new benchmark to measure the impact of AI on improving science

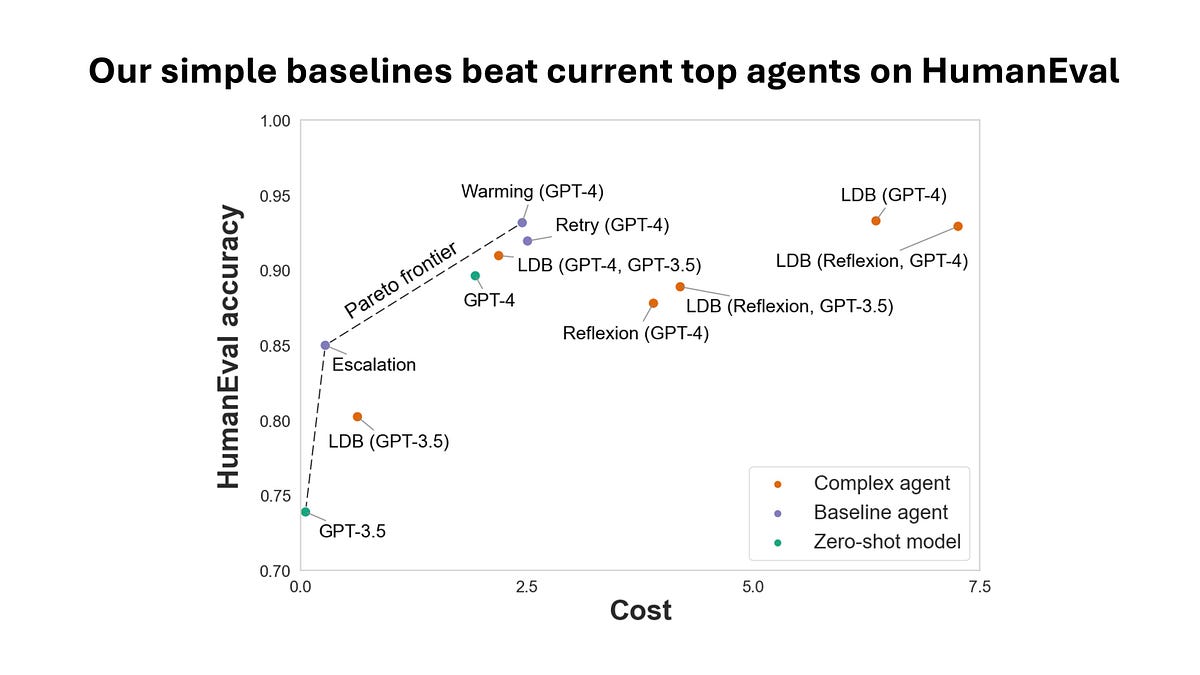

New paper: AI agents that matter

Rethinking AI agent benchmarking and evaluation

AI leaderboards are no longer useful. It's time to switch to Pareto curves.

What spending $2,000 can tell us about evaluating AI agents