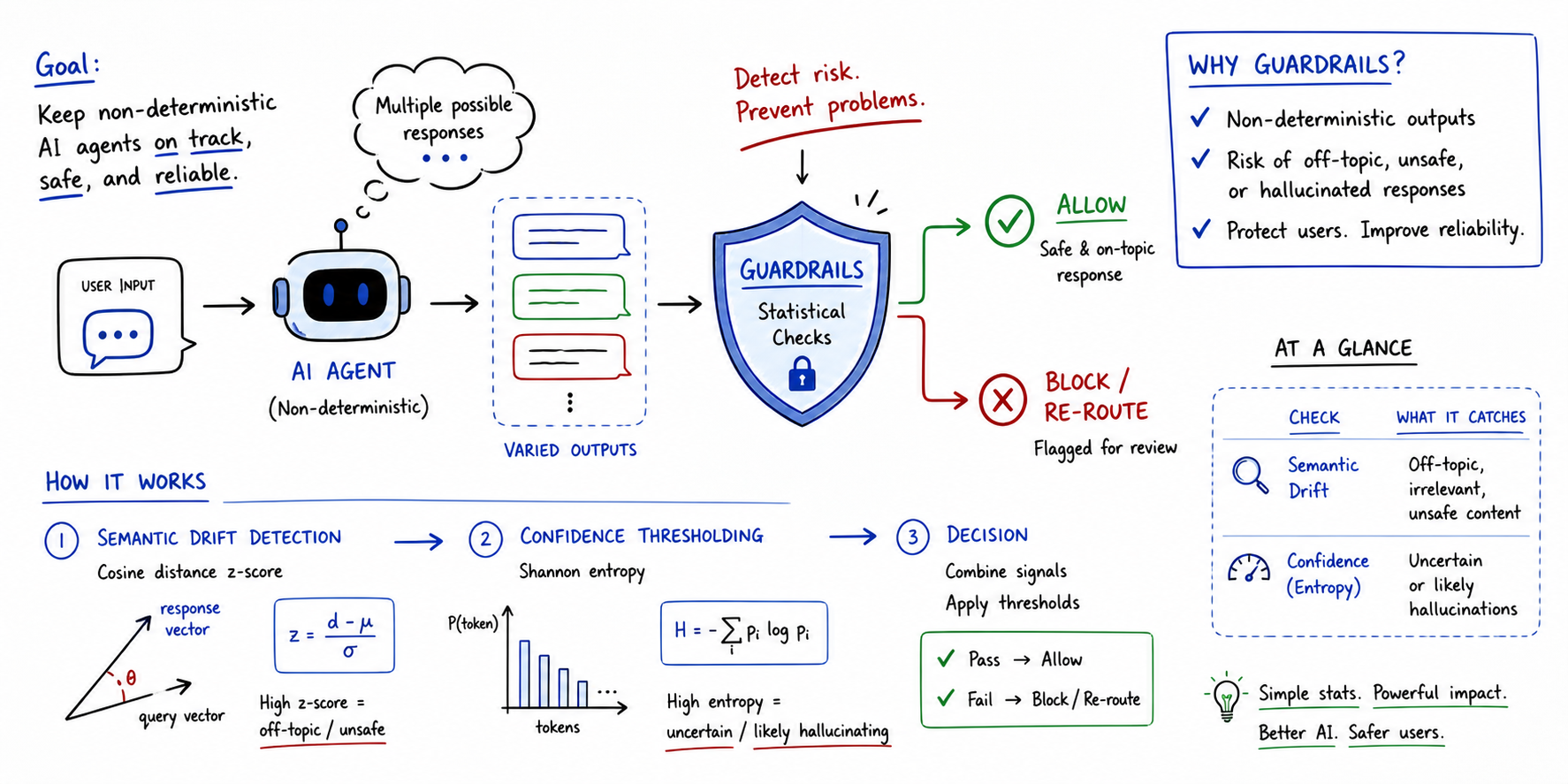

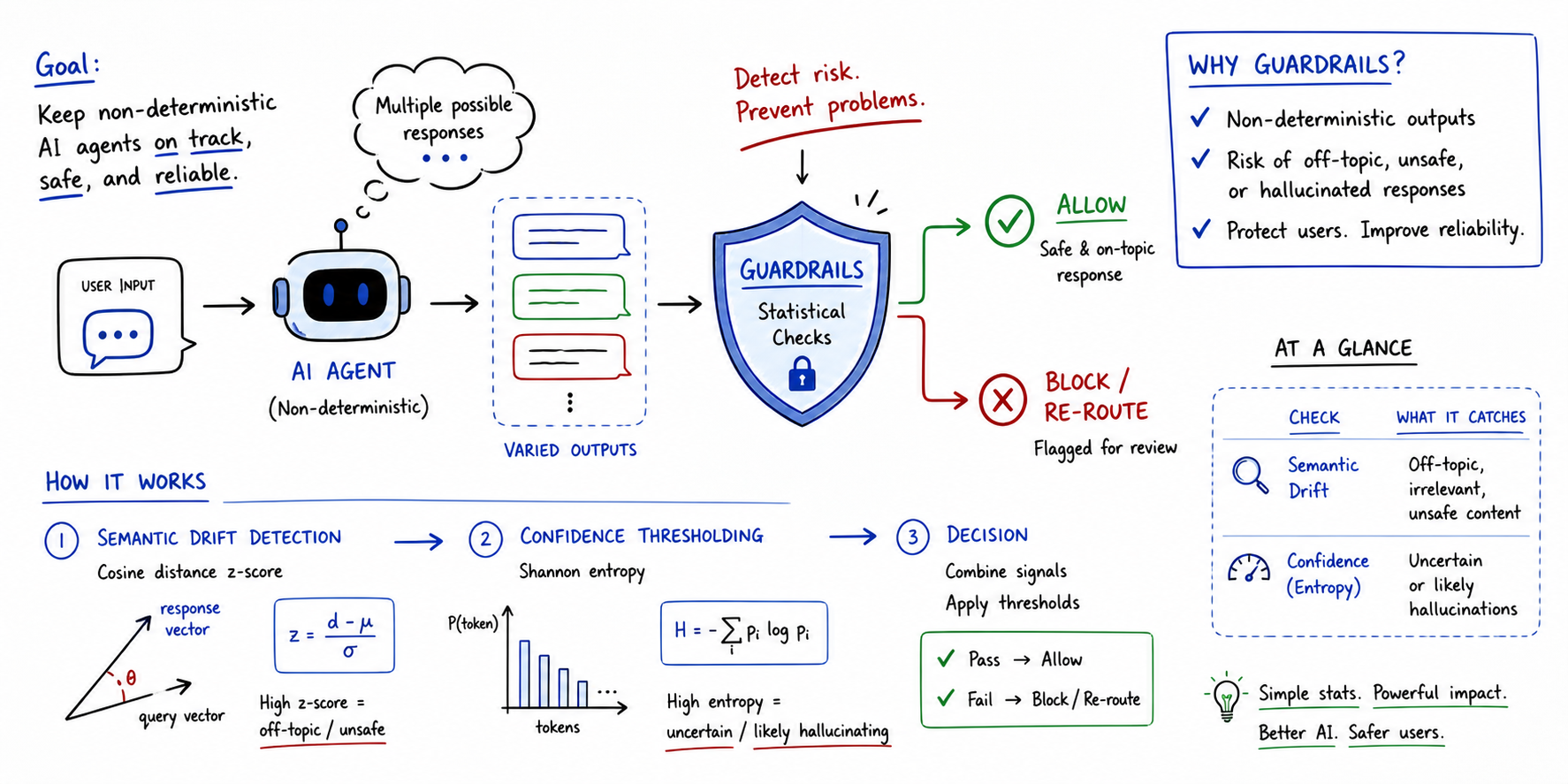

Implementing Statistical Guardrails for Non-Deterministic Agents

Non-deterministic agents are those where the same input can lead to distinct outputs across multiple runs.

All articles from Machine Learning Mastery aggregated on DeepTrendLab — AI news, research, and announcements in one place.

TurboQuant has recently been launched by Google as a novel algorithmic suite and library for applying advanced quantization and compression to large language models (LLMs) and vector search engines…

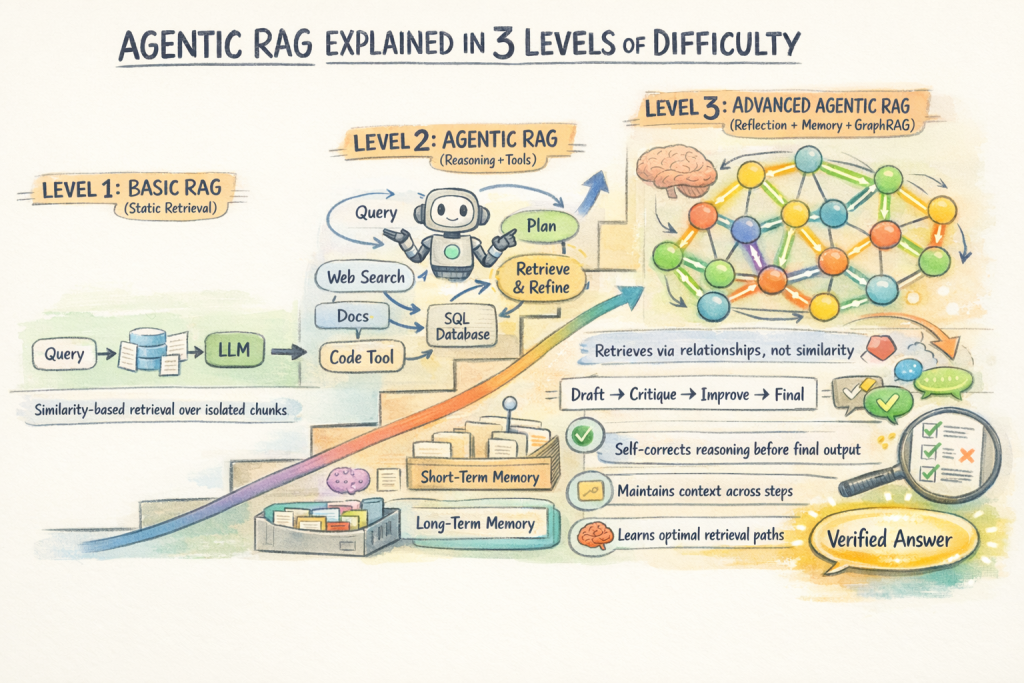

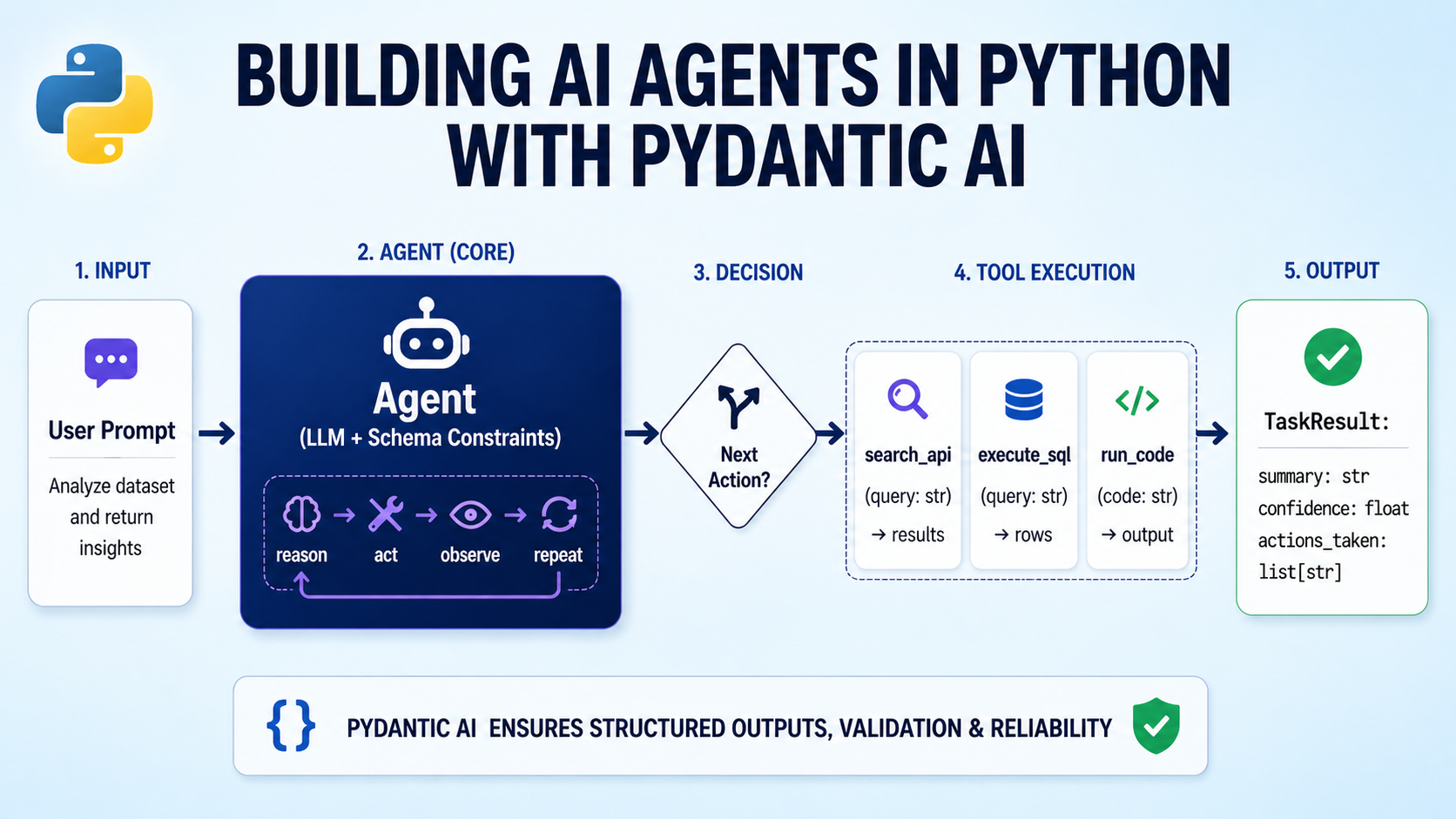

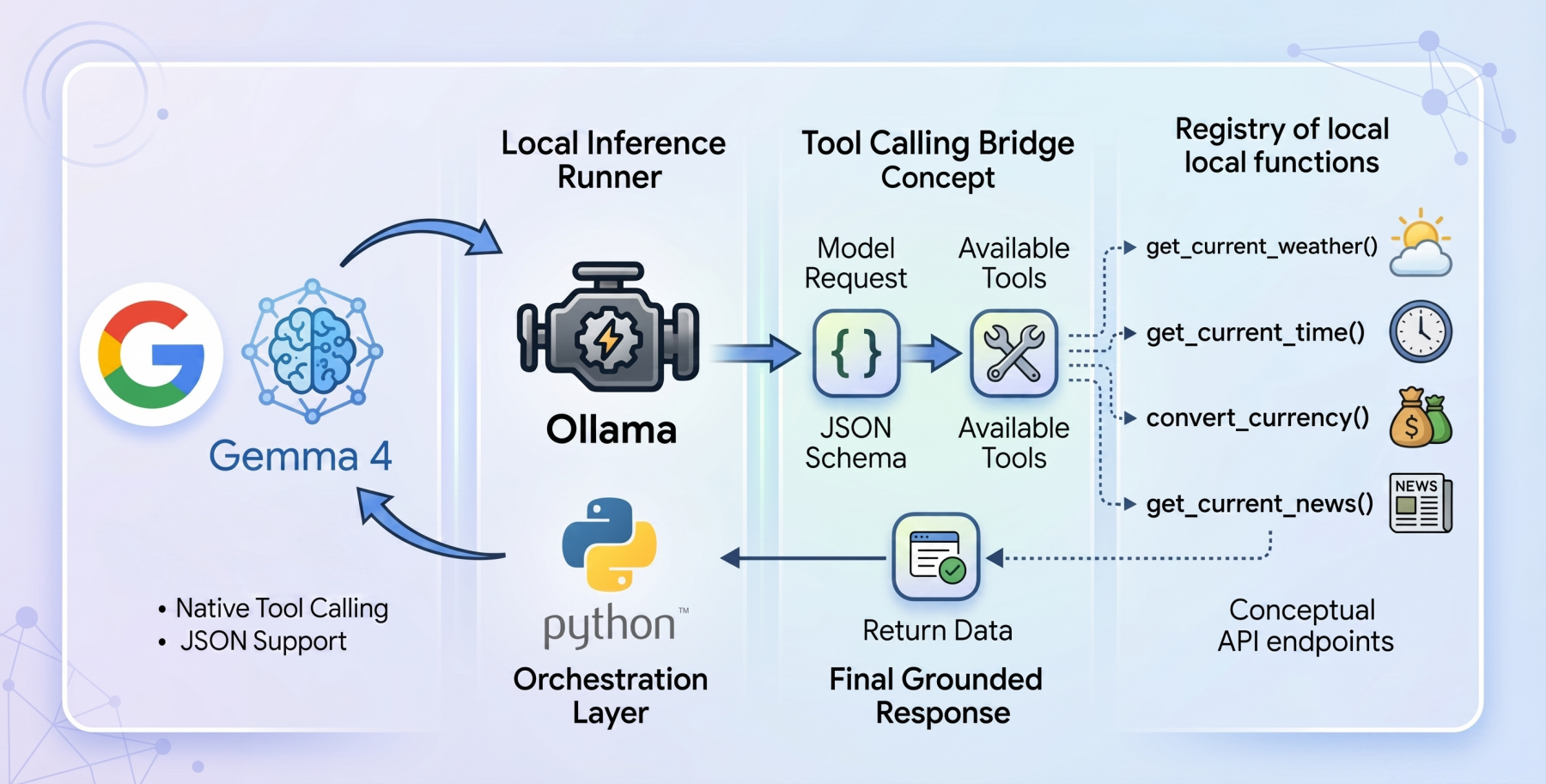

The idea of building your own AI agent used to feel like something only big tech companies could pull off.

FastAPI has become one of the most popular ways to serve machine learning models because it is lightweight, fast, and easy to use.

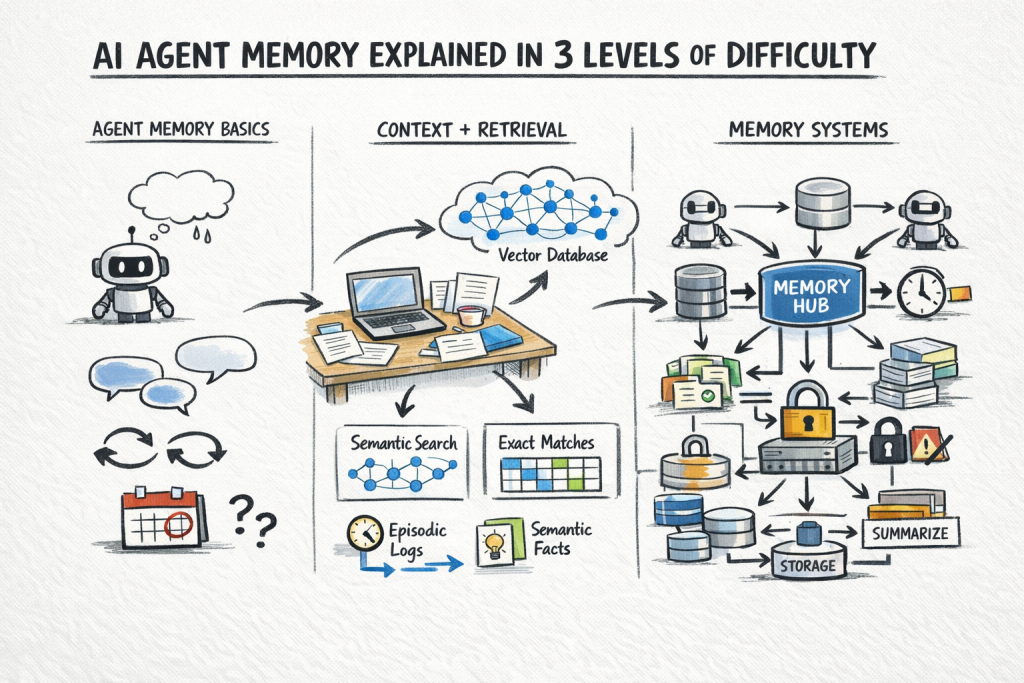

A stateless AI agent has no memory of previous calls.

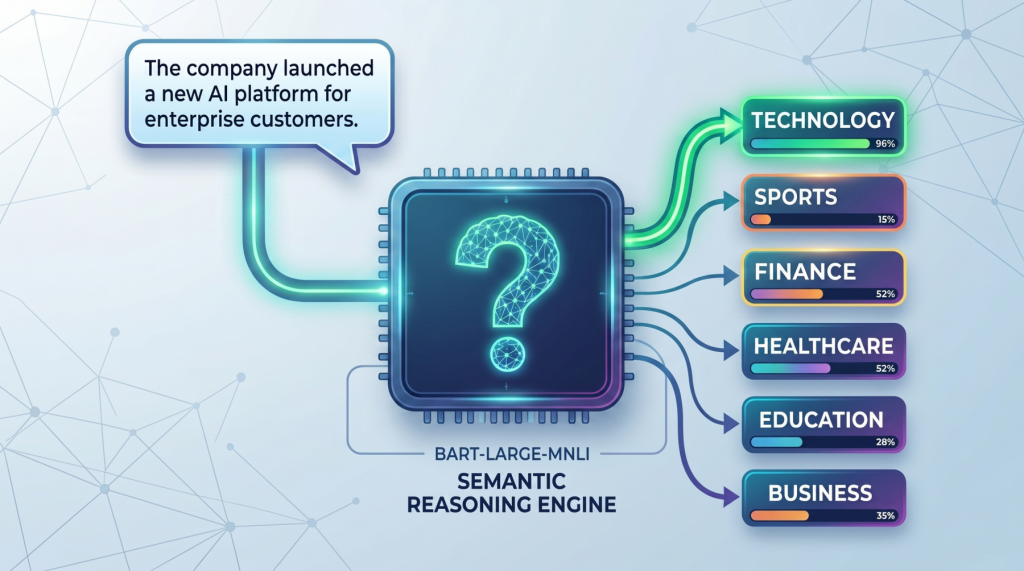

Zero-shot text classification is a way to label text without first training a classifier on your own task-specific dataset.

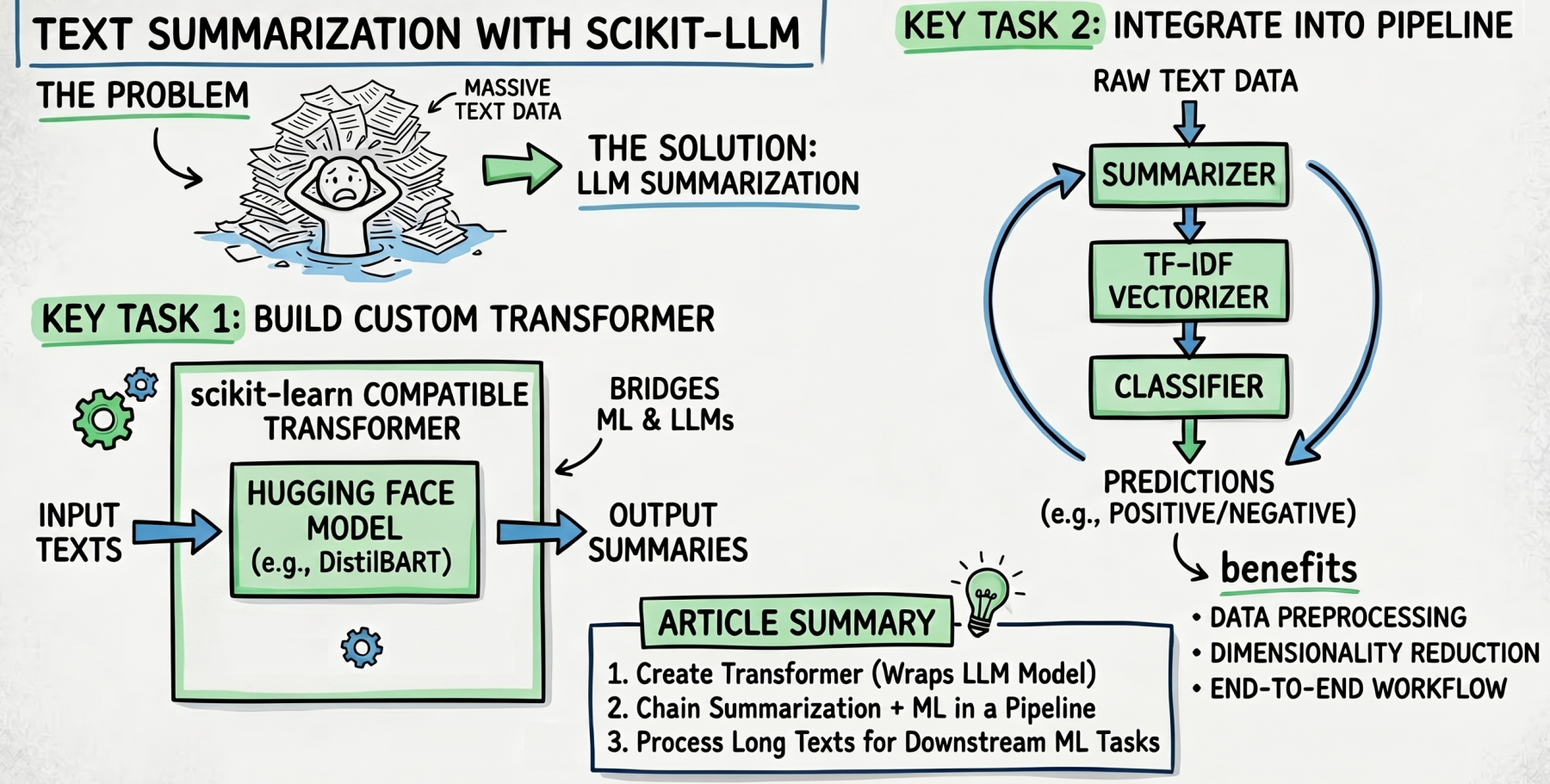

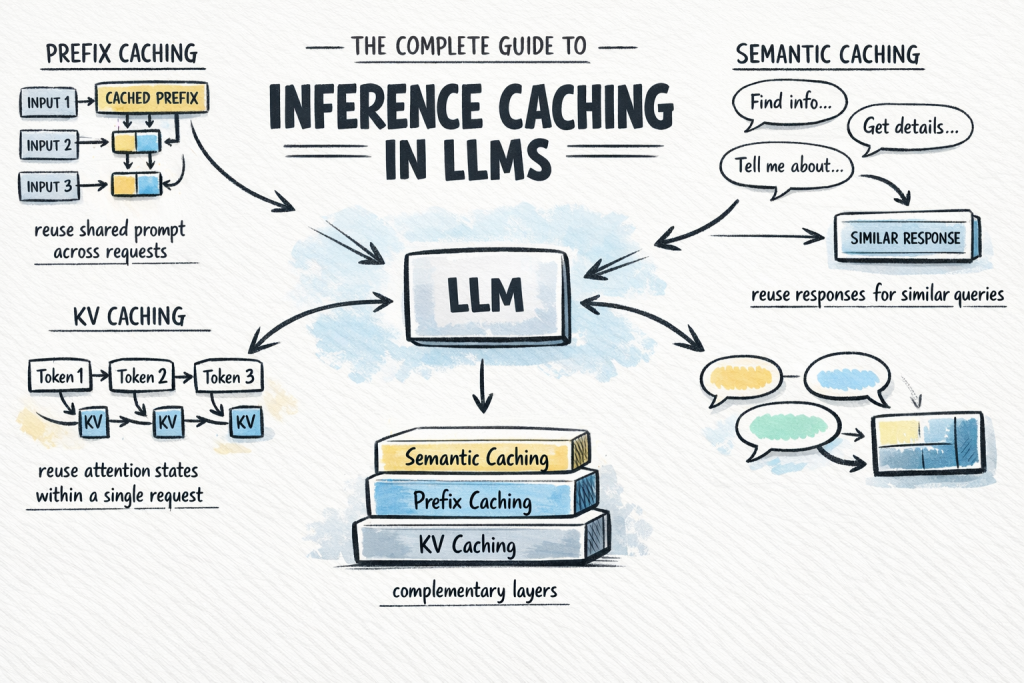

Calling a large language model API at scale is expensive and slow.

You've probably written a decorator or two in your Python career.

The open-weights model ecosystem shifted recently with the release of the…

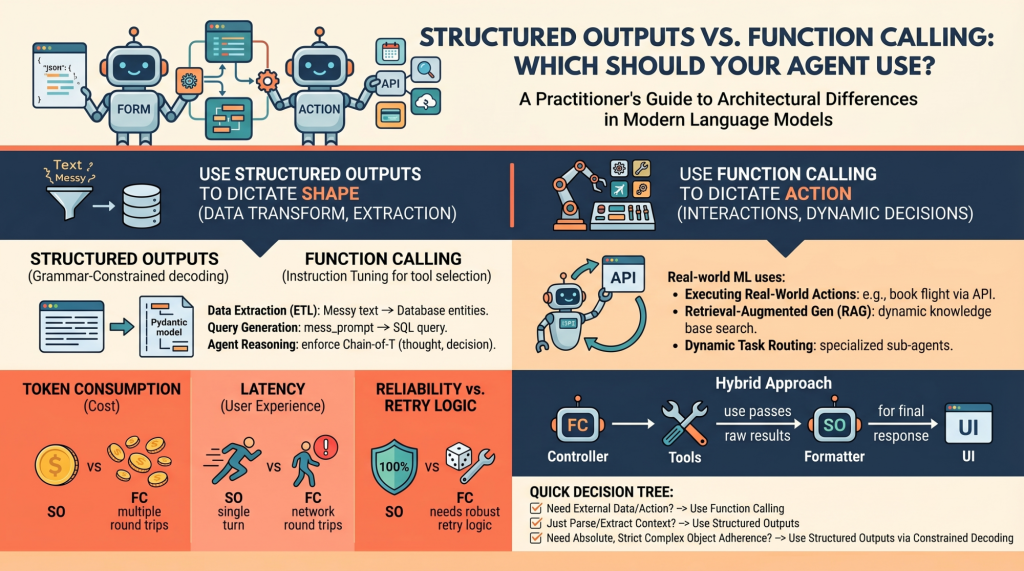

Language models (LMs), at their core, are text-in and text-out systems.