Explore the latest AI news and research tagged #language-models — curated from top sources including OpenAI, Anthropic, Google DeepMind, and more.

#language-models

10 articles

🍎 AI Labs

Apple ML Research

2 min read

Mixture-of-Experts (MoE) models enable sparse expert activation, meaning that only a subset of the model’s parameters is used during each inference. However, to translate this sparsity into practical performance, an expert caching mechanism is required. Previous works have proposed hardware-centric caching policies, but how these various caching policies interact with each other and with different hardware specifications remains poorly understood. To…

🚀 News

TechCrunch AI

2 min read

The company said the model reduces hallucination in sensitive areas such as law, medicine, and finance, while maintaining the low latency of its predecessor.

💹 News

AI Business

4 min read

Mistral's approach prioritizes natural language interactions, making coding more accessible while allowing for integration with existing code repositories.

💎 Tools

KDNuggets

4 min read

These are seven unconventional uses of LLMs that go far beyond the usual chat interface and conversations.

🤗 AI Labs

Hugging Face Blog

8 min read

💎 Tools

KDNuggets

8 min read

Merge LLMs easily with Unsloth Studio's no-code GUI and combine models without retraining.

🧐 Safety

LessWrong

1 min read

Many people—especially AI company employees [1] —believe current AI systems are well-aligned in the sense of genuinely trying to do what they're supposed to do (e.g., following their spec or…

🤗 AI Labs

Hugging Face Blog

12 min read

🎯 Newsletters

Machine Learning Mastery

7 min read

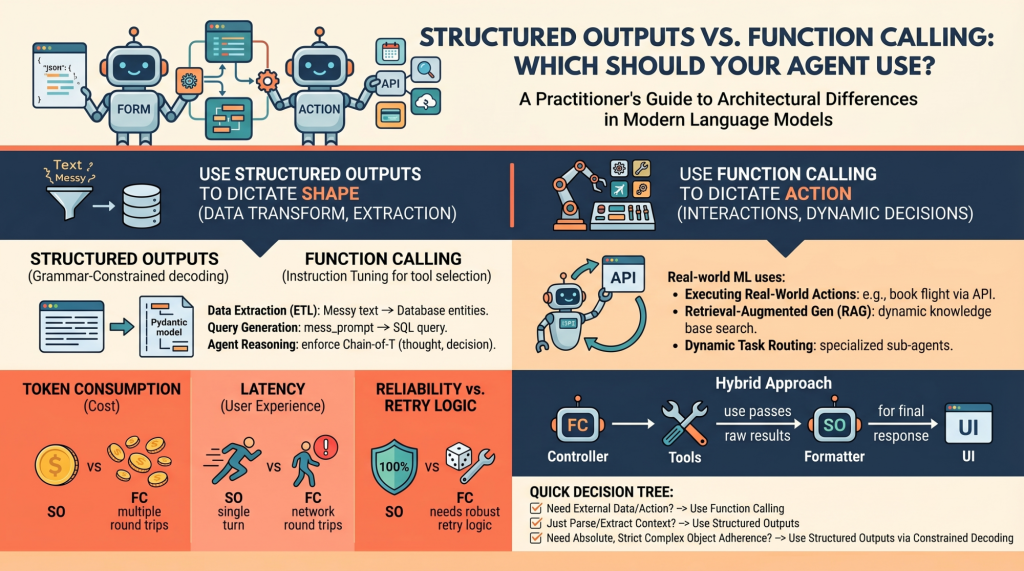

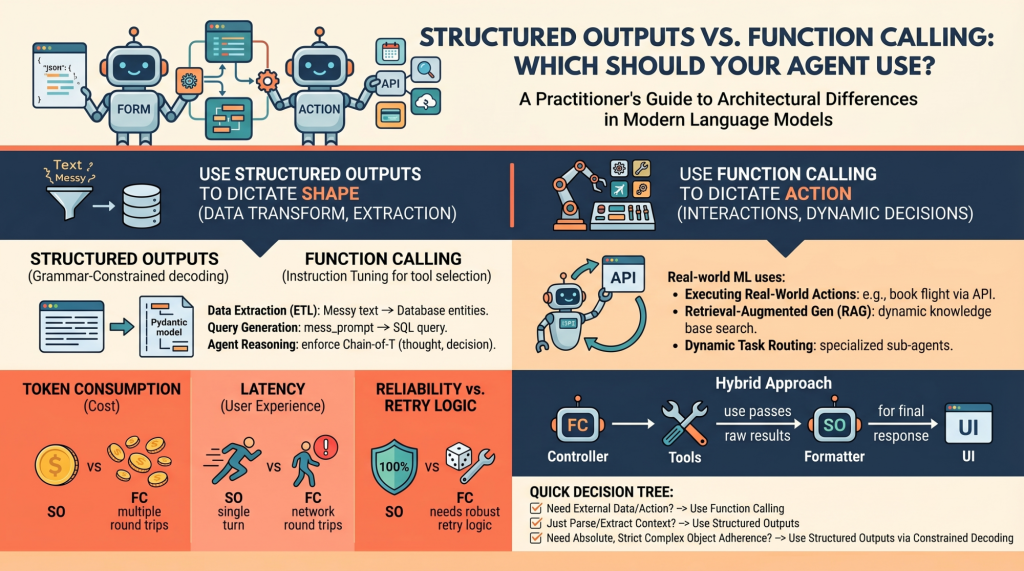

Language models (LMs), at their core, are text-in and text-out systems.

🤗 AI Labs

Hugging Face Blog

32 min read