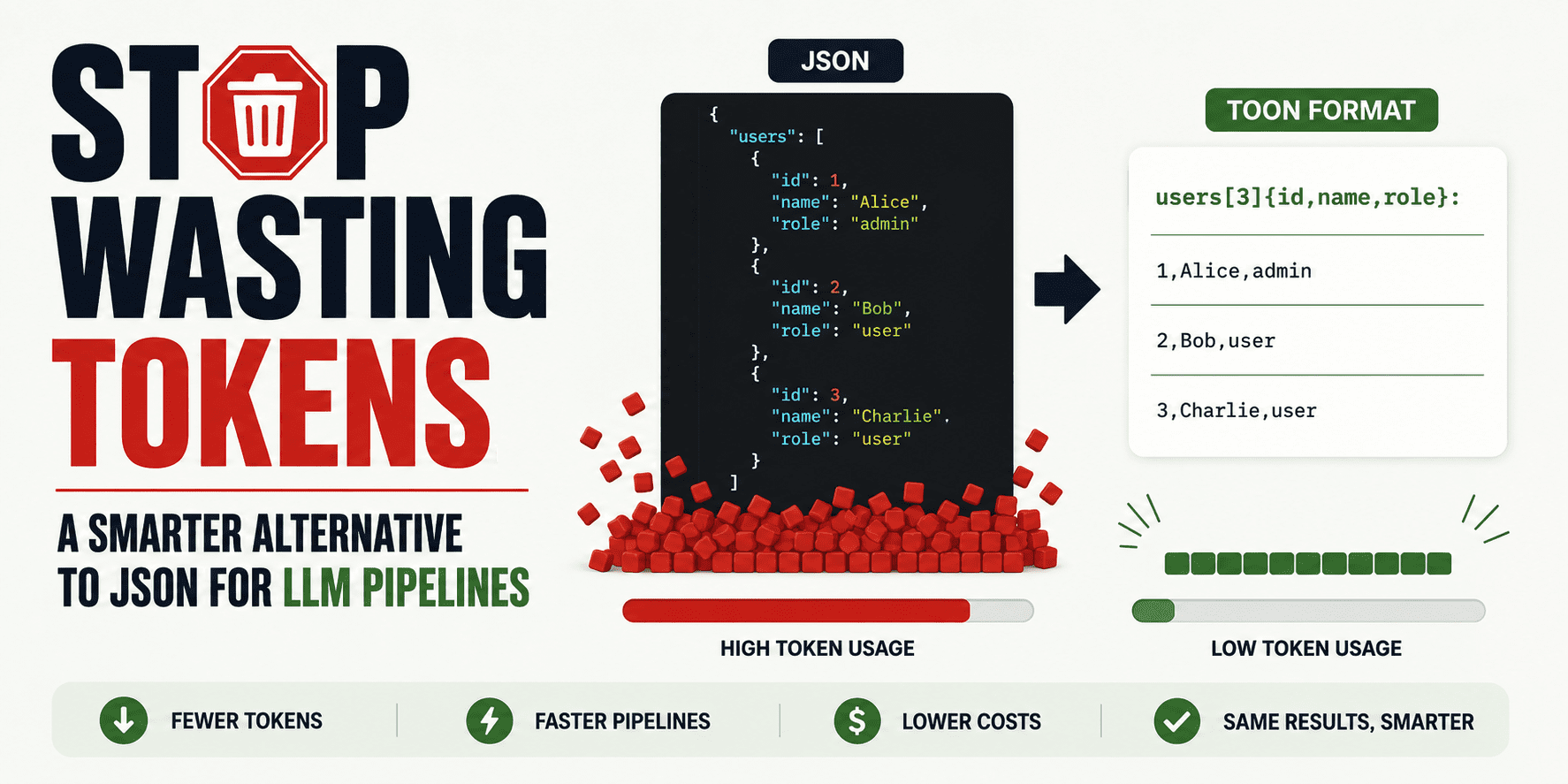

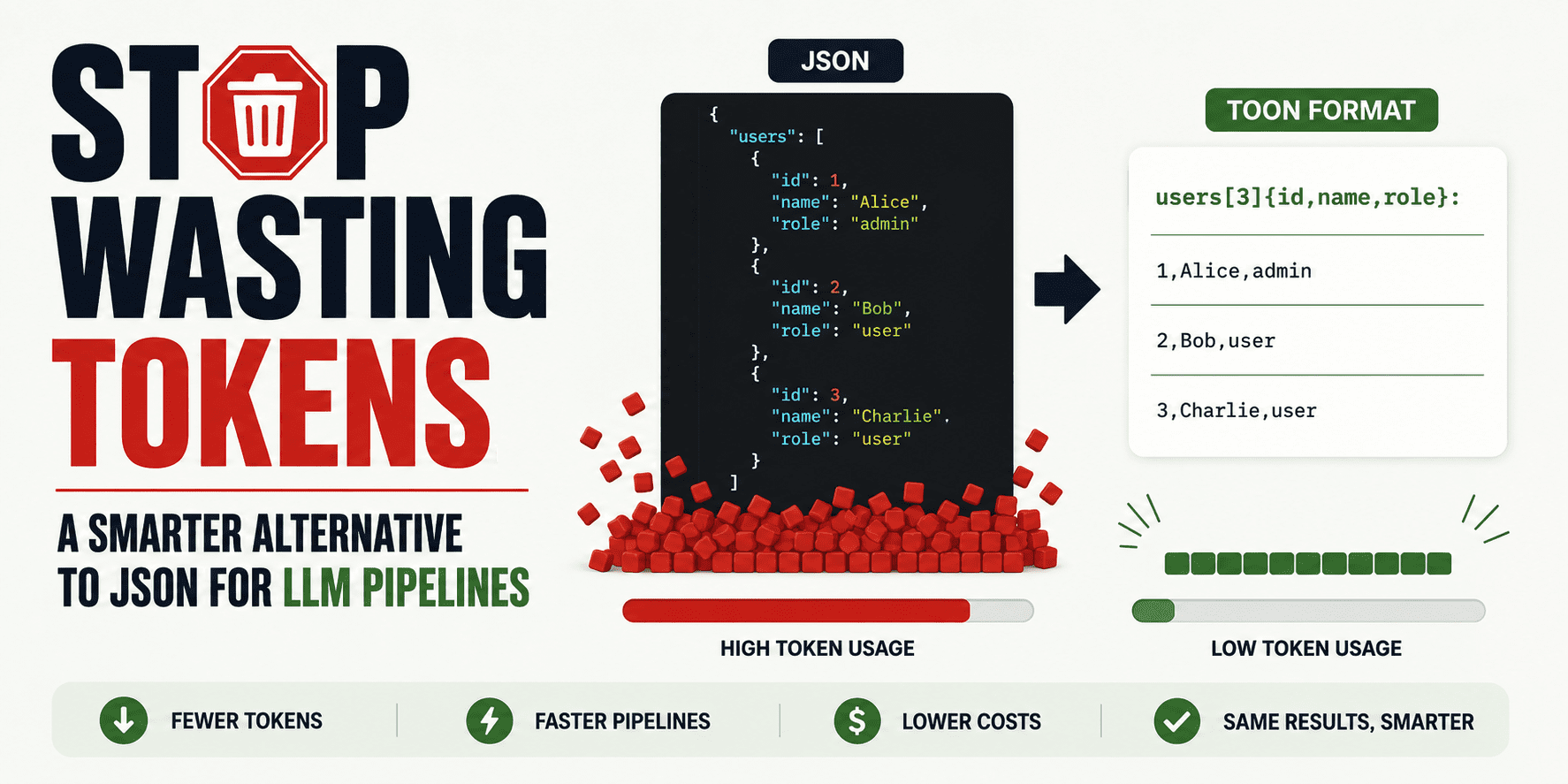

A new serialization format called TOON has emerged to address a specific but increasingly costly problem in modern LLM workflows: the overhead of JSON's structural repetition when sending bulk data to models. Unlike JSON, which repeats field names in every object within an array, TOON declares a schema once and streams values in a compact tabular format—reducing token usage substantially on large datasets. The format is lossless, meaning data can be converted back and forth without information loss, and it's designed explicitly as a staging layer between backend systems and LLM prompts rather than a wholesale replacement for JSON across applications.

Token efficiency has become a hidden but real cost lever in AI operations. As teams scale from experimental demos to production systems, the economics of LLM APIs expose themselves quickly: reducing input tokens by 20-30% on every inference across millions of requests creates measurable savings. The timing of TOON's introduction reflects the maturing complexity of LLM supply chains. Early generative AI work relied on hand-crafted prompts and small datasets. Today's production systems pump structured retrieval results, tool outputs, and context records into models at scale, and that data often lives in JSON through the entire pipeline. Every conversion, API call, and token counter represents friction that compounds. TOON targets that friction point directly by treating the LLM as a first-class consumer with its own serialization needs, rather than forcing models to parse formats optimized for human readability or API portability.

The significance of TOON lies not in technical novelty but in legitimizing prompt engineering as an optimization surface that extends beyond prompt text into data representation. This shifts how enterprises approach cost and latency in production AI systems. Rather than treating token budgets as fixed constraints, teams can now treat data formatting as a lever alongside model selection, context window size, and prompt design. For systems handling high-volume structured queries—retrieval-augmented generation over large catalogs, agent systems maintaining state snapshots, batch processing of tool outputs—the compaction gains are real. A support ticket dataset or product catalog sent in TOON instead of JSON could cut input tokens by 25-40% depending on field density and cardinality, which compounds across millions of inferences. This makes TOON particularly relevant in an era where enterprises are trying to justify LLM spend and optimize margins on AI-powered products.

The immediate beneficiaries are developers building information-dense LLM systems—those working with retrieval augmentation, agentic workflows, batch processing, or multi-turn systems that maintain structured state. Product teams shipping search with LLM reranking, knowledge bases queried at scale, or systems that synthesize outputs from multiple tools will find concrete value. However, the impact is heavily skewed by use case. Unstructured prompts, deeply nested heterogeneous data, or tiny payloads gain little or nothing from TOON adoption. The format's sweet spot is uniform arrays of records with consistent schemas, which narrows its addressable problem. This also means adoption faces a coordination challenge: individual teams benefit only if they control the formatting layer between their data pipeline and LLM calls, but widespread tooling and library support is still nascent. Early adopters will see gains; broader adoption depends on integration into popular LLM frameworks and SDKs.

Competitively, TOON occupies a different niche than structured output formats, retrieval optimization, or model quantization—the other visible levers teams pull to control token usage. It's not competing with function calling or structured output; it's complementary, addressing the input side of the inference equation. Rivals aren't other formats but rather the status quo of JSON everywhere. This actually strengthens TOON's positioning because the barrier to adoption is low—teams can convert on-the-fly without rearchitecting backends. However, the format's value is capped by the maturity of the broader ecosystem. If TOON remains a specialized tool requiring manual integration, it will be relegated to teams with optimization expertise and resources. If it becomes a first-class citizen in Langchain, Llamaindex, and the frameworks that abstract prompt construction, adoption will accelerate rapidly.

The open questions are straightforward: Will TOON gain sufficient library support to move beyond early adopters? Can the format scale to the heterogeneity of real-world data without losing its compression benefits? And critically, how much actual savings do teams realize in practice after factoring in conversion costs? The format itself is sound, but adoption curves for middleware serialization formats are historically slow unless they're bundled into platforms users already rely on. Watch whether the TOON ecosystem produces canonical integrations with major LLM frameworks within the next six months. The broader implication is that token efficiency will increasingly shift from a model-side concern (better architectures, pruning, quantization) to a data-side optimization (representation, retrieval strategy, formatting). That's a meaningful shift in how enterprises will approach LLM cost management.

This article was originally published on KDNuggets. Read the full piece at the source.

Read full article on KDNuggets →DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to KDNuggets. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.