What’s the Best Way to Brainwash an LLM?

I spent a weekend trying to convince a language model it was C-3PO. Here's what actually worked. The post What’s the Best Way to Brainwash an LLM? appeared…

Latest news and research on large language models — GPT-4, Claude, Gemini, Llama, and more. Benchmarks, architectures, fine-tuning, and real-world deployment of LLMs.

Large language models (LLMs) are neural networks trained on massive text corpora to understand and generate human language. Built on the transformer architecture introduced by Google researchers in 2017, modern frontier LLMs such as GPT-4, Claude 3, and Gemini Ultra are trained on trillions of tokens of text, code, and structured data and are widely estimated to have hundreds of billions of parameters, although their developers have not disclosed exact counts.

The LLM landscape has evolved rapidly since ChatGPT's release in November 2022 brought the technology into the mainstream. The field is now defined by a handful of key dimensions: context window length (from 4K to over 1M tokens), reasoning capability (chain-of-thought, tool use, multi-step planning), multimodality (text, images, audio, video), and the trade-off between capability and inference cost.

DeepTrendLab tracks every major LLM release, benchmark result, and architectural innovation across the leading labs — OpenAI, Anthropic, Google DeepMind, Meta AI, Mistral, Cohere, and the open-source community. Our analysis covers not just what shipped, but what the capability gains mean for developers, enterprises, and the broader trajectory of AI.


In this post, we show you how to set up FLOPs tracking during LLM fine-tuning using the open source Fine-Tuning FLOPs Meter toolkit on Amazon SageMaker AI. You…
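For a rough sense of scale, a widely used rule of thumb puts the compute for full fine-tuning of a dense model at about 6 FLOPs per parameter per token (roughly 2 for the forward pass and 4 for the backward pass). The sketch below uses only that approximation; it is not the Fine-Tuning FLOPs Meter's method, and the model size, token count, accelerator throughput, and utilization are all hypothetical. Parameter-efficient methods such as LoRA skip most weight-gradient computation, so they land below this estimate.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate full fine-tuning compute: ~2 FLOPs/param/token forward + ~4 backward."""
    return 6.0 * n_params * n_tokens

def gpu_hours(flops: float, peak_flops_per_s: float, utilization: float) -> float:
    """Wall-clock hours on one accelerator sustaining `utilization` of its peak rate."""
    return flops / (peak_flops_per_s * utilization) / 3600

# Hypothetical run: a 7B-parameter model fine-tuned on 100M tokens.
flops = training_flops(7e9, 1e8)
print(f"{flops:.2e} FLOPs")  # 4.20e+18 FLOPs

# Hypothetical accelerator: 300 TFLOP/s peak at 40% utilization.
print(f"{gpu_hours(flops, 300e12, 0.4):.1f} GPU-hours")  # 9.7 GPU-hours
```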

The Local-First AI Inference pattern routes 70–80% of documents to deterministic local extraction at zero API cost, reserving Azure OpenAI calls for edge cases and flagging low-confidence results…
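The routing idea can be sketched as a confidence gate: try a cheap deterministic extractor first, accept its result above a threshold, otherwise escalate to a remote model, and flag anything that is still uncertain. Everything below is hypothetical: `local_extract`, the regex, the fixed confidence values, and the 0.9/0.6 thresholds are illustrative stand-ins, and `fake_remote` replaces a real Azure OpenAI call, so no actual API is used.

```python
import re
from dataclasses import dataclass
from typing import Callable

@dataclass
class Extraction:
    data: dict
    confidence: float
    source: str
    needs_review: bool = False

def local_extract(text: str) -> Extraction:
    """Hypothetical deterministic extractor: pulls an invoice number with a regex."""
    match = re.search(r"Invoice\s*#\s*(\d+)", text)
    if match:
        # Illustrative fixed confidence for a clean pattern match.
        return Extraction({"invoice_number": match.group(1)}, 0.95, "local")
    return Extraction({}, 0.0, "local")

def route(text: str,
          remote_extract: Callable[[str], Extraction],
          accept_threshold: float = 0.9,
          review_threshold: float = 0.6) -> Extraction:
    """Use the local result when confident; otherwise escalate and flag weak answers."""
    result = local_extract(text)
    if result.confidence >= accept_threshold:
        return result  # zero-API-cost path
    result = remote_extract(text)  # paid call, reserved for edge cases
    result.needs_review = result.confidence < review_threshold
    return result

def fake_remote(text: str) -> Extraction:
    """Stand-in for a remote LLM extractor (hypothetical; returns a low-confidence guess)."""
    return Extraction({"invoice_number": None}, 0.5, "remote")

print(route("Invoice # 10042 due May 1", fake_remote).source)  # local
print(route("handwritten receipt", fake_remote).needs_review)   # True
```

The design choice is that the expensive path is only reached on a local miss, so API spend scales with the share of edge cases rather than with total document volume.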

From tokenisation to evaluation: how modern language models actually work in practice. The post The Must-Know Topics for an LLM Engineer appeared first on Towards Data Science…

Overview of adaptive parallel reasoning. What if a reasoning model could decide for itself when to decompose and parallelize independent subtasks, how many concurrent threads to spawn, and…

Current critic-less RLHF methods aggregate multi-objective rewards via an arithmetic mean, leaving them vulnerable to constraint neglect: high-magnitude success in one objective can numerically offset critical failures in…
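Constraint neglect is easy to see with numbers. In the sketch below, the rewards, objective names, and the two alternative aggregators (geometric mean and minimum) are illustrative assumptions, not the method the paper proposes. The arithmetic mean ranks a response that badly fails safety above a balanced one; the alternatives do not.

```python
from statistics import fmean, geometric_mean

# Hypothetical per-objective rewards in [0, 1]. Response A excels on two
# objectives but badly fails the safety constraint; B is uniformly moderate.
rewards = {
    "A": {"helpfulness": 1.0, "format": 1.0, "safety": 0.1},
    "B": {"helpfulness": 0.6, "format": 0.6, "safety": 0.6},
}

def arithmetic(scores: dict) -> float:
    """Plain mean: a high score on one objective can offset a failure on another."""
    return fmean(scores.values())

def geometric(scores: dict) -> float:
    """Geometric mean: a near-zero objective drags the whole score down."""
    return geometric_mean(list(scores.values()))

def worst_case(scores: dict) -> float:
    """Min aggregation: a response is only as good as its weakest objective."""
    return min(scores.values())

for name, scores in rewards.items():
    print(name, round(arithmetic(scores), 3), round(geometric(scores), 3), worst_case(scores))
# A 0.7 0.464 0.1
# B 0.6 0.6 0.6
```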

Fine-tuning LLMs has become much easier because of open-source tools. You no longer need to build the full training stack from scratch. Whether you want low-VRAM training, LoRA,…

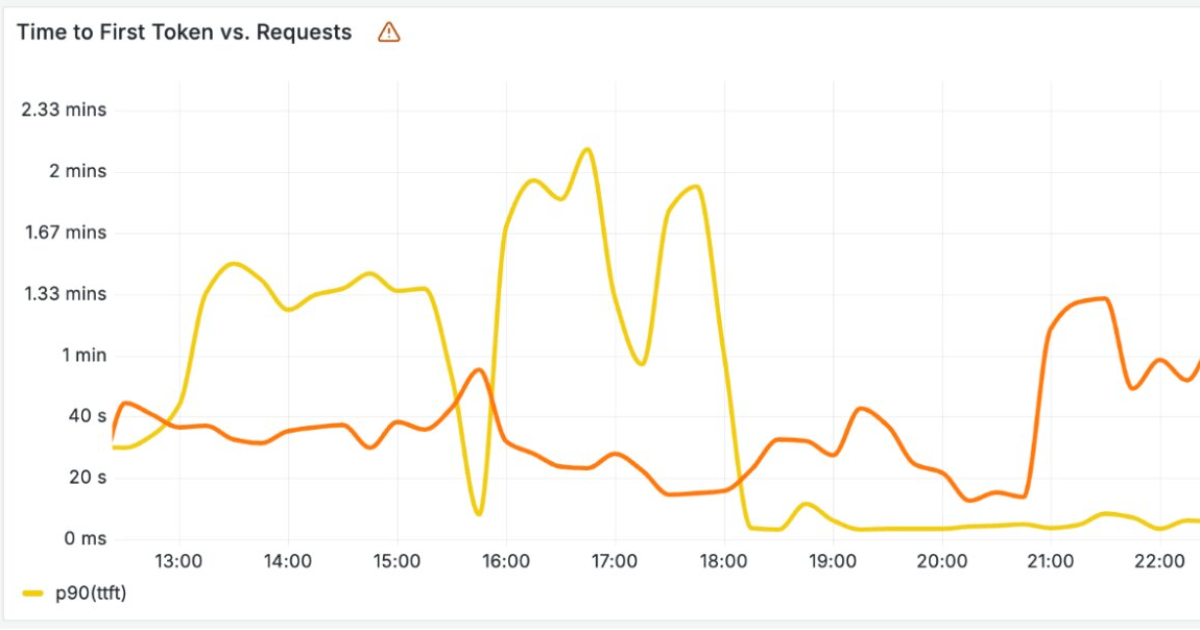

Serving transformer language models with high throughput requires caching Key-Values (KVs) to avoid redundant computation during autoregressive generation. The memory footprint of KV caching is significant and heavily…
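The KV cache footprint grows linearly with sequence length and batch size. A common estimate is sketched below: the factor 2 covers storing both keys and values, and the model shape (32 layers, head dimension 128, fp16 cache, 4,096-token context) is a hypothetical configuration roughly the size of a 7B-parameter decoder. The second call shows how grouped-query attention, which shares each key/value head across several query heads, shrinks the cache.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int, seq_len: int,
                   batch_size: int = 1, bytes_per_elem: int = 2) -> int:
    """Keys and values (factor 2) stored per layer, per KV head, per token, per sequence."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem

GIB = 1024 ** 3

# Hypothetical 32-layer model, head_dim 128, fp16 (2 bytes), 4,096-token context.
print(kv_cache_bytes(32, 32, 128, 4096) / GIB)  # 2.0  (32 KV heads, standard multi-head attention)
print(kv_cache_bytes(32, 8, 128, 4096) / GIB)   # 0.5  (8 KV heads, grouped-query attention)
```

Multiplying by batch size shows why serving many long-context requests at once is memory-bound: 16 concurrent sequences of this shape would need 32 GiB for the cache alone.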

Building a knowledge base for AI models isn’t a one-time task but an iterative process of refinement. The post How to Build an Efficient Knowledge Base for AI…

Amazon SageMaker AI now offers an agentic experience that changes this. Developers describe their use case using natural language, and the AI coding agent streamlines the entire journey,…

Last Updated on May 4, 2026 by Editorial Team. Author(s): Ala Falaki, PhD. Originally published on Towards AI. Month in 4 Papers (April 2026): This series of posts…

Why reasoning models dramatically increase token usage, latency, and infrastructure costs in production systems. The post Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill appeared…
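The cost effect is plain arithmetic: reasoning tokens are commonly billed as output tokens, so a long chain of thought multiplies per-request cost even when the visible answer stays short. The prices and token counts below are hypothetical, chosen only to show the shape of the calculation.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost in USD given per-million-token prices for input and output."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000

# Hypothetical prices (USD per million tokens) and token counts.
PRICE_IN, PRICE_OUT = 2.0, 8.0
direct = request_cost(500, 200, PRICE_IN, PRICE_OUT)            # answer only
reasoning = request_cost(500, 200 + 4000, PRICE_IN, PRICE_OUT)  # answer + 4,000 reasoning tokens
print(f"direct ${direct:.4f}, reasoning ${reasoning:.4f}, {reasoning / direct:.1f}x")
# direct $0.0026, reasoning $0.0346, 13.3x
```

Latency scales the same way, since the extra output tokens are generated sequentially before the answer appears.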

Cloudflare has recently announced new infrastructure designed to run large AI language models across its global network. As these models rely on costly hardware and must handle large…

This paper was accepted at the Fifth Workshop on Natural Language Generation, Evaluation, and Metrics at ACL 2026. Tool-calling agents are evaluated on tool selection, parameter accuracy, and…

In this post, we take a deeper look at how RLAIF (reinforcement learning from AI feedback), or RL with an LLM as a judge, can be applied effectively to Amazon Nova models.

A large language model is a type of AI trained on vast amounts of text to predict and generate language. LLMs learn statistical patterns across billions of examples and can perform tasks like summarization, translation, coding, and reasoning without being explicitly programmed for each task. Examples include GPT-4, Claude 3, Gemini, and Llama 3.
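To make "predict and generate" concrete, here is a deliberately tiny analogue: a bigram model that counts which word follows which and then samples from those counts. Real LLMs use transformers over subword tokens and learn far richer patterns, so treat this only as an illustration of the next-token objective, not of how LLMs are built. The corpus is made up.

```python
import random
from collections import Counter, defaultdict
from typing import Optional

def train_bigram(corpus: str) -> dict:
    """Count how often each word follows each other word."""
    words = corpus.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts: dict, word: str) -> Optional[str]:
    """Greedy prediction: the follower seen most often in training."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def generate(counts: dict, start: str, length: int, seed: int = 0) -> str:
    """Sample one word at a time, weighting each follower by its count."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:
            break  # no observed continuation
        candidates, weights = zip(*followers.items())
        out.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(out)

model = train_bigram("the model reads text . the model predicts the next word")
print(predict_next(model, "the"))  # model
print(generate(model, "the", 6))
```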

GPT-4 (OpenAI), Claude (Anthropic), and Gemini (Google DeepMind) are competing frontier LLMs from different labs. The labs disclose few architectural details, so the models are mostly distinguished by training philosophy and stated strengths: GPT-4 set an early high bar for multi-step reasoning; Claude emphasizes safety and long context windows; Gemini was built natively multimodal. They compete across benchmarks for coding, reasoning, and instruction-following.

Fine-tuning is the process of further training a pre-trained LLM on a smaller, task-specific dataset to improve its performance on particular use cases — like customer support, medical coding, or legal document analysis. Techniques include supervised fine-tuning (SFT), RLHF (reinforcement learning from human feedback), and parameter-efficient methods like LoRA.
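LoRA freezes the pre-trained weight matrix W and learns a low-rank update BA, so an adapted layer computes Wx + (alpha/r)·BAx. Because B is initialized to zero, the adapted layer starts out identical to the frozen one. The pure-Python sketch below shows that forward pass and the parameter savings; the toy matrices, the 4096 × 4096 shape, and rank 8 are hypothetical, and real fine-tuning would use a framework such as PyTorch (for example through Hugging Face's PEFT library) rather than nested lists.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def lora_forward(W, A, B, x, alpha, r):
    """h = W x + (alpha / r) * B (A x). W is frozen; only A (r x d_in) and B (d_out x r) train."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / r
    return [h + scale * d for h, d in zip(base, delta)]

def trainable_params(d_in, d_out, r):
    """Trainable parameters: full fine-tuning of one matrix vs. a rank-r LoRA update."""
    return d_in * d_out, r * (d_in + d_out)

# Toy 2x2 layer with rank r = 1. B starts at zero, so output equals the frozen layer.
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[0.5, -0.5]]
B = [[0.0], [0.0]]
x = [2.0, 3.0]
print(lora_forward(W, A, B, x, alpha=2, r=1))  # [2.0, 3.0]

B_trained = [[1.0], [0.0]]  # pretend training has moved B
print(lora_forward(W, A, B_trained, x, alpha=2, r=1))  # [1.0, 3.0]

# Hypothetical 4096 x 4096 projection with rank 8.
full, lora = trainable_params(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```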

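LLM benchmarks are standardized tests used to compare model capabilities. Common benchmarks include MMLU (general knowledge across 57 subjects), HumanEval (Python code generation), MATH (competition mathematics), GSM8K (grade-school math word problems), and BIG-Bench. However, benchmark saturation (top models scoring near the ceiling, so a test no longer separates them) and contamination or overfitting (test items leaking into training data, or models tuned specifically for the tests) have made real-world capability evaluation increasingly important.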
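Coding benchmarks such as HumanEval are usually reported as pass@k: the probability that at least one of k sampled completions passes a problem's unit tests. The sketch below uses the unbiased estimator introduced alongside HumanEval, 1 − C(n−c, k)/C(n, k) for n samples of which c pass, averaged over problems; the per-problem pass counts are hypothetical.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them pass the tests."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical results: 10 samples per problem; number of passing samples per problem.
passing = [0, 3, 10]
for k in (1, 5):
    score = sum(pass_at_k(10, c, k) for c in passing) / len(passing)
    print(f"pass@{k} = {score:.3f}")
# pass@1 = 0.433
# pass@5 = 0.639
```

Estimating from n > k samples, rather than drawing exactly k, gives a lower-variance estimate of the same quantity.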