Inference

15 articles

Article: Local-First AI Inference: A Cloud Architecture Pattern for Cost-Effective Document Processing

The Local-First AI Inference pattern routes 70–80% of documents to deterministic local extraction at zero API cost, reserving Azure OpenAI calls for edge cases and flagging low-confidence results for human…

Adaptive Parallel Reasoning: The Next Paradigm in Efficient Inference Scaling

Overview of adaptive parallel reasoning. What if a reasoning model could decide for itself when to decompose and parallelize independent subtasks, how many concurrent threads to spawn, and how to…

vLLM V0 to V1: Correctness Before Corrections in RL

Google's New TPU Generation Is Specifically Designed for Agents and SOTA Model Training

Google has unveiled a new generation of Tensor Processing Units (TPUs), featuring two specialized chips designed to accelerate model training and agent workflows, which require continuous, multi-step reasoning and action…

SpecMD: A Comprehensive Study on Speculative Expert Prefetching

Mixture-of-Experts (MoE) models enable sparse expert activation, meaning that only a subset of the model's parameters is used for each token during inference. However, to translate this sparsity into practical performance, an…

Stochastic KV Routing: Enabling Adaptive Depth-Wise Cache Sharing

Serving transformer language models with high throughput requires caching key-value (KV) pairs to avoid redundant computation during autoregressive generation. The memory footprint of KV caching is significant and heavily impacts serving…

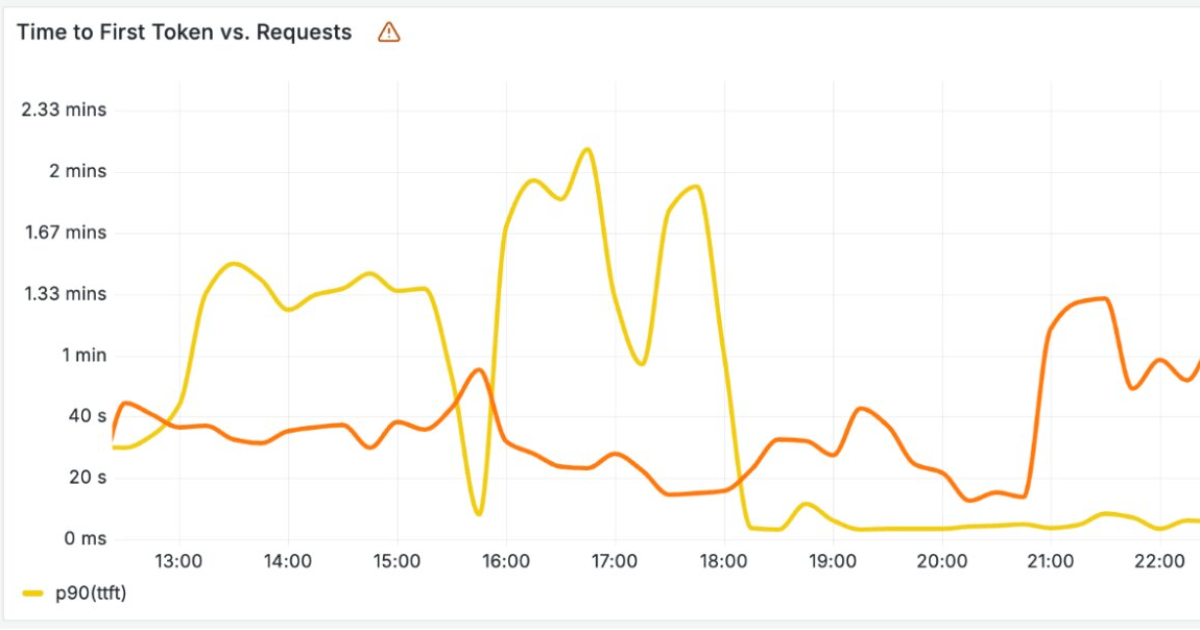

Capacity-aware inference: Automatic instance fallback for SageMaker AI endpoints

Today, Amazon SageMaker AI introduces capacity-aware instance pools for new and existing inference endpoints. You define a prioritized list of instance types, and SageMaker AI automatically works through your…

Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill

Why reasoning models dramatically increase token usage, latency, and infrastructure costs in production systems.

Cloudflare Builds High-Performance Infrastructure for Running LLMs

Cloudflare has recently announced new infrastructure designed to run large AI language models across its global network. As these models rely on costly hardware and must handle large volumes of…

Reinforced Agent: Inference-Time Feedback for Tool-Calling Agents

This paper was accepted at the Fifth Workshop on Natural Language Generation, Evaluation, and Metrics at ACL 2026. Tool-calling agents are evaluated on tool selection, parameter accuracy, and scope recognition,…

DeepInfra on Hugging Face Inference Providers 🔥

With Nvidia Groq 3, the Era of AI Inference Is (Probably) Here

This week, over 30,000 people are descending upon San Jose, Calif., to attend Nvidia GTC, the so-called Super Bowl of AI, a nickname that may or may not have been coined…

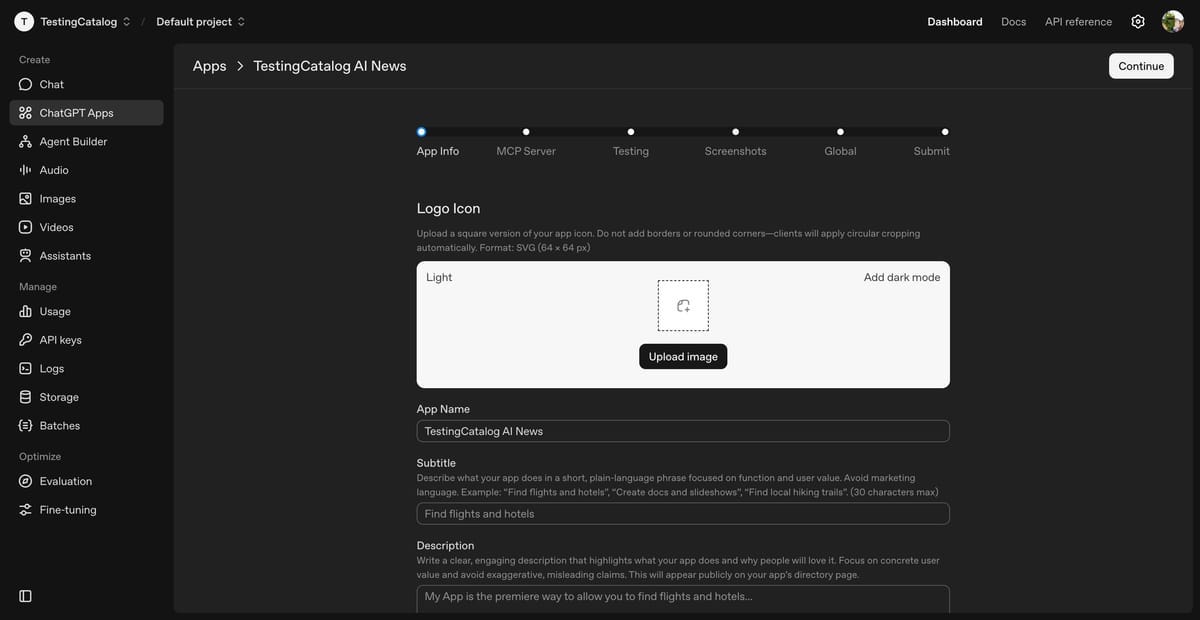

Last Week in AI #330 - Groq->Nvidia, ChatGPT Apps, US AI Genesis Mission

Nvidia is buying AI chip startup Groq's assets for about $20 billion in the largest deal on record, OpenAI opens ChatGPT to third-party apps via its Platform, and more!

Torch compile caching for inference speed

Cache your compiled models for faster boot and inference times.