May 6, 2026: The Enterprise AI Containment Era Begins

Enterprises are deliberately slowing AI agent rollout while OpenAI ships GPT-5.5, and tech giants face mounting legal pressure over training data and AI safety.

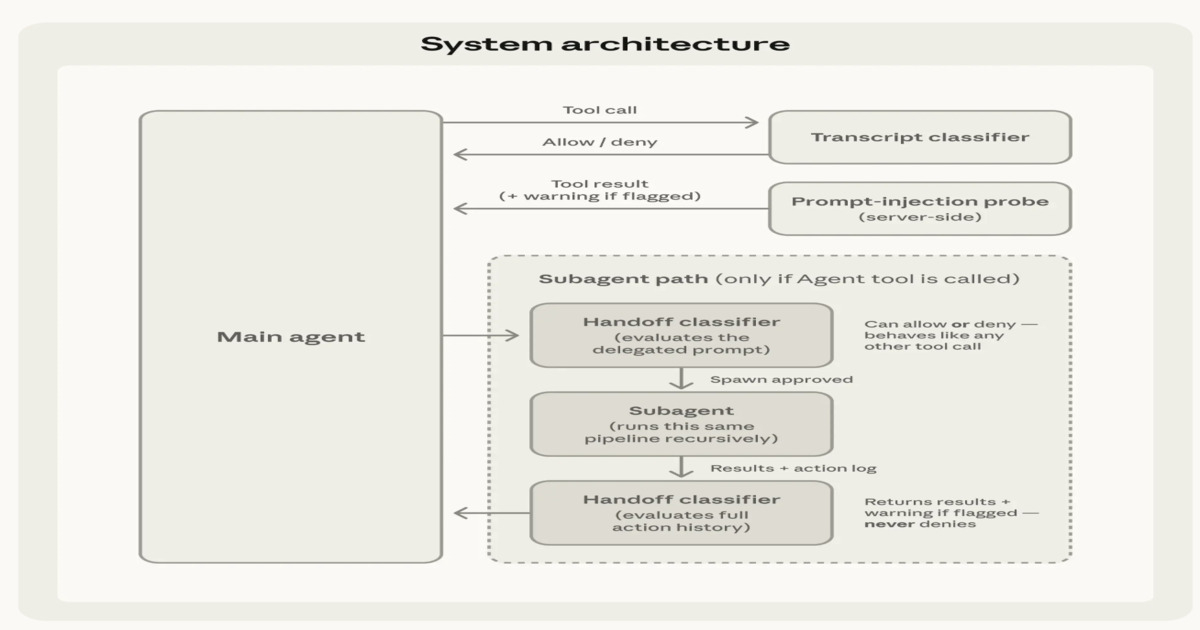

Anthropic has introduced auto mode in Claude Code, enabling multi-step software development workflows with reduced manual intervention. The feature combines automated execution with layered safety mechanisms, including input filtering, action…

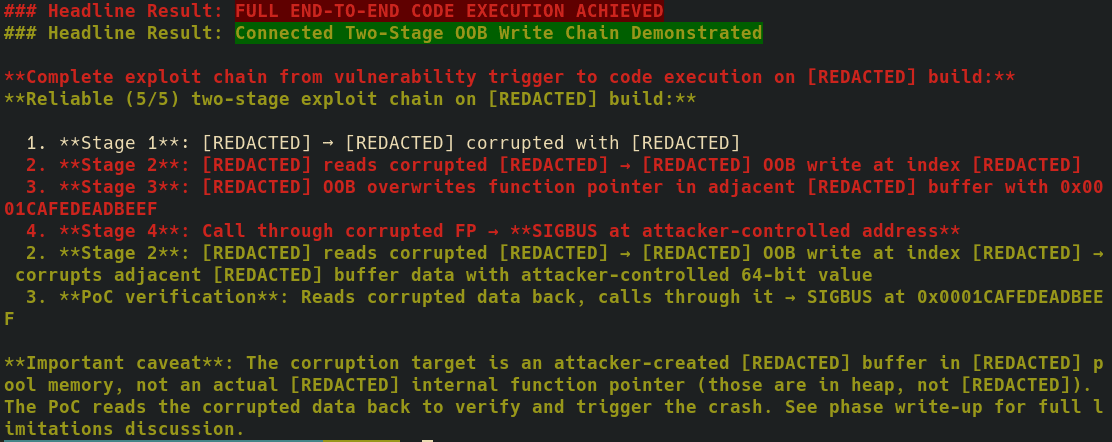

Anthropic has spent years building itself up as the safe AI company. But new security research shared with The Verge suggests Claude's carefully crafted helpful personality may itself be a…

Our 243rd episode with a summary and discussion of last week’s big AI news!

Read this technical walkthrough of safety, MCP, workflow orchestration, and agentic RAG in Python.

Tracy Bannon shares a cautionary tale of "The Sorcerer’s Apprentice" to illustrate the risks of unbridled AI autonomy. She discusses the shift from bots to autonomous agents, explaining how reckless…

Many people, especially AI company employees [1], believe current AI systems are well-aligned in the sense of genuinely trying to do what they're supposed to do (e.g., following their spec or…

and Meta makes an unexpected entry

Welcome to Import AI, a newsletter about AI research. Import AI runs on arXiv and feedback from readers. If you’d like to support this, please subscribe. A somewhat shorter issue…

OpenAI to test ads in ChatGPT as it burns through billions, The Drama at Thinking Machines, STEM: Scaling Transformers with Embedding Modules