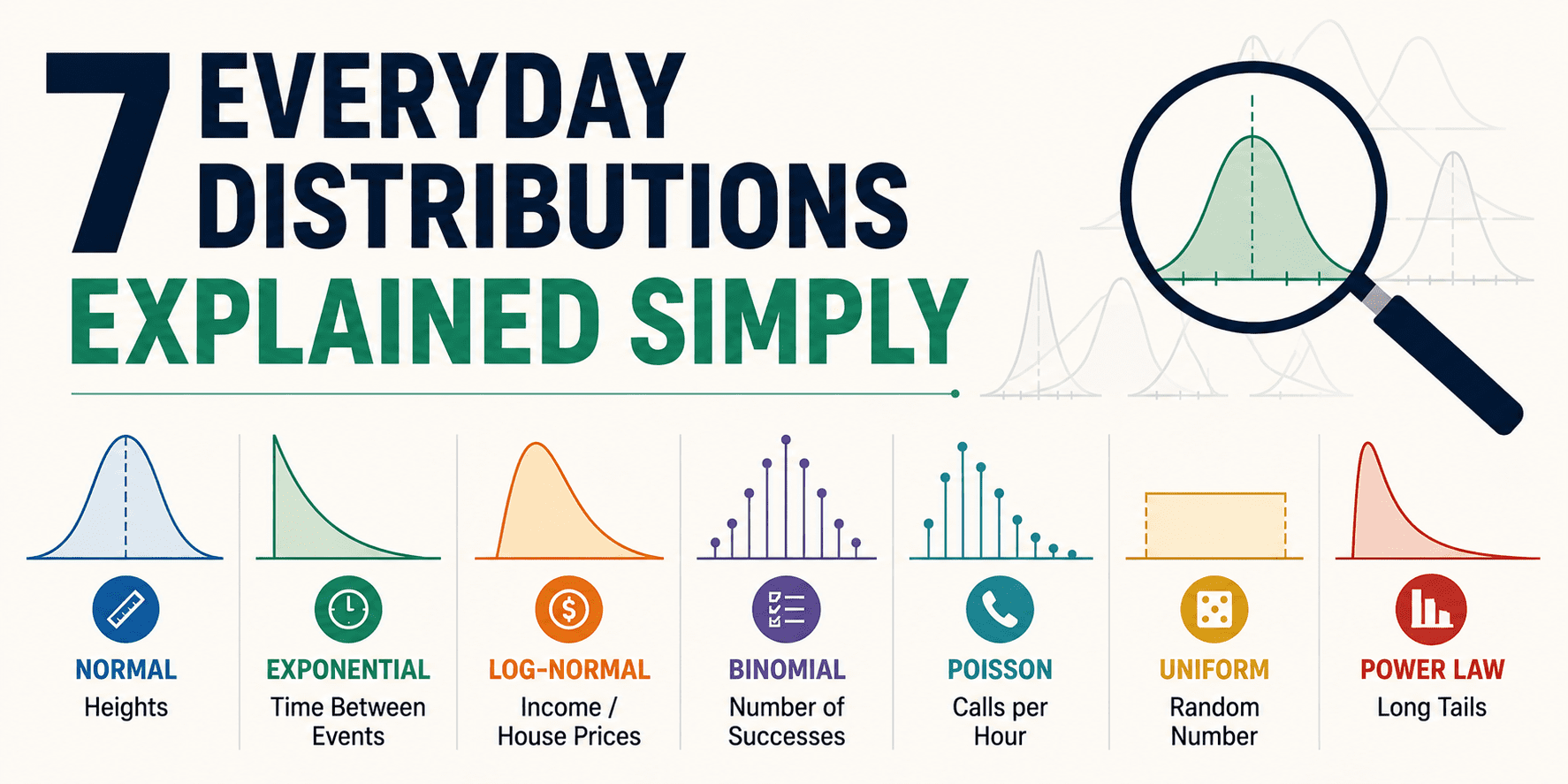

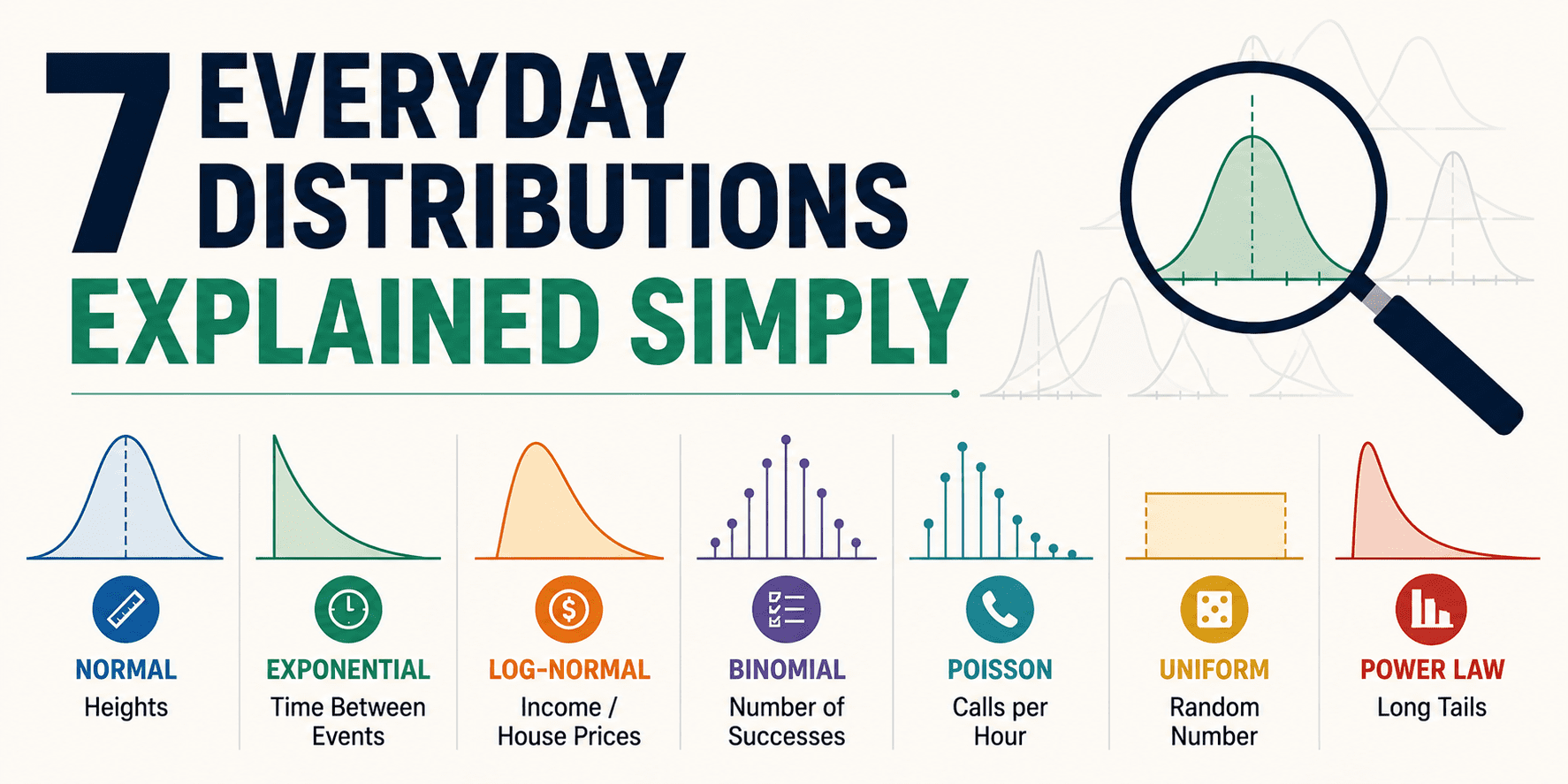

KDNuggets published a pedagogical primer on statistical distributions framed for accessibility rather than rigor, presenting seven common probability patterns through everyday analogies and concrete use cases. The piece eschews mathematical formalism in favor of narrative intuition, positioning distributions as interpretive lenses for understanding real-world variation rather than abstract theoretical objects. While ostensibly a "tools" piece about statistical literacy, the article reveals something more consequential about the current state of AI infrastructure and the widening gap between who builds ML systems and who deploys them.

The timing reflects a maturing recognition within data science education that traditional gatekeeping around statistics has created a accessibility crisis. As organizations scaled their AI adoption throughout the 2020s, they discovered that most practitioners lacked deep probabilistic grounding—yet still made critical decisions about model selection, uncertainty quantification, and risk assessment. Universities and online platforms have historically taught distributions through calculus-heavy approaches that filtered out curious practitioners who lacked mathematical prerequisites. KDNuggets, as the field's dominant practitioner publication, now addresses this by explicitly inverting pedagogical priority: intuition before formalism, pattern recognition before proofs. This represents a quiet admission that the field's knowledge transmission mechanisms have failed the expanding practitioner base.

The substantive value here lies not in novelty but in democratization timing. Distributions are the conceptual bedrock of everything from generative model sampling to Bayesian inference to uncertainty quantification in LLM outputs. As AI systems proliferate into production environments—recommendation systems, predictive maintenance, hiring tools—the practitioners implementing and monitoring these systems increasingly need distribution literacy without necessarily needing to derive likelihood functions. The article's framing of uniform distributions as a "clean baseline assumption" and binomial distributions as implicit infrastructure behind conversion metrics directly addresses the gap between data practitioners who understand distributions intuitively versus those who inherited statistical knowledge from computer science rather than statistics departments. This distinction has real consequences for how teams validate model assumptions and catch distribution shift.

The article primarily impacts three constituencies. Junior data scientists and self-taught ML engineers represent the largest group—those hired for technical skills in programming or math but who entered the field through accelerated bootcamps rather than degree programs. They benefit immediately from permission to approach distributions through narrative rather than calculus. Analytics practitioners and product managers who increasingly need to interpret model outputs and communicate uncertainty also stand to gain literacy without requiring prerequisite courses. Most significantly, organizations building AI infrastructure urgently need distributed statistical literacy across teams; a data engineer who understands that "normal distribution assumptions don't hold here" catches problems before they cascade into production failures.

This reflects a subtle competitive positioning within the educational AI landscape. ChatGPT and Claude have begun serving as on-demand statistics tutors, but they cannot yet provide the kind of pattern-recognition fluency that comes from guided exploration of canonical examples. Competitors like DataCamp and Coursera offer more structured, paid pathways; KDNuggets provides the free, digestible introduction that creates demand for deeper learning. The implicit market play: establish distribution literacy as table-stakes, then guide practitioners toward more sophisticated inference training where KDNuggets maintains influence through its community and content authority. This positions knowledge democratization as a competitive moat rather than mere altruism.

What matters next is whether this accessibility trend cascades into model evaluation practices. The real question isn't whether practitioners understand what a normal distribution is—it's whether teams building production AI systems actually validate distributional assumptions about their data and alert on distribution shift. The article's success would be measured not in readership but in adoption: do fewer ML systems fail in production because someone recognized that their data violated uniformity assumptions? Does distribution literacy become as baseline as "know your training-test split"? The coming focus should be monitoring whether accessibility to statistical intuition translates into more robust AI system validation or simply creates a larger population of practitioners who recognize a pattern without understanding its implications.

This article was originally published on KDNuggets. Read the full piece at the source.

Read full article on KDNuggets →DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to KDNuggets. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.