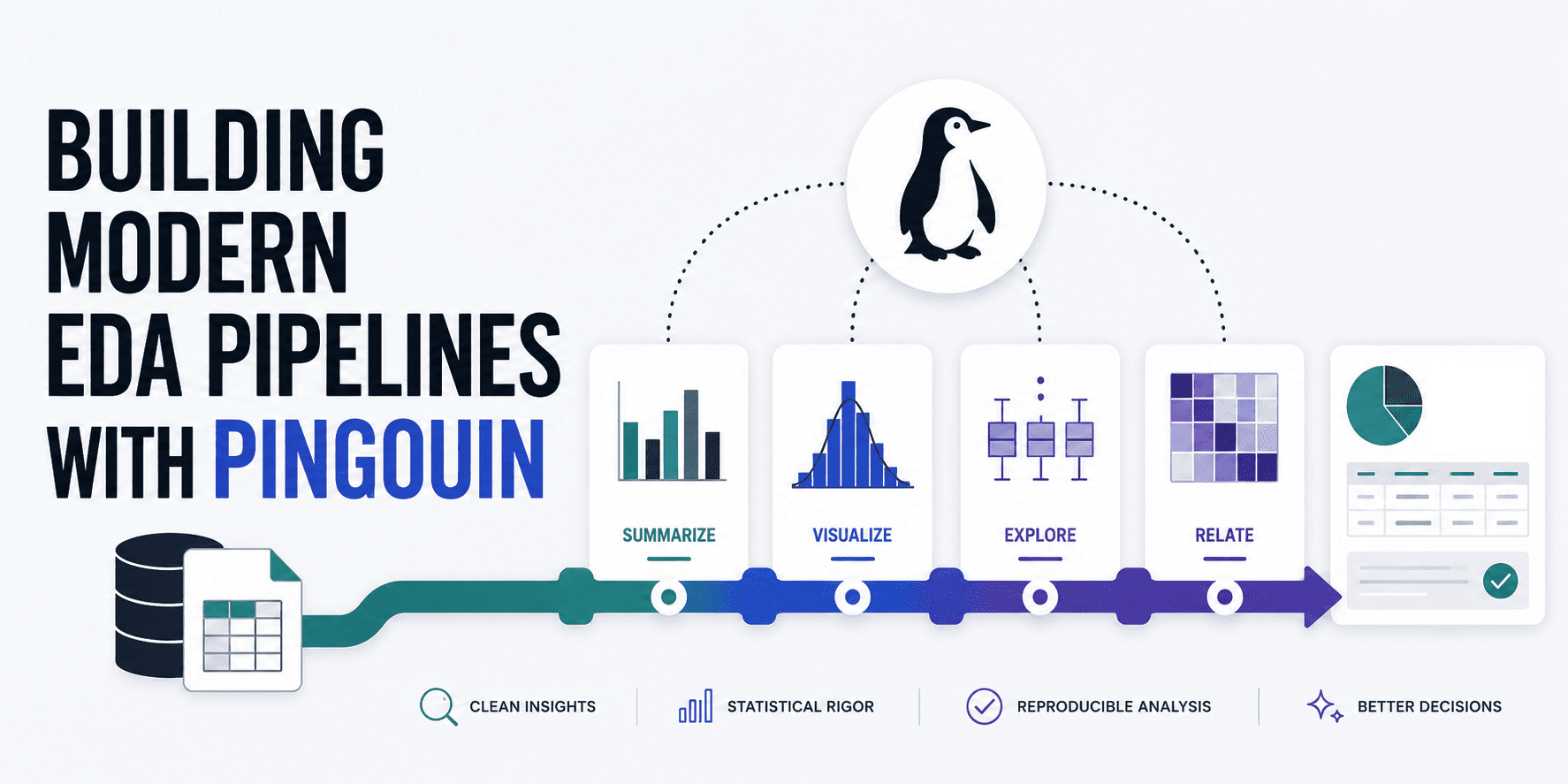

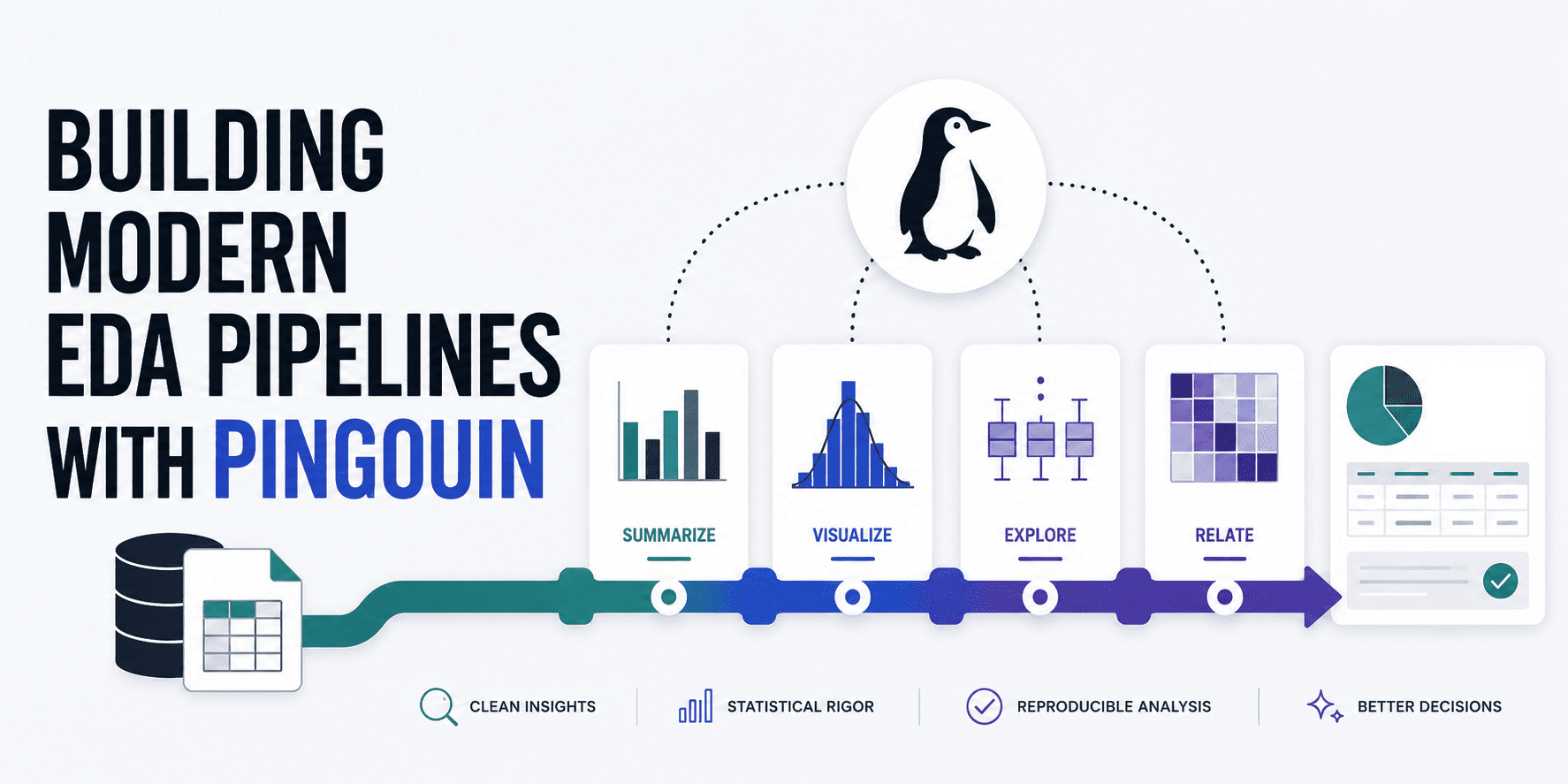

A new technical guide on Pingouin shows how practitioners can build automated statistical validation pipelines that run before data reaches a machine learning model. The article walks through a practical workflow that uses Pingouin as a bridge between SciPy and pandas, focusing on univariate normality testing with the Shapiro-Wilk test on wine quality datasets. The core insight is unglamorous but critical: most real-world datasets fail basic statistical assumptions out of the box. Those violations call for deliberate preprocessing decisions; left unchecked, they surface downstream disguised as model problems.

This tutorial arrives at a telling moment in AI development. The industry has spent years optimizing architectures, scaling datasets, and engineering novel loss functions, while the foundational practice of rigorous exploratory data analysis (EDA) remains surprisingly underdeveloped in many production pipelines. Pingouin's value proposition reflects a broader maturation of the field: it runs a statistical assumption test in a single function call instead of making users piece together several libraries and their documentation. The AI ecosystem is finally acknowledging that the mathematically grounded prerequisites of classical statistics haven't become obsolete with deep learning; they've simply been neglected. Organizations shipping unreliable models often trace failures back to preventable data issues that proper EDA would have surfaced immediately.

The implications extend beyond tooling. As regulatory scrutiny of AI model transparency intensifies, the ability to show that data assumptions were explicitly validated before modeling becomes increasingly valuable. Teams that embed statistical rigor into their pipelines now will be better positioned to defend model decisions to auditors and stakeholders. Conversely, practitioners who keep treating EDA as optional visualization work will accumulate technical debt. Watch for increased adoption of statistical validation frameworks in enterprise ML infrastructure, particularly in risk-sensitive domains where an assumption violation costs regulatory penalties or financial losses rather than merely a drop in accuracy.

This article was originally published on KDNuggets. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to KDNuggets. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.