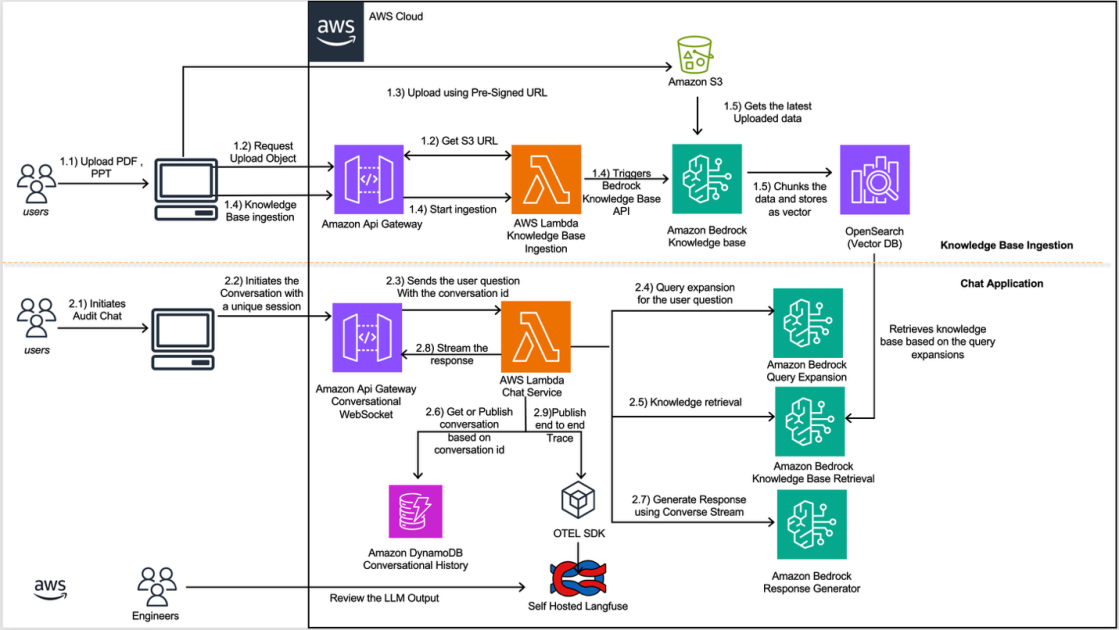

Halliburton and AWS announced a generative AI assistant built on Amazon Bedrock that turns seismic data processing workflows from manual configuration tasks into conversational interactions. The system, integrated into Halliburton's Seismic Engine, uses Amazon Nova as its language model backbone alongside knowledge bases and DynamoDB to parse natural language requests and translate them into executable processing pipelines. The partners report a 95% acceleration in workflow creation, a gain that addresses a longstanding bottleneck: geoscientists previously spent hours manually configuring approximately 100 interdependent specialized tools. The solution employs an intent router powered by Amazon Nova Lite to distinguish between workflow generation requests and documentation queries, then dispatches each to the appropriate subsystem. This is more than an incremental automation gain; it restructures how practitioners interact with enterprise software built for highly technical domains.
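
The routing step described above can be sketched in a few lines. This is a minimal illustration, not Halliburton's implementation: the intent labels, the handler names, and the keyword-based `classify_intent` stand-in are all hypothetical. In the described system the classification is done by Amazon Nova Lite; a simple rule takes its place here so the example runs without any cloud access.

```python
from typing import Callable, Dict

# Hypothetical intent labels; the source only says the router separates
# workflow generation requests from documentation queries.
WORKFLOW = "workflow_generation"
DOCS = "documentation_query"


def classify_intent(request: str) -> str:
    """Stand-in classifier (assumption): a keyword rule instead of a model call."""
    text = request.lower()
    workflow_cues = ("create", "build", "generate", "run", "pipeline")
    if any(cue in text for cue in workflow_cues):
        return WORKFLOW
    return DOCS


def handle_workflow(request: str) -> str:
    # Placeholder for the subsystem that assembles a processing pipeline.
    return f"[workflow] drafting pipeline for: {request}"


def handle_docs(request: str) -> str:
    # Placeholder for the subsystem that answers from a knowledge base.
    return f"[docs] looking up: {request}"


HANDLERS: Dict[str, Callable[[str], str]] = {
    WORKFLOW: handle_workflow,
    DOCS: handle_docs,
}


def route(request: str, classifier: Callable[[str], str] = classify_intent) -> str:
    """Classify the request, then dispatch it to the matching handler."""
    intent = classifier(request)
    # Fall back to the documentation handler on an unrecognized label.
    handler = HANDLERS.get(intent, handle_docs)
    return handler(request)


if __name__ == "__main__":
    print(route("Create a pipeline that applies a bandpass filter"))
    print(route("What does the deconvolution tool do?"))
```

Passing the classifier as a parameter keeps the dispatch logic independent of how intent is determined, so a model-backed classifier could be swapped in without touching the handlers.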

The move reflects a maturation in enterprise generative AI adoption, where the initial hype around general-purpose chatbots is giving way to vertical applications tailored to specific knowledge domains. Halliburton's seismic processing challenge is emblematic of a broader category of enterprise software: tools so specialized that only experts with years of training can use them effectively. The energy sector has long treated such friction as inevitable—part of the cost of expertise. But conversational AI has reached the point where it can encode deep technical knowledge into a system that translates between expert terminology and the precise procedural steps required for domain software. AWS's Bedrock platform, launched in 2023 but still establishing its enterprise footprint, provides the necessary scaffolding: managed access to language models without the operational burden of self-hosting, integrated knowledge retrieval systems, and the infrastructure to deploy at scale. Halliburton partnering with AWS through its Generative AI Innovation Center signals that the company sees this technology as strategically important enough to warrant deep collaboration with a cloud provider.

What distinguishes this announcement from typical AI productivity gains is its implications for how specialized software gets designed and sold. Enterprise software vendors have historically guarded complexity as a competitive moat—difficult-to-use tools create customer lock-in because the switching cost includes retraining. Lowering that barrier through conversational interfaces threatens that model, but it also creates an opportunity for vendors to expand addressable markets by making their tools accessible to less specialized users. The 95% workflow acceleration claim matters not just for raw productivity, but because it suggests AI-powered interfaces can genuinely represent the problem domain, not just simulate understanding. This is different from using ChatGPT as a search engine for documentation; the system must actually understand the constraints and dependencies between seismic processing tools. That level of domain encoding indicates we're moving beyond novelty AI deployments into systems where the AI becomes integral to how domain experts work.

The practical impact splits across several constituencies. Geoscientists and data scientists gain direct access to tools that previously required hiring specialized workflow engineers or undergoing extensive training. Organizations in energy, mining, and geophysics benefit from reduced project timelines and the ability to deploy personnel more flexibly. The impact also extends to software vendors, who now face pressure to add conversational interfaces or risk user dissatisfaction. Halliburton's own engineering team stands to benefit through improved customer success metrics, since supporting users becomes cheaper when they can self-serve workflow construction. The economics shift when software training can be partially replaced by conversational guidance from a language model rather than by human documentation specialists.

The announcement tilts competitive advantage toward AWS in the enterprise conversational AI space. Azure and Google Cloud offer comparable language models and infrastructure, but AWS has built stronger partnerships with established enterprise vendors and positioned Bedrock as a managed service specifically designed for vertical applications. By having Halliburton, a global oilfield services giant, publicly report 95% efficiency gains using Bedrock, AWS establishes proof points that competitors must match. The architecture also matters strategically: AWS is providing not merely a language model API but a platform layer that includes knowledge bases, intent routing, and integration pathways, all of which reduce the engineering effort required to deploy domain-specific conversational systems. That platform positioning could translate into higher switching costs than plain LLM API access, particularly if vendors build dependencies on those integrations.

The open questions going forward concern generalization and sustainability. Can this approach scale beyond energy sector applications to other complex domains (pharmaceutical research, chip design, financial modeling), or does seismic processing occupy a sweet spot of well-documented procedures and relatively structured input that other domains lack? Cost remains largely unaddressed in the announcement; running inference at scale against sophisticated knowledge bases could prove expensive at enterprise volumes. Halliburton's 95% acceleration metric also deserves scrutiny: acceleration compared to what baseline? Accelerating experienced experts is different from accelerating less skilled users, and the real business value might lie in the latter. Finally, there is the organizational risk that conversational interfaces erode tacit knowledge among domain specialists, potentially creating new vulnerabilities if the AI system fails or becomes unavailable. The next 18 months will reveal whether this represents a genuine inflection point in enterprise software design or a compelling but narrowly applicable success story.
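
The baseline question can be made concrete with simple arithmetic. The sketch below assumes the 95% figure means a 95% reduction in workflow creation time, which the announcement does not spell out, and the baseline durations are hypothetical, chosen only to show how the absolute saving depends on where a user starts.

```python
def time_after_acceleration(baseline_minutes: float, reduction: float = 0.95) -> float:
    """Remaining time, assuming `reduction` is the fraction of time removed."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return baseline_minutes * (1.0 - reduction)


# Hypothetical baselines (not from the announcement).
baselines = {"experienced expert": 60.0, "newer user": 480.0}

for label, minutes in baselines.items():
    after = time_after_acceleration(minutes)
    saved = minutes - after
    print(f"{label}: {minutes:.0f} min -> {after:.0f} min (saves {saved:.0f} min)")
```

Under these assumed numbers, the same percentage saves about 57 minutes for the expert and about 456 minutes for the newer user, which is why the choice of baseline changes the business case.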

This article was originally published on AWS Machine Learning Blog. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to AWS Machine Learning Blog. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.