Do text embeddings perfectly encode text?

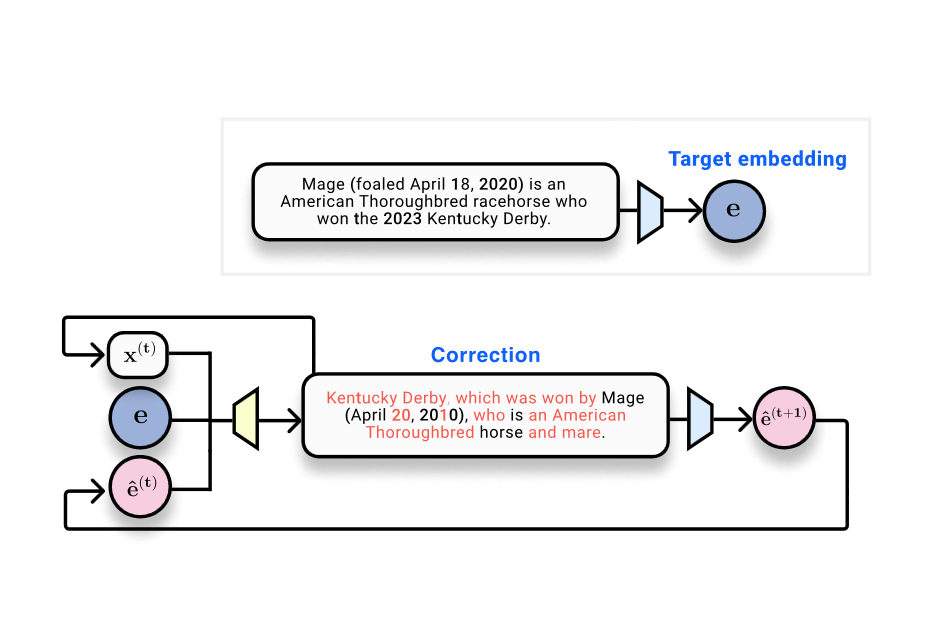

'Vec2text' can accurately invert embeddings back into the text they encode, highlighting an urgent need to revisit security protocols around embedded data.
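Vec2text's actual method relies on trained neural models to refine a text guess, and it is not reproduced here. The sketch below is a hypothetical toy that illustrates the underlying risk. It assumes a simple bag-of-words embedding (`VOCAB`, `embed`, and `invert` are illustrative names, not part of any library) and shows that an attacker who can query the embedding function can greedily search for words that push a guess's embedding toward a target vector.

```python
import math
from collections import Counter

# Hypothetical toy embedding: an L2-normalized bag-of-words count vector
# over a small fixed vocabulary. Real text embedders are far richer.
VOCAB = ["the", "patient", "has", "a", "rare", "disease", "cat", "sat", "on", "mat"]

def embed(words):
    """Map a list of words to a unit-length count vector over VOCAB."""
    counts = Counter(w for w in words if w in VOCAB)
    vec = [float(counts[w]) for w in VOCAB]
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec

def cosine(a, b):
    # Inputs are unit-length (or all zeros), so the dot product is the cosine.
    return sum(x * y for x, y in zip(a, b))

def invert(target, max_len=10):
    """Greedily append the vocabulary word that most increases similarity."""
    guess = []
    best = cosine(embed(guess), target)  # the empty guess embeds to zeros
    for _ in range(max_len):
        score, word = max((cosine(embed(guess + [w]), target), w) for w in VOCAB)
        if score <= best + 1e-12:
            break  # no single extra word improves the match
        guess.append(word)
        best = score
    return guess, best

# Usage: the "attacker" sees only the embedding of the secret sentence.
secret = "the patient has a rare disease".split()
recovered, score = invert(embed(secret))
print(recovered, round(score, 6))
```

Because this toy embedding ignores word order, the search recovers which words were present but not their order. Learned embeddings encode much more structure, which is why the leakage highlighted here matters.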



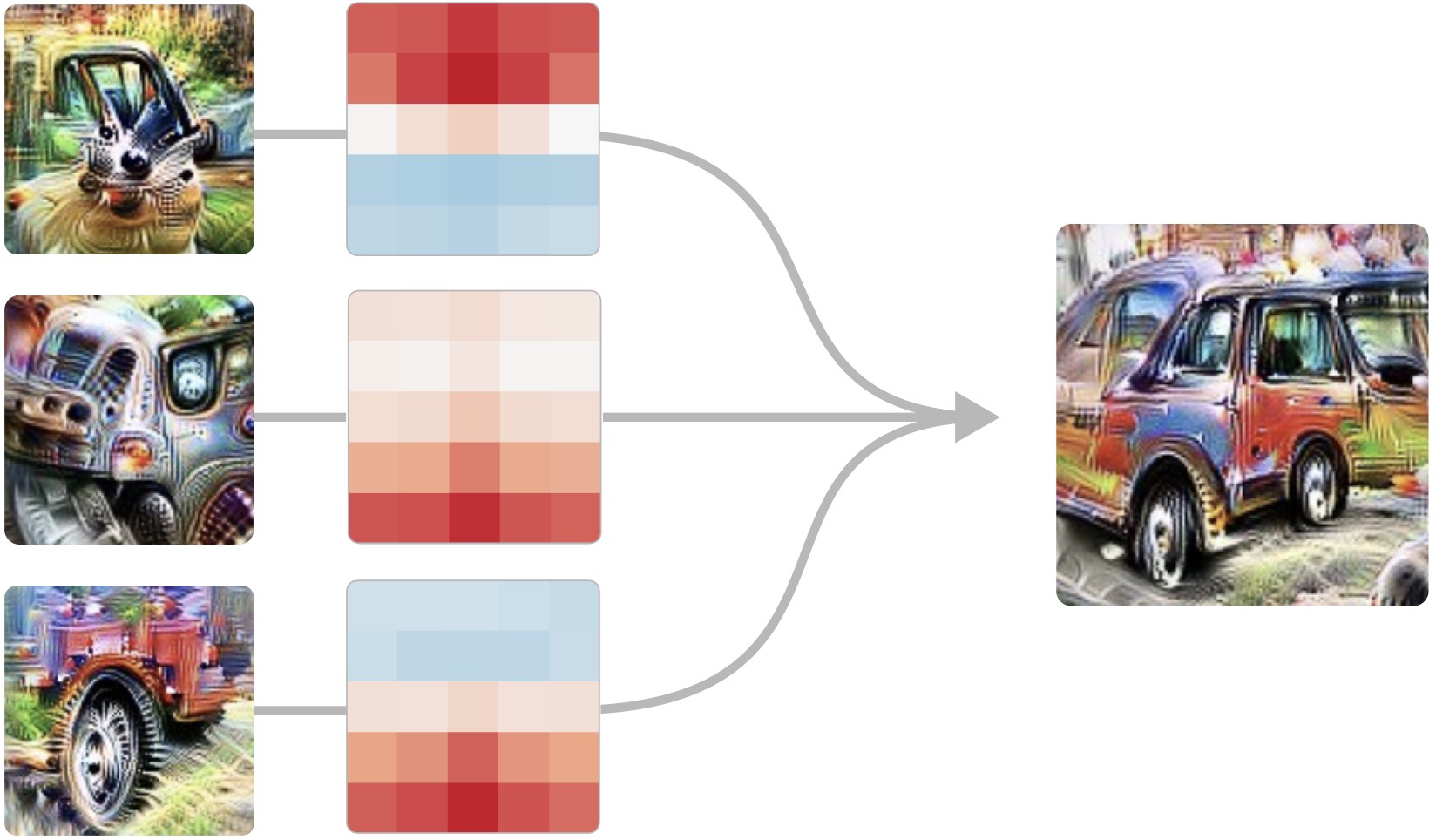

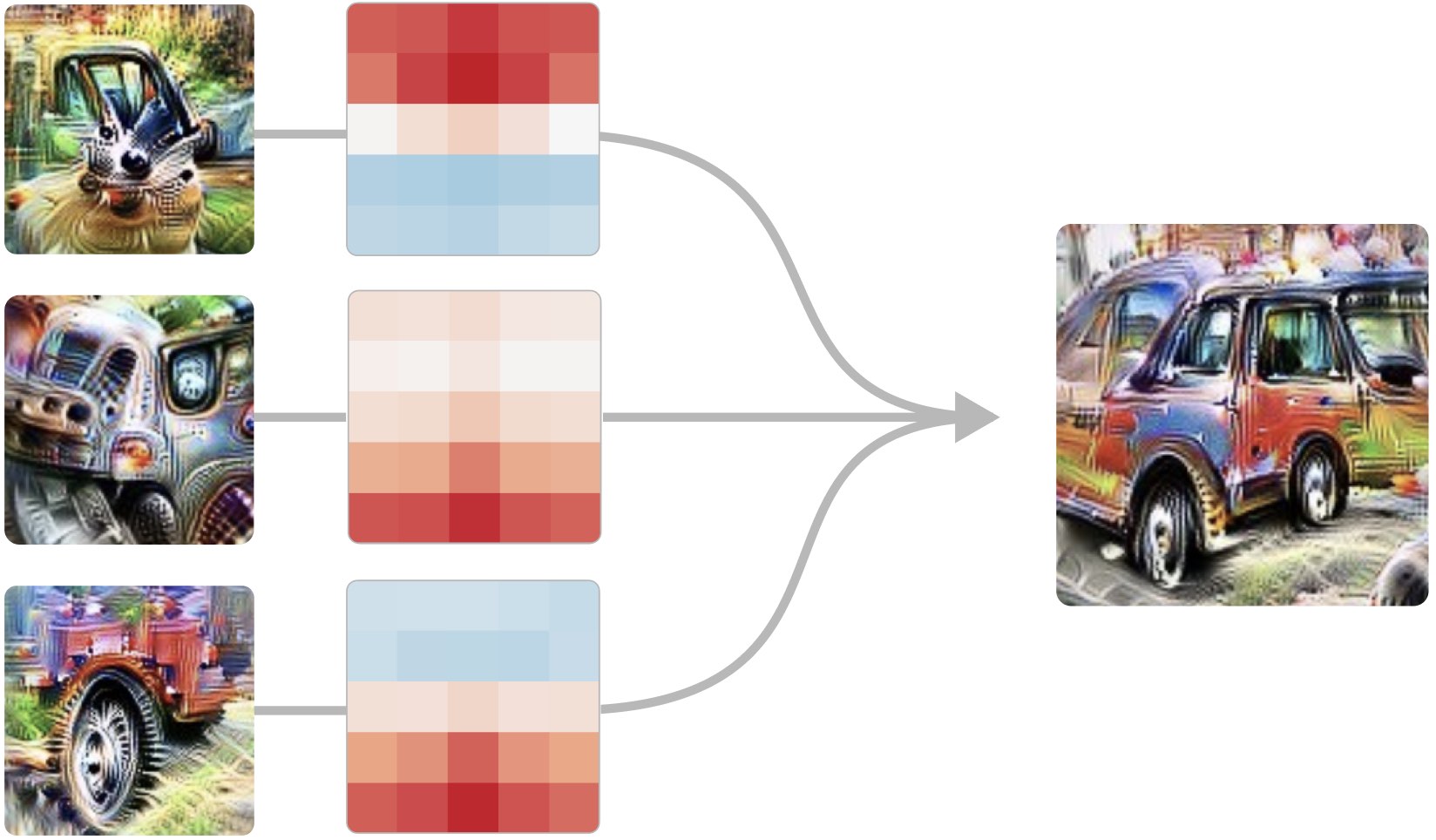

We report the existence of multimodal neurons in artificial neural networks, similar to those found in the human brain.

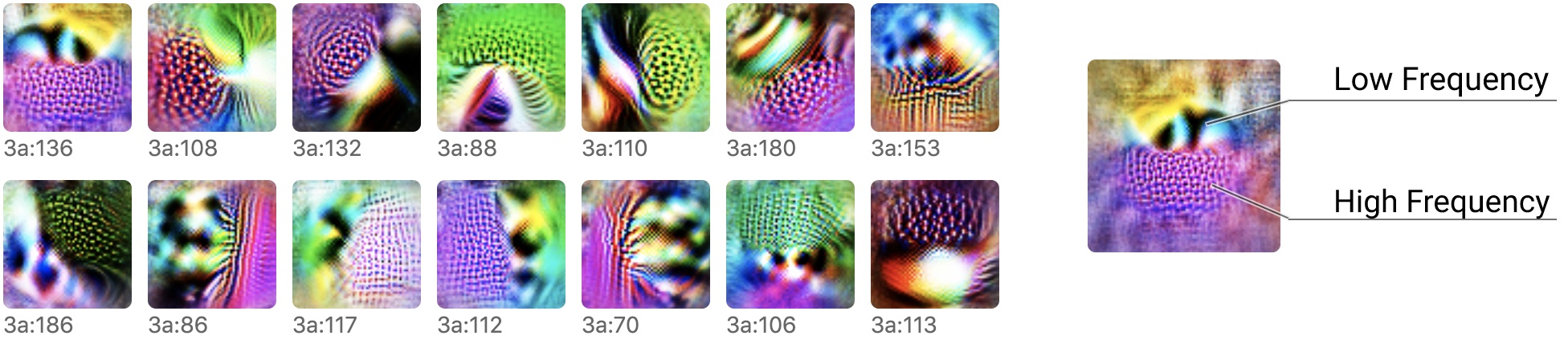

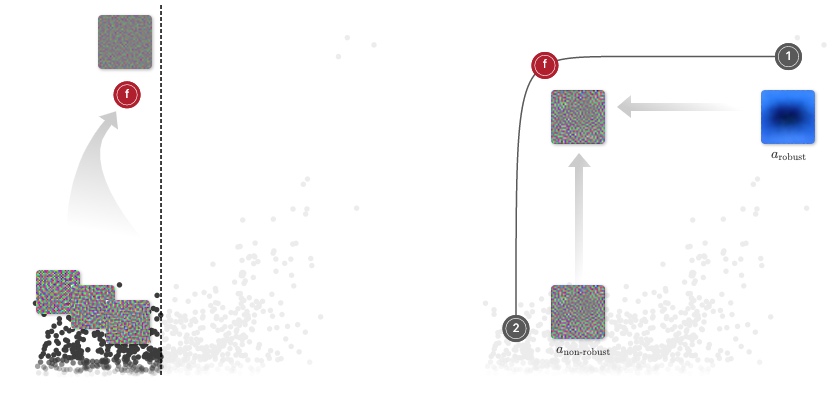

We present techniques for visualizing, contextualizing, and understanding neural network weights.

Reverse engineering the curve detection algorithm from InceptionV1 and reimplementing it from scratch.

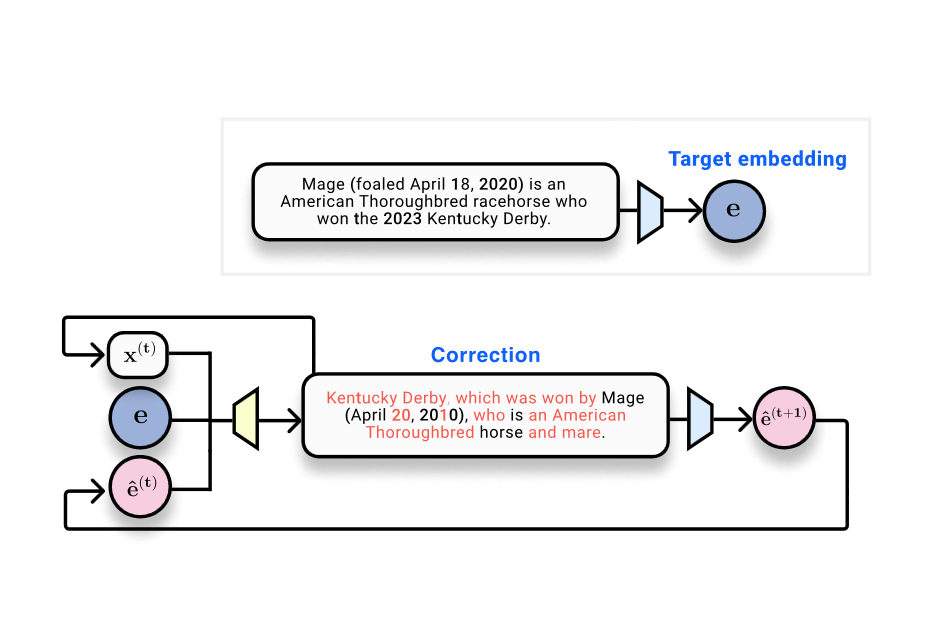

A family of early-vision neurons reacting to directional transitions from high to low spatial frequency.

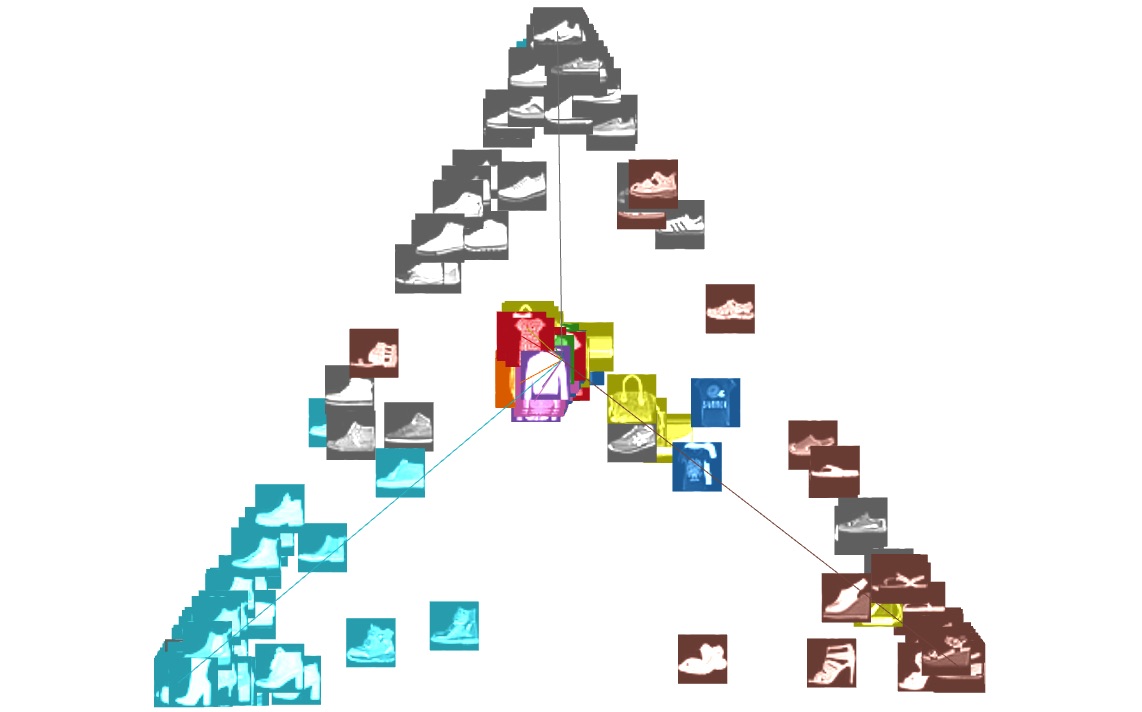

With diverse environments, we can analyze, diagnose and edit deep reinforcement learning models using attribution.
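Attribution assigns each input feature a share of responsibility for a model's output. As one common example (not necessarily the method the article uses), gradient × input multiplies the output's sensitivity to each feature by the feature's value. The sketch below assumes a hypothetical three-feature `toy_model` and estimates gradients with central finite differences so it needs only the standard library.

```python
import math

def toy_model(x):
    """Hypothetical stand-in for a policy network: a scalar score from 3 features."""
    w = [2.0, -1.0, 0.5]
    return math.tanh(sum(wi * xi for wi, xi in zip(w, x)))

def numerical_grad(f, x, eps=1e-6):
    """Central finite-difference estimate of df/dx_i for each feature i."""
    grads = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        grads.append((f(up) - f(down)) / (2 * eps))
    return grads

def gradient_times_input(f, x):
    """Per-feature attribution: gradient of the output times the feature value."""
    return [g * xi for g, xi in zip(numerical_grad(f, x), x)]

# Usage: attribute the score for one hypothetical observation.
obs = [0.3, 0.8, -0.2]
attr = gradient_times_input(toy_model, obs)
print([round(a, 4) for a in attr])
```

A feature gets a large attribution only if the model is sensitive to it and it is actually present in the input. In a real network the gradient would come from automatic differentiation rather than finite differences.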

Part one of a three-part deep dive into the curve neuron family.

An overview of all the neurons in the first five layers of InceptionV1, organized into a taxonomy of 'neuron groups.'

By focusing on linear dimensionality reduction, we show how to visualize many dynamic phenomena in neural networks.
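Principal component analysis (PCA) is one standard linear dimensionality reduction. The article may rely on a different linear method, so treat this only as an illustration of the general idea. The sketch assumes a hypothetical trajectory of 3-D "hidden states," finds the leading principal direction by power iteration on the covariance matrix, and projects each state onto it. A linear projection preserves straight-line structure, so a steady drift in the original space stays a steady drift in the plot.

```python
import math
import random

def top_principal_component(points, iters=200, seed=0):
    """Estimate the mean and leading principal direction of `points`."""
    n, d = len(points), len(points[0])
    mean = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - mean[j] for j in range(d)] for p in points]
    # Covariance matrix C = X^T X / n of the mean-centered data.
    cov = [[sum(r[a] * r[b] for r in centered) / n for b in range(d)]
           for a in range(d)]
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(d)]
    # Power iteration: repeatedly apply C and renormalize.
    for _ in range(iters):
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return mean, v

def project(points, mean, direction):
    """1-D coordinate of each point along `direction` (a linear map)."""
    return [sum((p[j] - mean[j]) * direction[j] for j in range(len(p)))
            for p in points]

# Usage: hypothetical hidden states drifting along (1, 2, 0) with small jitter.
trajectory = [[0.1 * k, 0.2 * k, 0.05 * (-1) ** k] for k in range(10)]
mean, direction = top_principal_component(trajectory)
coords = project(trajectory, mean, direction)
print([round(c, 3) for c in coords])
```

Projecting onto two or three leading directions instead of one gives the familiar 2-D or 3-D scatter plots used to watch representations change over training.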

What can we learn if we invest heavily in reverse engineering a single neural network?

By studying the connections between neurons, we can find meaningful algorithms in the weights of neural networks.

Six comments from the community, with responses from the original authors.