Playing Connect Four with Deep Q-Learning

Solving multiplayer games with function approximation. The post "Playing Connect Four with Deep Q-Learning" appeared first on Towards Data Science.

A review of the Cross-Stage Partial Network paper, and a from-scratch PyTorch implementation. The post "CSPNet Paper Walkthrough: Just Better, No Tradeoffs" appeared first on Towards Data Science.

One scale parameter determines accuracy in rotation-based vector quantization. The post "How a 2021 Quantization Algorithm Quietly Outperforms Its 2026 Successor" appeared first on Towards Data Science.

An encoder (optical system) maps objects to noiseless images, which noise corrupts into measurements. Our information estimator uses only these noisy measurements and a noise model to quantify how well…

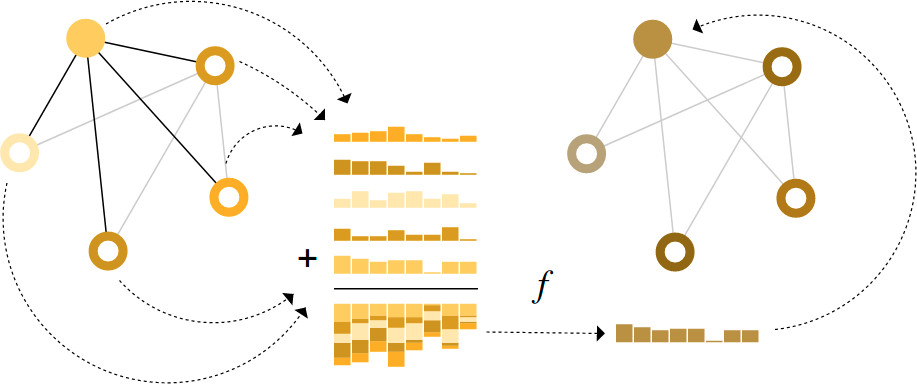

What components are needed for building learning algorithms that leverage the structure and properties of graphs?

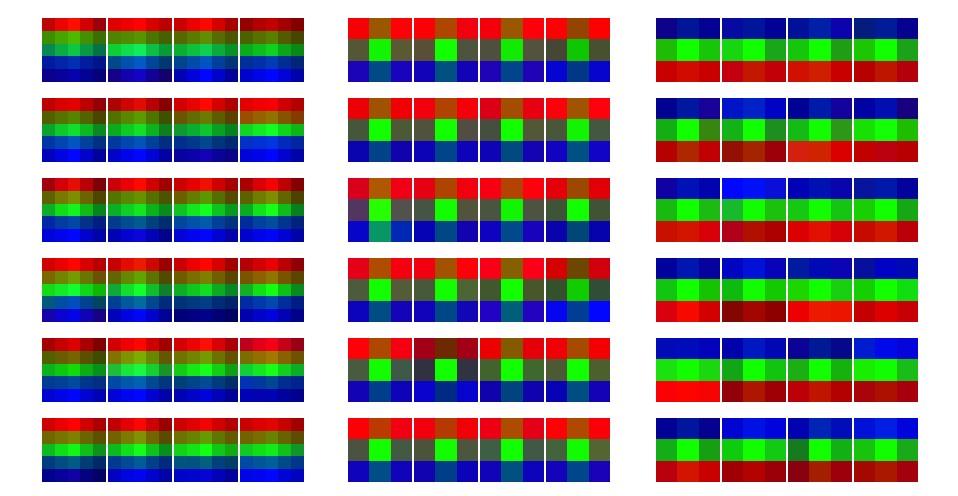

Weights in the final layer of common visual models appear as horizontal bands. We investigate how and why.

We report the existence of multimodal neurons in artificial neural networks, similar to those found in the human brain.

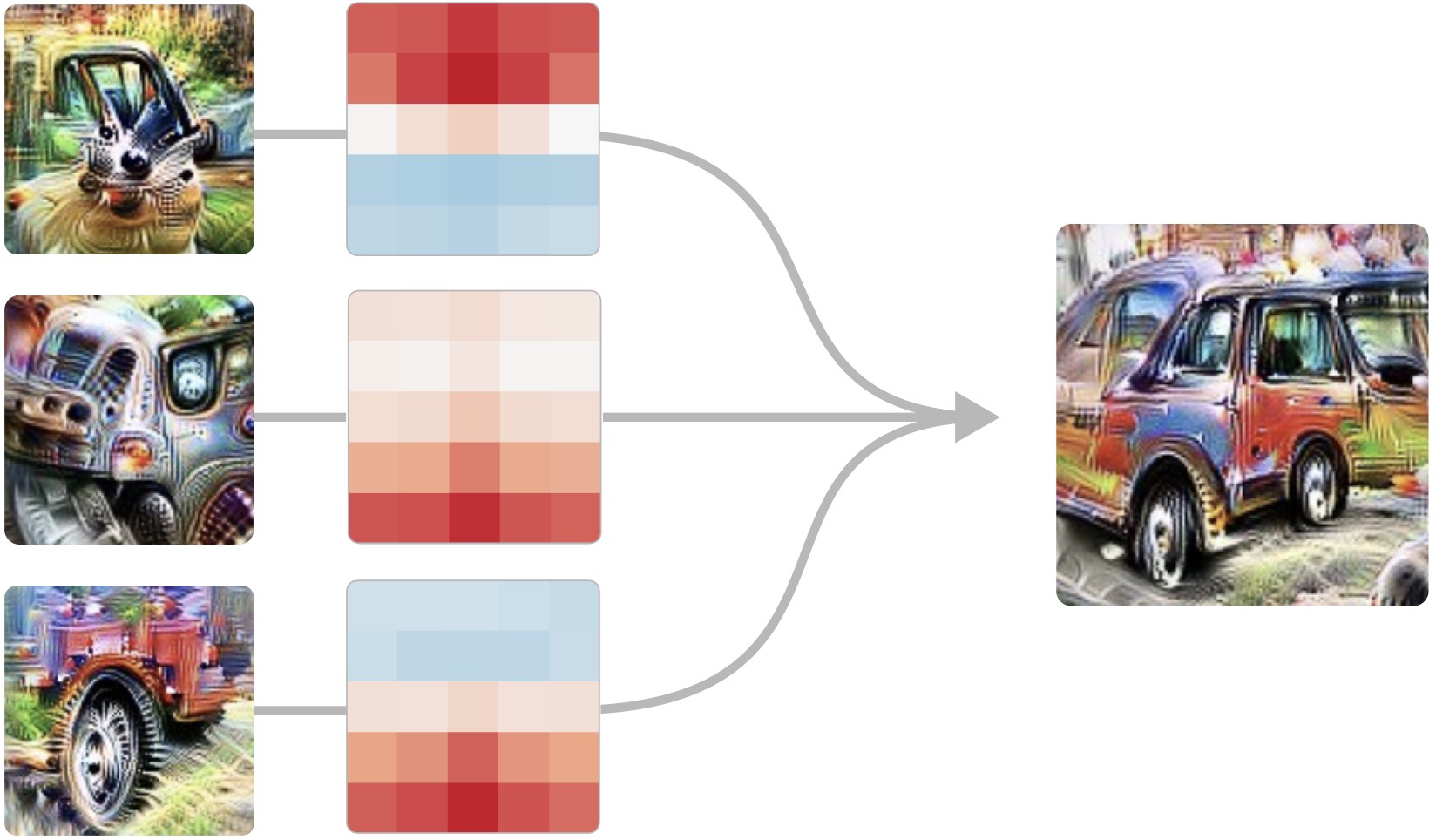

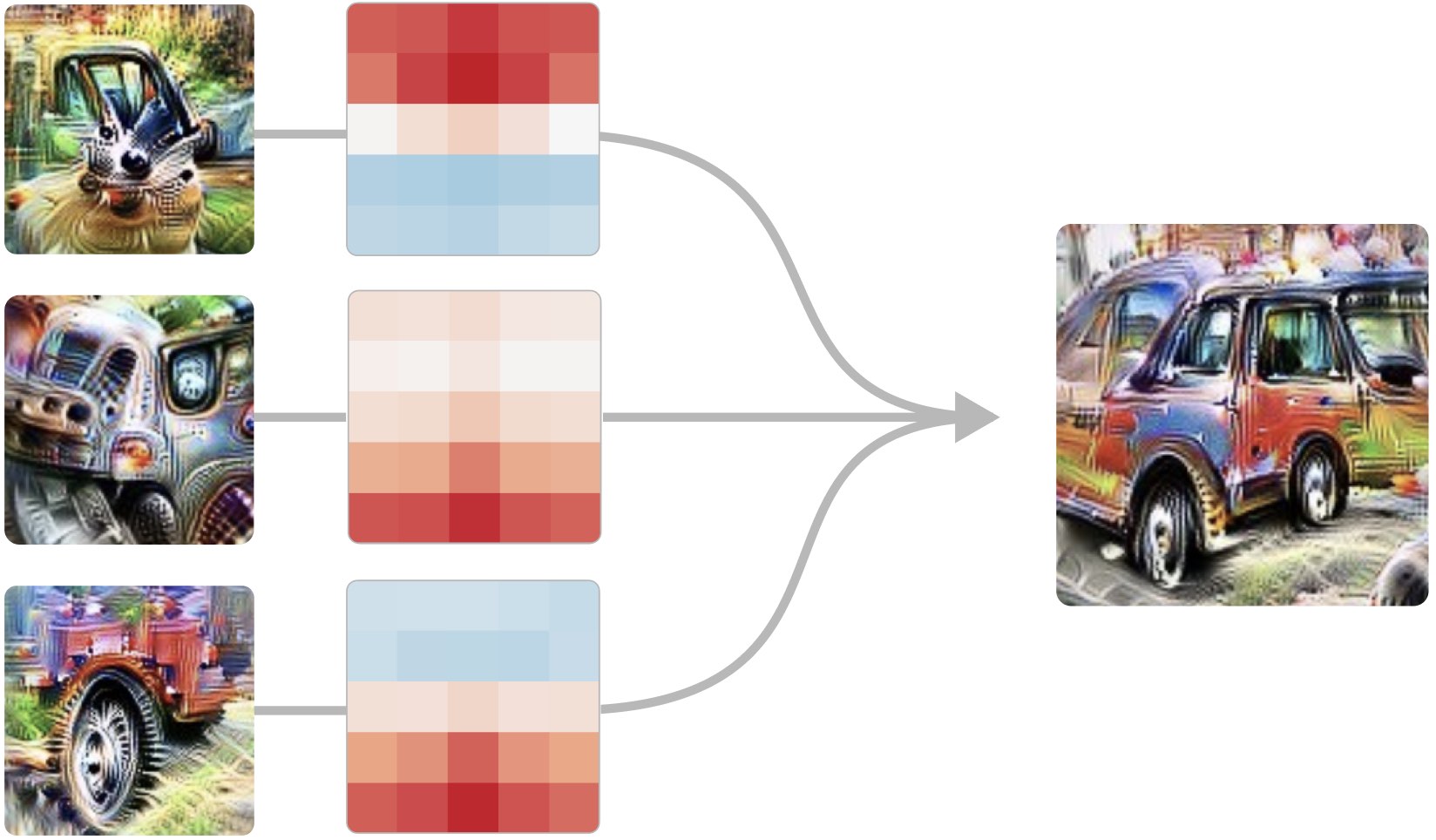

We present techniques for visualizing, contextualizing, and understanding neural network weights.

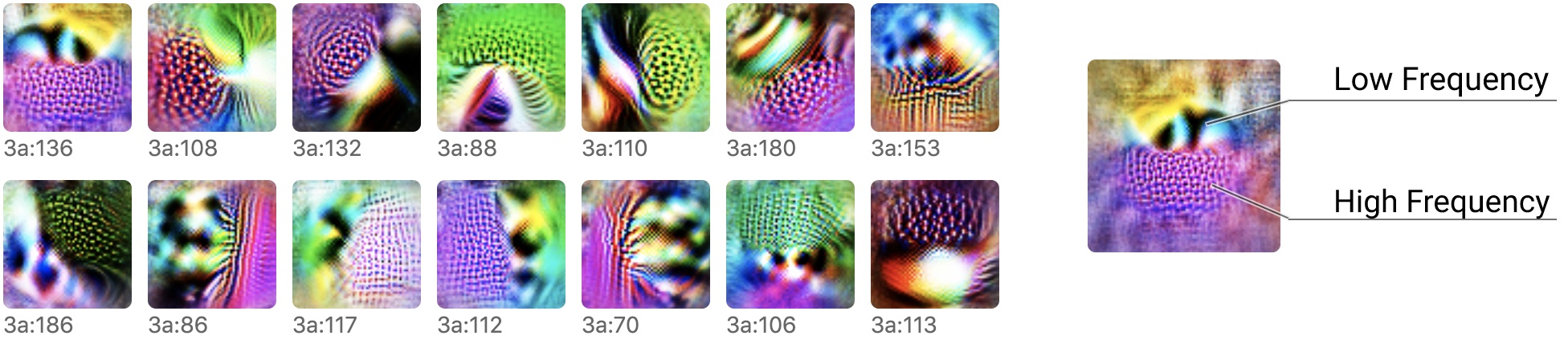

Reverse engineering the curve detection algorithm from InceptionV1 and reimplementing it from scratch.

A family of early-vision neurons reacting to directional transitions from high to low spatial frequency.

Training an end-to-end differentiable, self-organising cellular automaton for classifying MNIST digits.

Part one of a three-part deep dive into the curve neuron family.

An overview of all the neurons in the first five layers of InceptionV1, organized into a taxonomy of 'neuron groups.'

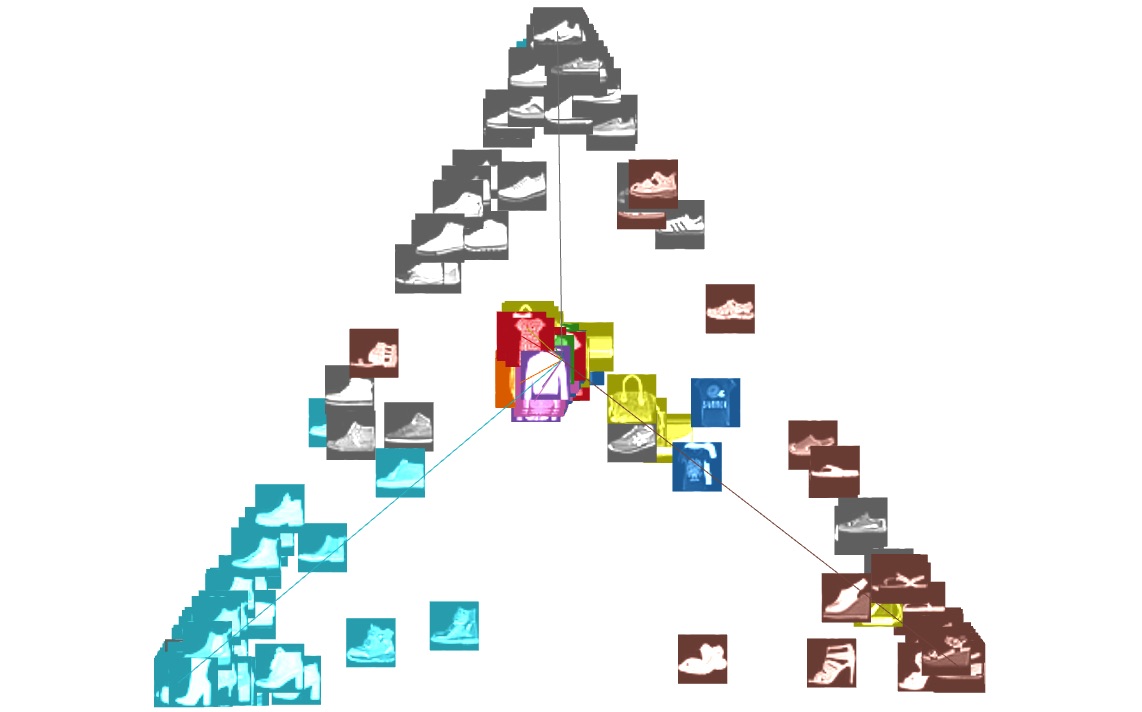

By focusing on linear dimensionality reduction, we show how to visualize many dynamic phenomena in neural networks.

What can we learn if we invest heavily in reverse engineering a single neural network?

By studying the connections between neurons, we can find meaningful algorithms in the weights of neural networks.

Six comments from the community and responses from the original authors

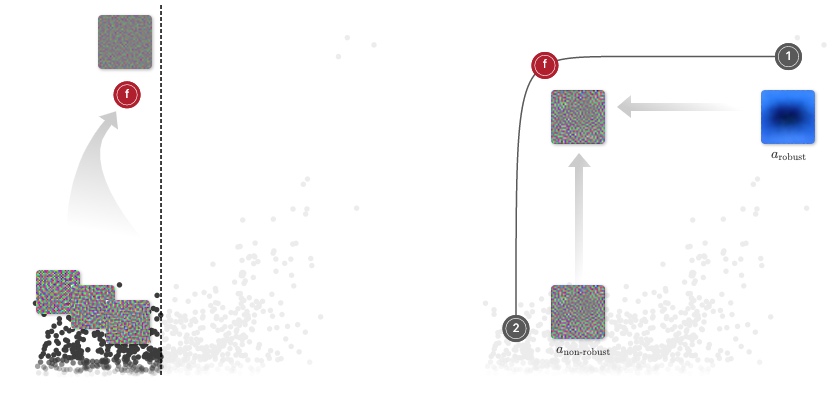

The main hypothesis in Ilyas et al. (2019) happens to be a special case of a more general principle that is commonly accepted in the literature on robustness to distributional shift.

An example project using webpack, svelte-loader, and ejs to inline SVGs.