Gemini API File Search: The Easy Way to Build RAG

Building a RAG system just got much easier. Google’s File Search tool for the Gemini API now handles the heavy lifting of connecting LLMs to your data. Chunking,…

Latest generative AI news — text, image, audio, and video generation. Coverage of diffusion models, multimodal AI, creative tools, and enterprise generative AI applications.

Generative AI refers to AI systems that produce new content — text, images, audio, video, code, 3D models — rather than merely classifying or analyzing existing content. The field exploded into mainstream awareness in 2022–2023 with the release of Stable Diffusion, DALL-E 3, Midjourney, and ChatGPT, each demonstrating that AI could produce creative and professional-quality output at scale.

The generative AI stack spans multiple modalities and model families. Text generation is dominated by large language models. Image generation is led by diffusion models (Stable Diffusion, DALL-E 3, Midjourney, Flux) and increasingly by autoregressive models. Audio generation tools (ElevenLabs, Suno, Udio) have made realistic voice cloning and music generation accessible. Video generation (Sora, Runway, Kling) is rapidly improving. The convergence of these modalities into unified multimodal models represents the next frontier.

Enterprise adoption of generative AI is the defining commercial story of 2024–2025. Every major cloud provider, software company, and enterprise vendor has launched generative AI products. DeepTrendLab tracks the full generative AI ecosystem — model releases, creative tools, enterprise platforms, copyright and legal developments, and the economic impact on creative industries.

It is, once again, Gemini season. Google is announcing a host of new Gemini features during its pre-I/O Android showcase, many of which aim to help use your…

Rivian's AI-powered voice assistant is rolling out today to the company's vehicle fleet. The assistant will be available through a software update to all compatible Rivian Gen 1…

Thinking Machines, the AI company founded by former OpenAI CTO Mira Murati, announced Monday that it's working on something called "interaction models." The idea behind interaction models, according…

In this post, we build a multimodal retrieval system for aerospace manufacturing documents using Amazon Nova Multimodal Embeddings on Amazon Bedrock and Amazon S3 Vectors. We evaluate the…

Image captioning is one of the most fundamental tasks in computer vision. Owing to its open-ended nature, it has received significant attention in the era of multimodal large…

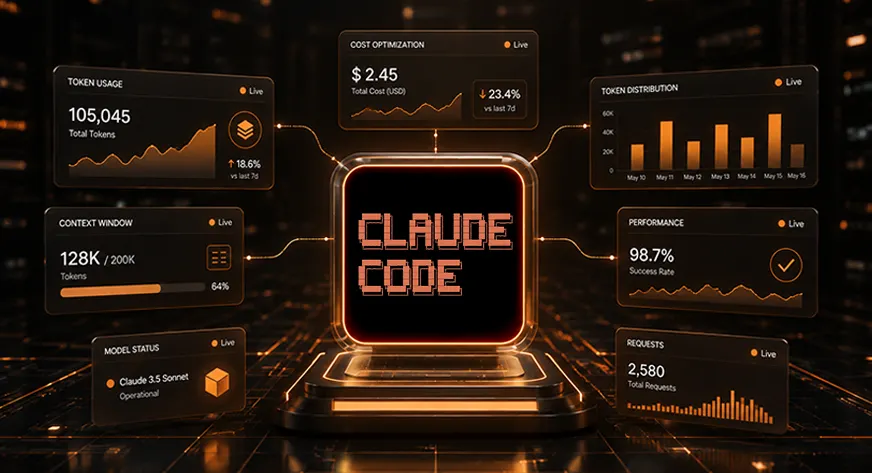

Using Claude Code in large projects can lead to skyrocketing token costs. A 2025 Stanford study reveals developers waste thousands of tokens daily, draining budgets as unchecked context…

In this post, you will learn how to implement reinforcement learning with verifiable rewards (RLVR) to introduce verification and transparency into reward signals to improve training performance. This…

True spatial intelligence for multimodal agents transcends low-level geometric perception, evolving from knowing where things are to understanding what they are for. While existing benchmarks, such as VSI-Bench,…

We’re announcing OS Level Actions for AgentCore Browser. This new capability unblocks these scenarios by exposing direct OS control through the InvokeBrowser API, so agents can interact with…

Equity management firm Carta is an example of how enterprises can use AI and agents to transform and shape their businesses.

AI agent systems today juggle separate models for vision, speech and language — losing time and context as they pass data from one model to the other. Unveiled…

The model expands the AI chip giant’s non-hardware offerings.

Gemma 4: Our most intelligent open models to date, purpose-built for advanced reasoning and agentic workflows.

Recraft V4 generates art-directed images — and actual editable SVGs — with strong composition, accurate text rendering, and what the Recraft team calls "design taste." Four models are…

Our latest Veo update generates lively, dynamic clips that feel natural and engaging — and supports vertical video generation.

Systematically evaluating the factuality of large language models with the FACTS Benchmark Suite.

A diffusion model is a type of generative AI that learns to create images by reversing a process of adding noise. During training, it learns to denoise progressively noisier versions of real images. At inference, it starts from random noise and iteratively denoises it, guided by a text or image prompt. Stable Diffusion, DALL-E 3, and Midjourney are built on diffusion architectures.
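The noising-and-reversing idea can be sketched in a few lines of plain Python. This is a minimal illustration that assumes a DDPM-style formulation with a linear noise schedule. The function names and the schedule values (`beta_start`, `beta_end`, 1000 steps) are illustrative assumptions, not the settings of any product named above. A real model trains a neural network to predict the noise; here the true noise stands in for that prediction, only to show that removing it recovers the original signal.

```python
import math
import random

def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for an assumed linear schedule."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Forward process: blend the clean signal with Gaussian noise."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
          for x, e in zip(x0, eps)]
    return xt, eps

def estimate_clean(xt, predicted_eps, alpha_bar):
    """Invert the forward blend, given a prediction of the noise."""
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(xt, predicted_eps)]

rng = random.Random(0)
alpha_bars = make_alpha_bars(1000)

x0 = [0.5, -1.0, 0.25, 0.0]  # stand-in for a few pixel values
xt, eps = add_noise(x0, alpha_bars[500], rng)

# A trained network would predict eps from xt; the true eps is used here
# as a hypothetical perfect prediction.
recovered = estimate_clean(xt, eps, alpha_bars[500])
```

As the step index grows, `alpha_bars` shrinks toward zero, so the noised sample carries less of the original signal and more noise. That is why generation can start from pure random noise and work backwards.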

Traditional AI models are discriminative — they classify, detect, or predict based on input (spam filter, image classifier, fraud detector). Generative AI models produce new content — text, images, audio, video — that resembles training data. The distinction is increasingly blurred as multimodal models do both.

Generative AI has triggered significant legal uncertainty around copyright. Key questions include whether training on copyrighted data constitutes infringement, whether AI-generated outputs can be copyrighted, and whether AI companies are liable for infringing outputs. Multiple lawsuits are active in the US, UK, and EU. The US Copyright Office has taken the position that purely AI-generated works cannot be copyrighted; human-AI collaborative works may qualify where there is sufficient human authorship.