Open source AI refers to AI models whose weights, architecture, and (increasingly) training data are publicly released, allowing anyone to download, run, fine-tune, and modify them. The open source AI ecosystem has become a significant counterweight to the closed frontier labs, with Meta's Llama series, Mistral's models, and the Hugging Face community producing capable alternatives that are closing the gap with proprietary models.

Meta's decision to open-source the Llama model family was a watershed moment. Llama 2 (2023) and Llama 3 (2024) enabled a global ecosystem of fine-tuned variants, deployment tooling, and research. Mistral AI has built a business around open models, releasing Mixtral (a mixture-of-experts model) and Mistral 7B. Microsoft's Phi series demonstrated that small, well-trained models can punch above their weight class. Hugging Face serves as the central hub for open model discovery, hosting over 500,000 models.

The open vs. closed AI debate is one of the defining tensions in the field. Open-source advocates argue that transparency, auditability, and local deployment are essential for safety, privacy, and democratization. Critics argue that releasing powerful models without safeguards accelerates proliferation of harmful capabilities. DeepTrendLab tracks open model releases, fine-tuning techniques, deployment tooling, and the ongoing policy debate around open-source AI regulation.

Latest Open Source AI News

18 recent articles

What’s the Best Way to Brainwash an LLM?

I spent a weekend trying to convince a language model it was C-3PO. Here's what actually worked.

Adaption aims big with AutoScientist, an AI tool that helps models train themselves

Adaption's new AutoScientist tool is designed to let models adapt to specific capabilities quickly through an automated approach to conventional fine-tuning.

Navigating EU AI Act requirements for LLM fine-tuning on Amazon SageMaker AI

In this post, we show you how to set up FLOPs tracking during LLM fine-tuning using the open source Fine-Tuning FLOPs Meter toolkit on Amazon SageMaker AI. You…

MySQL 9.7: First Major LTS Since 8.4 Brings Enterprise Features to Community Edition

Oracle has announced the general availability of MySQL 9.7.0, marking the start of a new 9.7 LTS release series and the first major one since MySQL 8.4. The…

The Must-Know Topics for an LLM Engineer

From tokenisation to evaluation: how modern language models actually work in practice. First published on Towards Data Science.

Top 10 Open-Source Libraries to Fine-Tune LLMs Locally

Fine-tuning LLMs has become much easier because of open-source tools. You no longer need to build the full training stack from scratch. Whether you want low-VRAM training, LoRA,…

Mistral Adds Remote Agents and Work Mode to Le Chat

Mistral has released Mistral Medium 3.5, a 128-billion parameter model designed to handle instruction following, reasoning, and coding within a single system, and introduced new cloud-based agent capabilities…

Agent-guided workflows to accelerate model customization in Amazon SageMaker AI

Amazon SageMaker AI now offers an agentic experience that changes this. Developers describe their use case using natural language, and the AI coding agent streamlines the entire journey,…

Month in 4 Papers (April 2026)

Last updated on May 4, 2026 by Editorial Team. Author: Ala Falaki, PhD. Originally published on Towards AI. This series of posts…

Reinforcement fine-tuning with LLM-as-a-judge

In this post, we take a deeper look at how RLAIF or RL with LLM-as-a-judge works with Amazon Nova models effectively.

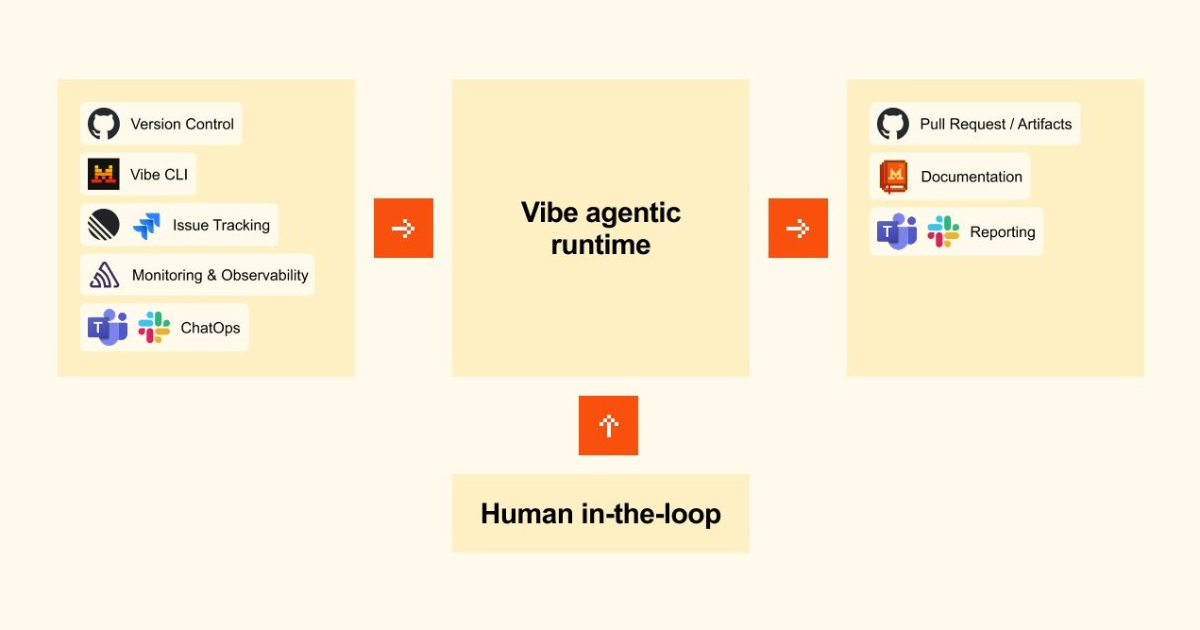

Mistral’s Model Lets You Vibe Long-Running Code in the Cloud

Mistral's approach prioritizes natural language interactions, making coding more accessible while allowing for integration with existing code repositories.

Granite 4.1 LLMs: How They’re Built

Introducing OpenAI Privacy Filter

OpenAI Privacy Filter is an open-weight model for detecting and redacting personally identifiable information (PII) in text with state-of-the-art accuracy

Seeing What’s Possible with OpenCode + Ollama + Qwen3-Coder

Run a powerful, private AI coder locally with OpenCode, Ollama & Qwen3-Coder. Free, offline, and unlimited.

Safetensors is Joining the PyTorch Foundation

Welcome Gemma 4: Frontier multimodal intelligence on device

Falcon Perception

Frequently Asked Questions about Open Source AI

What is Llama and why does it matter?

Llama is Meta's family of openly released large language models, with Llama 2 arriving in 2023 and Llama 3 in 2024. By releasing model weights publicly, Meta enabled anyone to run, fine-tune, and deploy capable LLMs without relying on closed API providers. The Llama 3 generation, with variants at 70B and (as of Llama 3.1) 405B parameters, competes with GPT-4-class models and has spawned thousands of fine-tuned variants for specific use cases.

What is the difference between open-source and open-weight AI models?

Open-weight models release the trained model weights publicly, allowing anyone to run and fine-tune them, but may not release training data or full training code. True open-source AI releases everything: weights, training data, training code, and evaluation infrastructure. Most "open" AI models are open-weight, not fully open-source. The OSI (Open Source Initiative) has published criteria, the Open Source AI Definition, for what qualifies as genuinely open-source AI.

What is fine-tuning and how is it used with open models?

Fine-tuning adapts a pre-trained open-weight model to a specific task or domain using a smaller labeled dataset. Parameter-efficient fine-tuning techniques like LoRA (Low-Rank Adaptation) allow fine-tuning even large models on consumer hardware. This has enabled a cottage industry of specialized models: instruction-tuned assistants, coding models, medical models, and role-playing models, all built on open foundations like Llama.
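
To see why LoRA fits on consumer hardware, it can help to count trainable weights. The sketch below is illustrative only: the function names are hypothetical, and the 4096 x 4096 layer size and rank 8 are assumed values, not figures for any particular model. It follows the usual LoRA formulation, in which the frozen weight matrix W is left untouched and a low-rank product B·A, scaled by alpha / rank, is added to its output.

```python
def full_param_count(d_in, d_out):
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_in * d_out


def lora_param_count(d_in, d_out, rank):
    """Trainable weights in a LoRA adapter: A is rank x d_in, B is d_out x rank."""
    return rank * (d_in + d_out)


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """Compute W x + (alpha / rank) * B (A x).

    W stays frozen; only A and B would be trained.
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


if __name__ == "__main__":
    # Assumed, illustrative sizes: one square projection layer and a small rank.
    d_in = d_out = 4096
    rank = 8
    full = full_param_count(d_in, d_out)
    lora = lora_param_count(d_in, d_out, rank)
    print(f"full fine-tuning: {full:,} trainable weights")
    print(f"LoRA (rank {rank}): {lora:,} trainable weights")
    print(f"LoRA / full: {lora / full:.4%}")

    # Tiny forward pass. B starts at zero, so the adapted layer initially
    # behaves exactly like the frozen base layer.
    W = [[1.0, 2.0], [3.0, 4.0]]
    A = [[0.5, -0.5]]
    B = [[0.0], [0.0]]
    print(lora_forward(W, A, B, [1.0, 1.0], alpha=16, rank=1))
```

Under these assumptions the adapter trains well under 1% of the weights that full fine-tuning of the same layer would touch, which is where the memory savings come from. In practice a library such as Hugging Face PEFT handles the adapter wiring; the code above is only meant to show the arithmetic.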