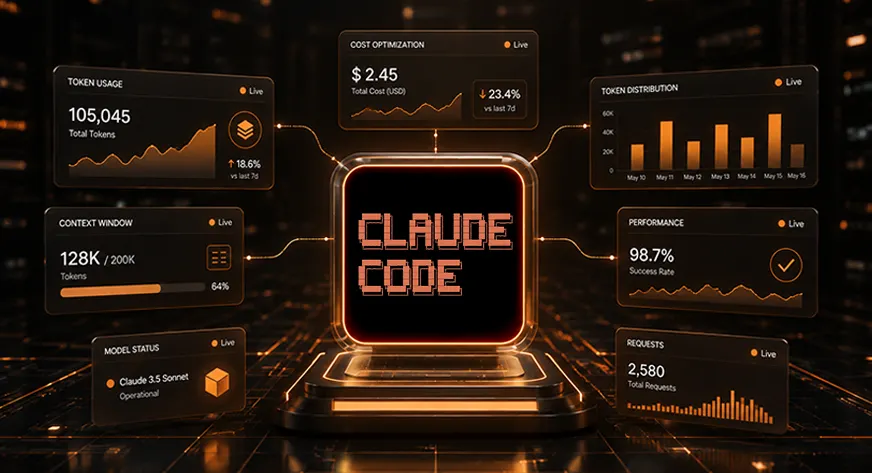

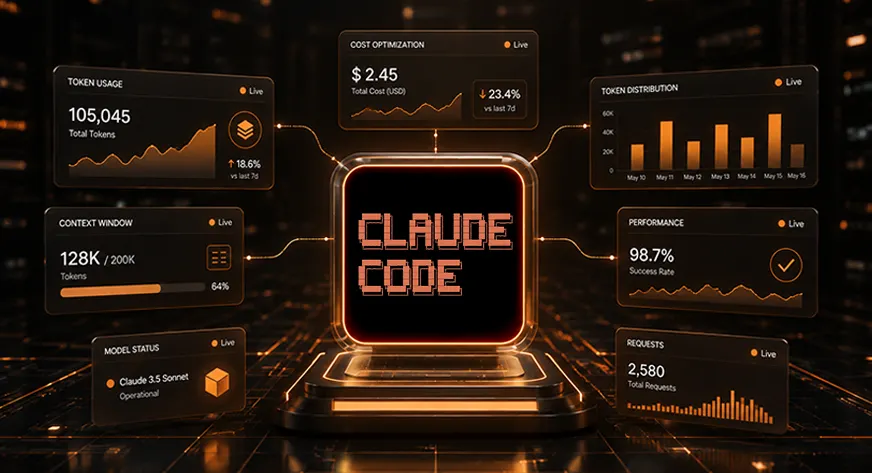

Analytics Vidhya published a practical optimization guide framing Claude Code token management as a core challenge for modern development workflows. The guide cites a 2025 Stanford study claiming developers waste thousands of tokens daily through unchecked context expansion, then proposes 23 concrete tactics ranging from session clearing to context window tuning. The message is straightforward: as AI coding assistants become production tools, treating token efficiency as an afterthought is financially reckless.

The timing reflects a market inflection point. Six months ago, most Claude Code discussions centered on capability—what the tool could do. Today, the conversation has shifted to sustainability: how to keep using it when a single complex refactoring can cost $50 and a full day of debugging can drain budgets across a team. This shift signals that Claude Code has crossed from a "nice novelty" into something developers rely on for critical work, which means cost structure now matters. The Stanford study, whether rigorous or merely illustrative, gives permission to have this conversation openly—token waste is not a personal failing but a systematic problem worth solving.

What makes this significant isn't the individual tips (clearing chat history, compacting context, tuning thresholds) but the implicit message: if you're paying per token, your interaction pattern has to change. Context management stops being a backend infrastructure concern and becomes a moment-to-moment practice. Developers must now work as if under a bandwidth constraint: culling logs, dropping irrelevant discussion, and staying disciplined about what remains in the working context. This is a different cognitive load than traditional coding. It also reveals something about how the product works in practice: not as infinite context (the marketing promise) but as context-with-tradeoffs. Competitors offering unlimited context or flat-rate pricing will cast this as a Claude weakness; the guide frames it as a discipline problem for the user.
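To make "culling logs and dropping irrelevant discussion" concrete, here is a minimal sketch of budget-based context pruning. It is not how Claude Code manages context internally and is not taken from the guide. The `Message` type, the `pinned` flag, and the four-characters-per-token estimate are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str             # e.g. "user", "assistant", "tool"
    text: str
    pinned: bool = False  # hypothetical flag: always keep (e.g. the task statement)


def estimate_tokens(text: str) -> int:
    """Rough heuristic (an assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def prune_context(messages: list[Message], budget: int) -> list[Message]:
    """Keep pinned messages, then add unpinned ones newest-first while they fit."""
    used = sum(estimate_tokens(m.text) for m in messages if m.pinned)
    keep = [m.pinned for m in messages]
    # Walk backwards so newer messages are considered before older ones.
    for i in range(len(messages) - 1, -1, -1):
        if keep[i]:
            continue
        cost = estimate_tokens(messages[i].text)
        if used + cost <= budget:
            keep[i] = True
            used += cost
    # Return survivors in their original order.
    return [m for m, k in zip(messages, keep) if k]


history = [
    Message("user", "Refactor the billing module.", pinned=True),
    Message("tool", "x" * 4000),  # a large log dump
    Message("assistant", "Done with step one."),
    Message("user", "Now update the tests."),
]
trimmed = prune_context(history, budget=50)
```

With a budget of 50 estimated tokens, the large tool log is the only message dropped. A real workflow would more likely summarize old output than discard it, but the budget-first mindset is the same.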

The immediate impact falls on individual developers and lean teams. For a solo engineer or a startup using Claude Code for rapid prototyping, a 20-30% reduction in tokens translates directly to runway extension. For enterprises, this validates a different concern: that AI coding assistance at scale requires operational governance—not just access control, but billing controls, usage quotas, and team training on efficient patterns. It also creates a new service category: consulting firms that help companies optimize their Claude workflows, similar to how Kubernetes spawned an entire ecosystem of optimization practices.
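The runway arithmetic is easy to sketch. The daily token volume and the per-million-token price below are placeholders chosen for round numbers, not Anthropic's actual pricing or figures from the guide. Only the 20-30% reduction range comes from the text above.

```python
def monthly_cost(tokens_per_day: int, price_per_million: float, workdays: int = 22) -> float:
    """Monthly spend in dollars for a given daily token volume."""
    return tokens_per_day * workdays * price_per_million / 1_000_000


def monthly_savings(tokens_per_day: int, price_per_million: float,
                    reduction: float, workdays: int = 22) -> float:
    """Dollars saved per month if token usage drops by `reduction` (0.2 means 20%)."""
    return monthly_cost(tokens_per_day, price_per_million, workdays) * reduction


# Placeholder inputs (assumptions): 2M tokens per day at $10 per million tokens.
baseline = monthly_cost(2_000_000, 10.0)
low = monthly_savings(2_000_000, 10.0, 0.20)
high = monthly_savings(2_000_000, 10.0, 0.30)
print(f"baseline ${baseline:.2f}/month, savings ${low:.2f} to ${high:.2f}")
```

Under these placeholder inputs, one developer spends $440 a month and the 20-30% range saves roughly $88 to $132. Multiplying by headcount gives the team-level figure that matters for runway.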

Versus competitors, guidance like this casts the Claude ecosystem as thinking systematically about enterprise adoption. GitHub Copilot avoids this conversation entirely by staying platform-agnostic and offering flat pricing. OpenAI's API ties cost to usage but doesn't come with structured guidance for managing it. By pairing Stanford-attributed evidence of the problem with tactical solutions, the guide tells developers that their budget constraints are understood and that there are ways to work within them. It's a savvy form of customer education that also locks in usage patterns: developers who adopt these practices become more efficient but also more dependent on Claude specifically.

The open question is whether this guidance becomes table stakes or a competitive advantage. If every major LLM provider publishes similar optimization guides within six months, this becomes background noise. If instead Claude's efficiency advantage remains real (because the tips genuinely reflect deeper architectural thinking), then early adopters of these practices gain a lasting cost advantage. Also worth watching: whether developers actually adopt these disciplines or treat them as suggestions. Context discipline only works if enforced; optional token-saving is no savings at all. The Stanford study makes clear the problem exists; the next phase is whether the market can sustain the solution.

This article was originally published on Analytics Vidhya. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to Analytics Vidhya. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.