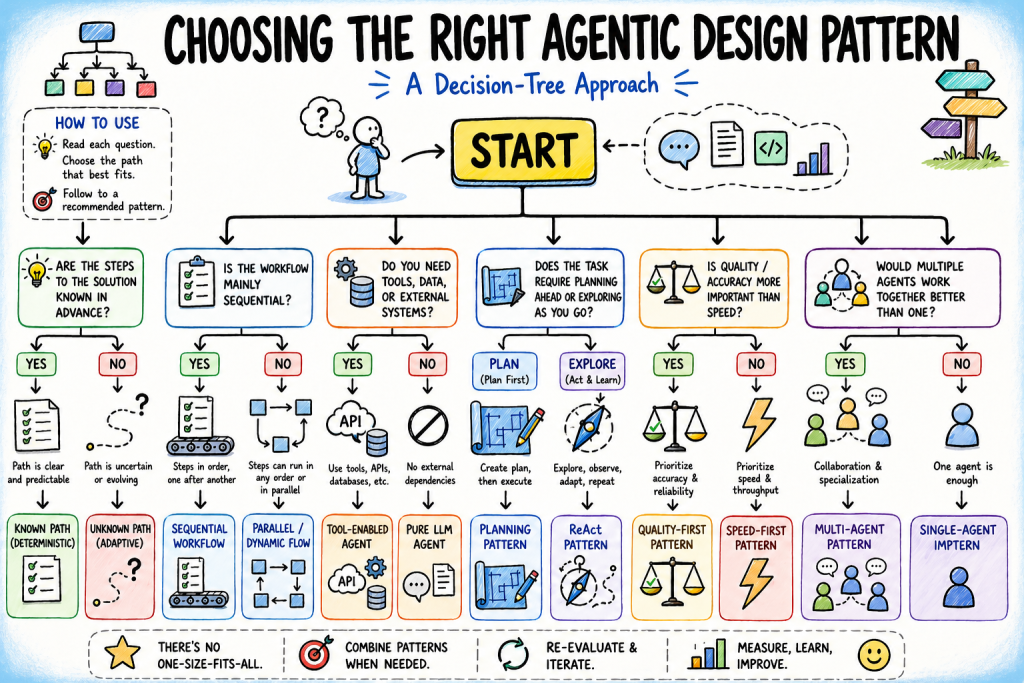

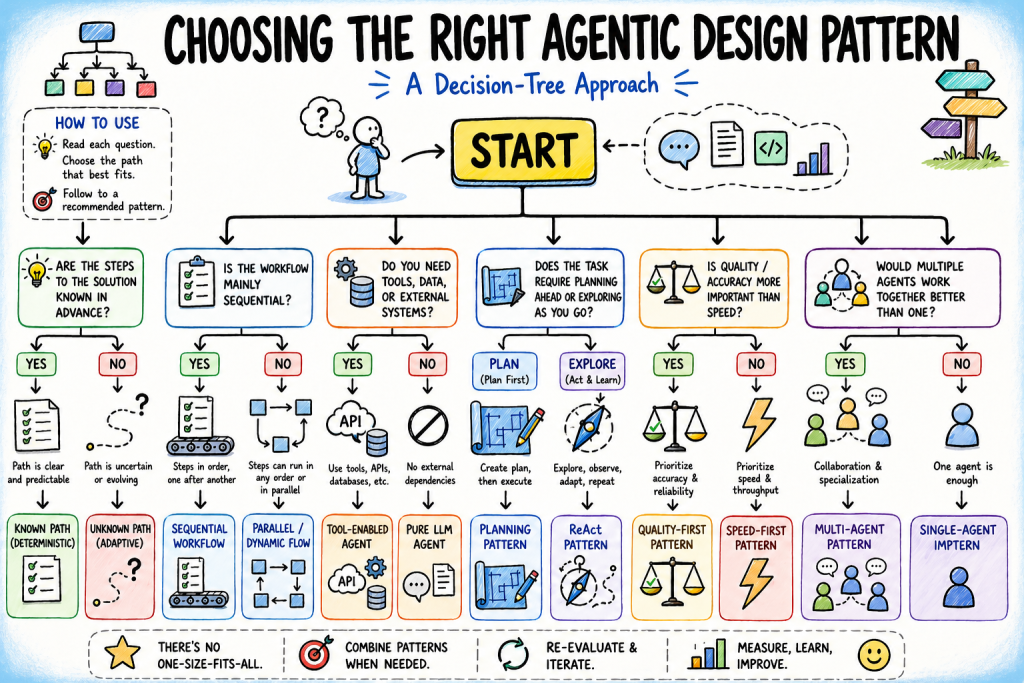

Machine Learning Mastery has published a framework for one of AI development's most critical but under-discussed challenges: choosing the right agentic architecture before building. The piece treats agentic design pattern selection (whether to use ReAct, planning-based, reflection-based, or multi-agent approaches) as a decision-tree problem driven by task properties, not by aesthetics or fashion. Instead of presenting patterns as abstract design philosophies, the analysis argues that each pattern encodes specific assumptions about task structure, reward, and adaptability, and that mismatches between assumed and actual task properties are the root cause of most agentic system failures.

The context matters. Over the past eighteen months, agentic AI frameworks have proliferated rapidly, from research papers to production deployments across enterprises. Throughout, developers have faced a consistent friction point: which pattern actually fits their problem? The industry has documented individual patterns well through academic work and vendor content, but has largely skipped the meta-layer, the decision logic for choosing between them. Teams have typically resolved this by cargo-culting architectures from successful competitors, adopting whatever pattern they saw in a conference talk, or deploying by trial and error. The Machine Learning Mastery article attempts to make that implicit decision-making explicit through a structured framework, treating pattern selection as a solvable design problem rather than an intuitive call.

The implications cut directly to engineering efficiency and cost. A mismatched pattern choice early in development cascades: a task that needed only a single well-prompted agent with tools gets wrapped in multi-agent orchestration overhead, burning API costs and engineering time. Conversely, a task genuinely requiring adaptive decomposition across specialized roles gets forced into a monolithic ReAct loop, which fails silently under real-world complexity. These failures don't surface until production, at which point architectural redesign is expensive and disruptive. A decision tree that maps task properties to patterns upfront short-circuits this cycle, moving the architecture choice from post-hoc damage control to informed upfront design. For enterprises with limited agentic expertise, this systematization reduces the risk premium on early architectural decisions.
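To make the idea of mapping task properties to patterns concrete, here is a minimal sketch of what such a decision function could look like. It is not the decision tree from the Machine Learning Mastery article: the `TaskProfile` fields, the branch order, and the `choose_pattern` name are all hypothetical, chosen only to mirror the examples in the paragraph above (a simple task defaults to a single agent with tools, and only a task needing specialized roles gets multi-agent orchestration).

```python
from dataclasses import dataclass
from enum import Enum


class Pattern(Enum):
    SINGLE_AGENT_WITH_TOOLS = "single agent with tools"
    REACT = "ReAct"
    PLANNING = "planning-based"
    REFLECTION = "reflection-based"
    MULTI_AGENT = "multi-agent"


@dataclass(frozen=True)
class TaskProfile:
    # Every field is a hypothetical task property, assumed for illustration.
    needs_specialized_roles: bool   # subtasks need distinct skills or contexts
    steps_known_upfront: bool       # work can be decomposed before execution
    needs_runtime_adaptation: bool  # next step depends on what was observed
    output_checkable: bool          # a critique or test exists to iterate against


def choose_pattern(task: TaskProfile) -> Pattern:
    """Walk an illustrative decision tree; the branch order is an assumption."""
    if task.needs_specialized_roles:
        return Pattern.MULTI_AGENT
    if task.steps_known_upfront and not task.needs_runtime_adaptation:
        return Pattern.PLANNING
    if task.needs_runtime_adaptation:
        return Pattern.REACT
    if task.output_checkable:
        return Pattern.REFLECTION
    # Default to the cheapest option so orchestration overhead must be justified.
    return Pattern.SINGLE_AGENT_WITH_TOOLS


simple_task = TaskProfile(
    needs_specialized_roles=False,
    steps_known_upfront=False,
    needs_runtime_adaptation=False,
    output_checkable=False,
)
print(choose_pattern(simple_task).value)  # prints "single agent with tools"
```

Defaulting to the simplest pattern is a deliberate choice in this sketch: heavier architectures have to be earned by an explicit task property, which is the upfront discipline the paragraph describes.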

The framework directly affects developers building agentic systems, particularly those outside the large research labs and AI-native companies where this tribal knowledge lives in institutional memory. It matters equally to enterprise teams deploying AI agents for mission-critical workflows, where a wrong pattern choice can trigger cascading failures or uncontrolled cost overruns. Product teams at AI infrastructure companies (those building orchestration platforms, agent frameworks, and LLM APIs) will find this especially relevant, as customer success depends partly on customers choosing architectures the platform can actually support. Consultants advising on agentic deployments gain a repeatable methodology in place of experience-based intuition.

Systematizing agentic design decisions carries both democratizing and concentrating effects. On one hand, explicit decision logic reduces the moat of deep agentic expertise, allowing teams without PhD-level ML experience to make sound architectural choices. On the other, it risks ossifying pattern selection into a narrow set of "approved" approaches promoted by whichever vendors control the most visible frameworks and evaluation benchmarks. If a decision tree becomes industry standard, the vendors whose patterns align with that tree gain competitive advantage, while alternative approaches risk marginalization. There's also a subtle shift: as patterns become systematized, the incentive to innovate beyond them diminishes, potentially locking in architectural assumptions that may need to evolve as tasks, costs, and model capabilities change.

What matters next is whether this kind of framework actually scales into reliable practice. Does the decision tree hold across genuinely novel task types, or does it assume a narrow taxonomy of problems? How do these patterns adapt as model capabilities shift: does a task that required multi-agent reasoning become solvable by a single agent as models improve? And critically, what feedback mechanisms exist to trigger architectural evolution when initial pattern choices no longer fit? A decision tree is useful only if teams revisit it as circumstances change; applied as dogma, it stops being useful. The emerging challenge in agentic AI is no longer pattern design but pattern discipline: choosing systematically, measuring rigorously against assumptions, and adapting when reality diverges from the theory.
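One way to picture "measuring rigorously against assumptions" is to record the assumptions behind a pattern choice as explicit limits and compare them with what production actually shows. The sketch below is only an illustration of that idea, not something the source article prescribes: the `Assumption` type, the metric names, and the numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumption:
    name: str     # hypothetical metric name recorded when the pattern was chosen
    limit: float  # largest observed value still consistent with that choice


def violated_assumptions(assumptions, observed):
    """Return the names of assumptions whose observed metric exceeds its limit.

    Metrics missing from `observed` are skipped, not treated as violations.
    """
    return [
        a.name
        for a in assumptions
        if a.name in observed and observed[a.name] > a.limit
    ]


# Made-up values, purely for illustration.
recorded = [
    Assumption("avg_tool_calls_per_task", 8.0),
    Assumption("cost_per_task_usd", 0.50),
]
observed = {"avg_tool_calls_per_task": 14.2, "cost_per_task_usd": 0.31}

print(violated_assumptions(recorded, observed))  # prints ['avg_tool_calls_per_task']
```

A non-empty result would be the cue to revisit the pattern choice instead of leaving it in place by default.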

This article was originally published on Machine Learning Mastery. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to Machine Learning Mastery. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.