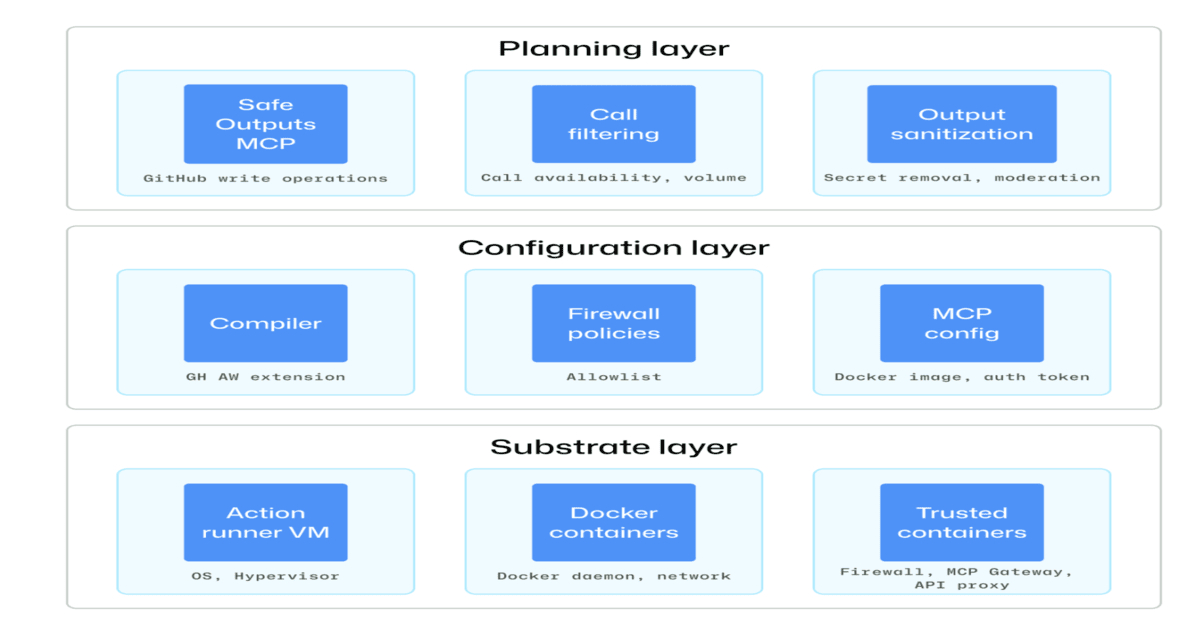

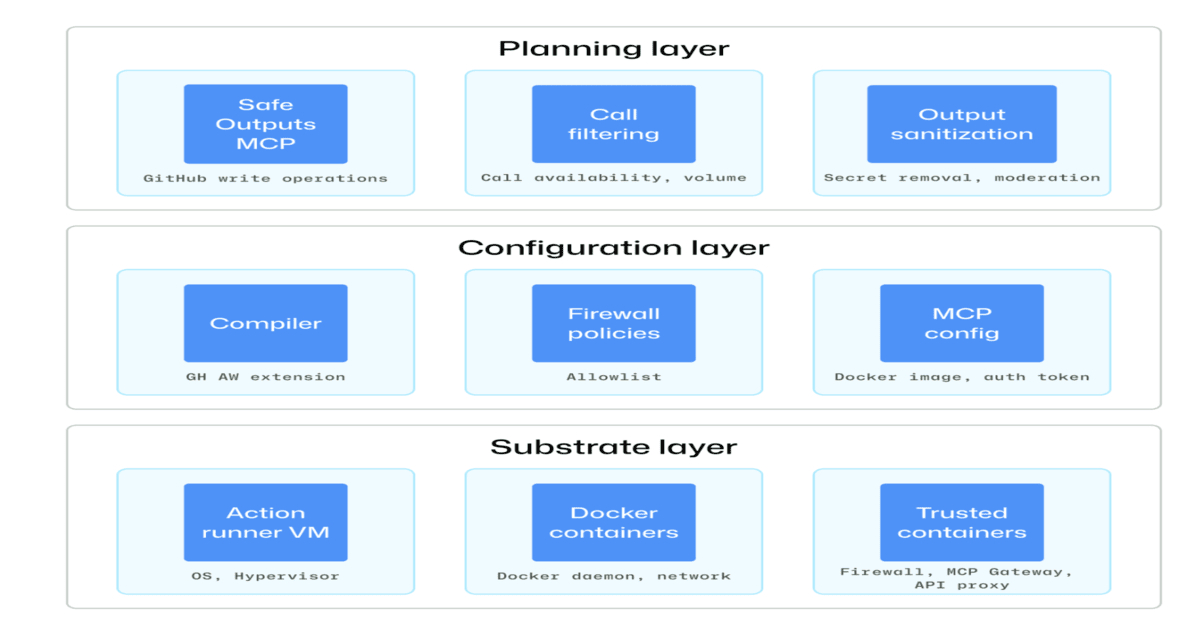

GitHub has published a detailed security architecture for running AI agents within CI/CD pipelines, establishing a layered defense model centered on isolation, constrained execution, and comprehensive auditability. The design confines agents to sandboxed, ephemeral containers with restricted permissions and no persistence between runs, defaults to read-only access, and requires all modifications to flow through human-reviewable outputs such as pull requests and issue comments. This represents GitHub's acknowledgment of a fundamental problem: autonomous agents reasoning over repositories present attack vectors that traditional CI/CD automation never did. Unlike deterministic scripts, agents can be tricked through prompt injection, manipulated into credential theft, or triggered to perform unintended mutations of live systems—threats that require security thinking beyond access control lists and environment variables.
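To make the read-only default and the "modifications flow through human-reviewable outputs" rule concrete, here is a minimal sketch of a policy gate. It is an illustration only, not GitHub's implementation: the names `Action`, `Kind`, and `Gate` are hypothetical. It assumes an agent emits discrete read or write actions. Reads pass through, writes are queued as proposals for human review, and every decision is appended to an audit log.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    READ = "read"
    WRITE = "write"


@dataclass(frozen=True)
class Action:
    """A single operation an agent asks to perform (hypothetical model)."""
    kind: Kind
    target: str        # e.g. a file path or branch name
    payload: str = ""  # e.g. a proposed patch, empty for reads


@dataclass
class Gate:
    """Hypothetical policy gate: read-only by default, writes become proposals."""
    audit_log: list = field(default_factory=list)
    proposals: list = field(default_factory=list)

    def submit(self, action: Action) -> str:
        if action.kind is Kind.READ:
            decision = "allowed"
        else:
            # Never apply a write directly; hold it for a human reviewer,
            # analogous to opening a pull request or posting a comment.
            self.proposals.append(action)
            decision = "queued_for_review"
        # Record every decision so the run can be audited afterwards.
        self.audit_log.append((action.kind.value, action.target, decision))
        return decision


if __name__ == "__main__":
    gate = Gate()
    print(gate.submit(Action(Kind.READ, "src/app.py")))
    print(gate.submit(Action(Kind.WRITE, "src/app.py", "proposed patch")))
    print(gate.audit_log)
```

The design choice worth noting is that the gate has no code path that applies a write. The only outcome of a mutation request is a reviewable proposal, so a manipulated agent can at worst produce a bad suggestion rather than a bad change.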

The timing of this announcement reflects where enterprise adoption has actually landed. Throughout 2025, organizations have moved past agent prototyping and begun integrating autonomous systems into genuinely consequential workflows—code review, testing, deployment decisions. GitHub Actions occupies a uniquely precarious position in this transition: it already manages secrets, controls repository permissions, and orchestrates changes to production infrastructure. Overlaying agents onto a system already operating at the intersection of code and infrastructure multiplies the blast radius of any compromise. Previous discussions about agent safety have largely treated the problem as alignment-focused—training agents to behave correctly—but the practical reality is that agents will be operating in environments full of untrusted inputs and adversarial prompts from both malicious users and unwitting developers. Security architecture becomes as critical as training.

What makes this announcement significant is that GitHub is reframing agentic security as a systems problem rather than a training problem, and in doing so, raising the baseline for what responsible AI deployment looks like. The industry has grown accustomed to deploying large language models with minimal guardrails, trusting emergence and training to prevent misuse. GitHub's architecture rejects that assumption, acknowledging that you cannot reliably prevent misbehavior through weights alone when the system controls repository state, credentials, and deployment pipelines. This shift should cascade across the industry—competitors will face pressure to articulate equivalent guarantees, and teams on platforms that don't will operate with unacknowledged security debt. It also signals that agents in CI/CD are not aspirational use cases for future research but immediate production problems requiring mature mitigations today.

The architecture benefits several constituencies with distinct motivations. Enterprise development teams gain room to experiment with agent-assisted capabilities such as code review, refactoring, and test optimization without incurring catastrophic risk if an agent misbehaves. Open-source maintainers can adopt agentic workflows in their own CI/CD without inadvertently becoming attack vectors against downstream users. But perhaps most consequentially, the architecture sets a new standard: organizations and platforms that don't implement equivalent safeguards become obvious liabilities, especially for regulated enterprises in finance, healthcare, and government. The bar for "production-ready agent integration" has been raised whether competitors are ready or not, and that advantage compounds as enterprises become more security-conscious.

GitHub's move converts security from a neutral feature into a structural moat. Competitors like GitLab, CircleCI, and open-source alternatives face a choice between implementing similar architectures, which is expensive and time-consuming, or accepting a higher-risk positioning that will become increasingly untenable as enterprises demand guarantees. AWS and Google Cloud have been notably quiet on agent security in their CI/CD offerings, suggesting either that agent integrations remain immature there or that vendors are reluctant to surface the problem. This leaves GitHub positioned as the secure-by-default platform for agent-driven development precisely when enterprises are desperate for clear guidance on safety. The competitive advantage is substantial because security cannot be easily retrofitted: it requires fundamental architectural choices that competing platforms cannot quickly replicate.

GitHub's architecture leaves the most critical questions open, and they will define the next phase of agentic security maturity. How do you prevent adversarial prompts embedded in code repositories from manipulating an agent's reasoning? How do you audit an agent's decision-making to detect when it has been socially engineered or when its reasoning was fundamentally unsound? As agents become more capable and demand more integrations with external services, APIs, and data sources, can these containment models survive, or do they become unacceptably restrictive? GitHub's architecture essentially enforces that agents remain constrained servants of human judgment rather than autonomous decision-makers. The question for the industry is whether that constraint remains acceptable as capability gaps close, or whether enterprises will eventually demand more autonomous agents, which would require fundamentally different security models.

This article was originally published on InfoQ AI. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to InfoQ AI. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.