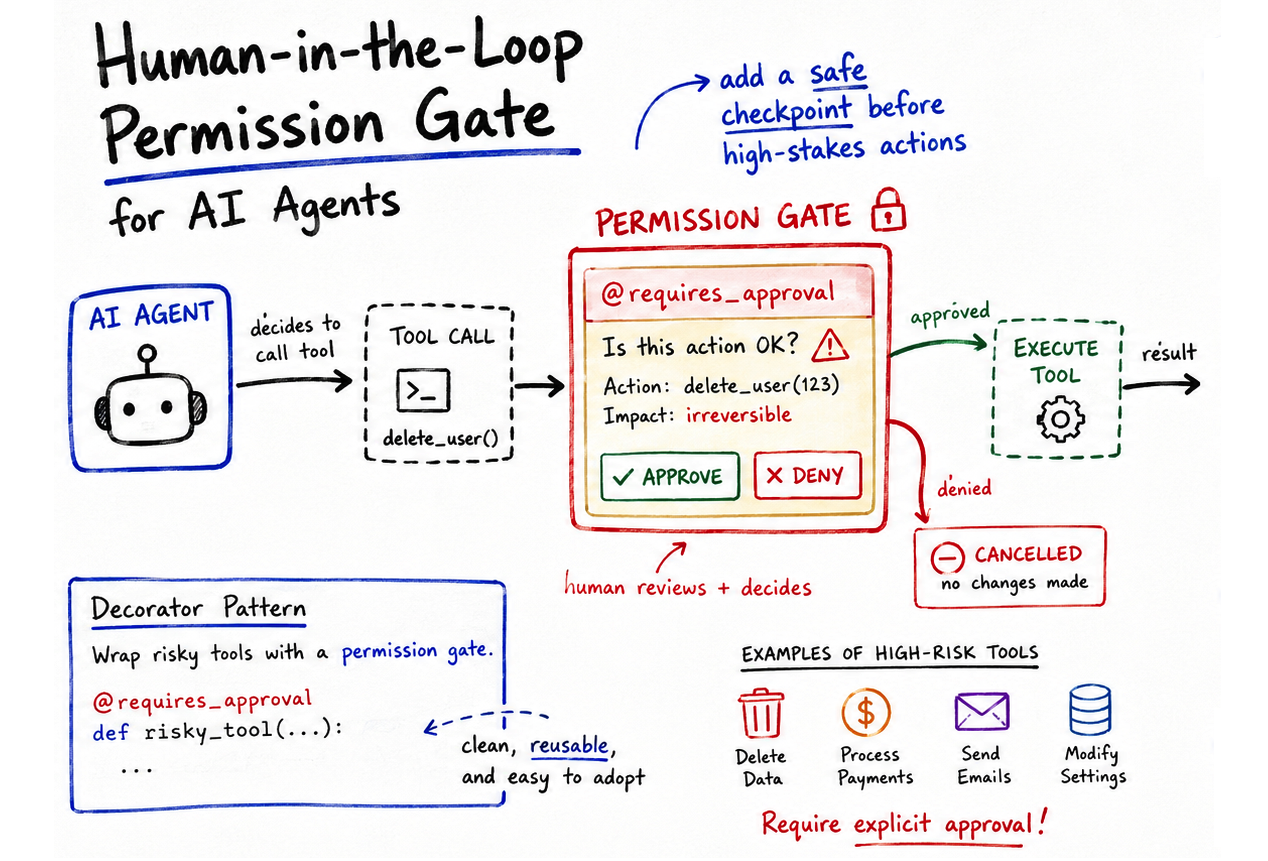

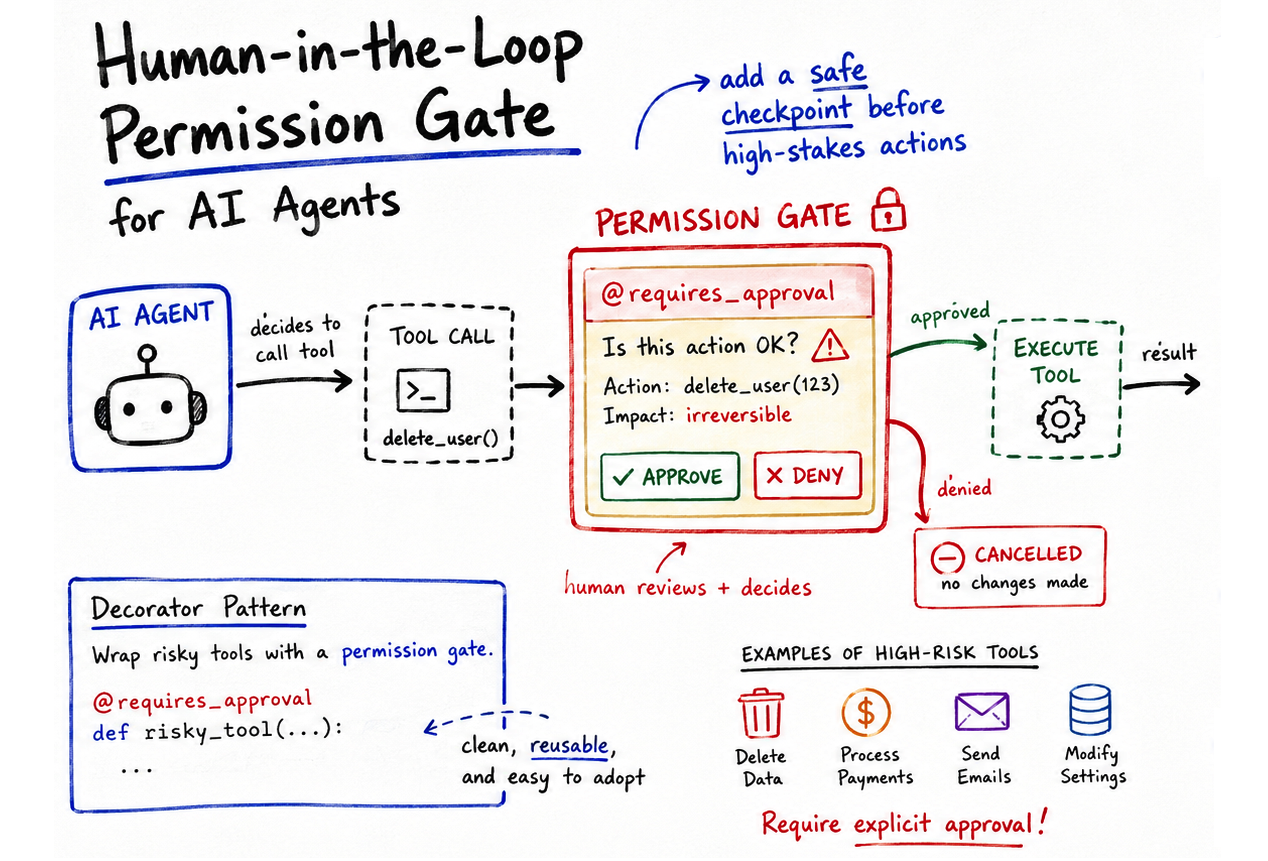

Machine Learning Mastery has published a practical guide for implementing human-in-the-loop permission gates in autonomous AI agents using a lightweight Python decorator pattern. The approach centers on a `@requires_approval` decorator that intercepts tool execution, displays the proposed arguments to a human decision-maker, and requires explicit confirmation before proceeding. The implementation relies exclusively on Python's standard-library `functools` module, eliminating the need for external APIs, third-party services, or specialized infrastructure. This straightforward mechanism addresses a growing pain point in agent deployment: the gap between the autonomy that makes agents useful and the oversight that makes them safe.
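As a rough illustration of the pattern, and not the guide's own code, a gate of this kind might look like the sketch below. Only the `requires_approval` name and the use of `functools` come from the summary above; the `ApprovalDenied` exception, the `ask` parameter (added so the prompt can be swapped out in tests), and the `delete_records` tool are assumptions made here for the example.

```python
import functools


class ApprovalDenied(Exception):
    """Raised when the reviewer declines a tool call (hypothetical name)."""


def requires_approval(func=None, *, ask=input):
    """Gate a tool behind an explicit human "y" before it runs.

    `ask` is an assumption of this sketch: any callable that takes a prompt
    string and returns the reviewer's answer. It defaults to input().
    """
    if func is None:
        # Used as @requires_approval(ask=...): return a decorator.
        return functools.partial(requires_approval, ask=ask)

    @functools.wraps(func)  # keep the tool's name and docstring intact
    def wrapper(*args, **kwargs):
        # Show the reviewer exactly what the agent is about to do.
        print(f"Agent wants to call {func.__name__}")
        print(f"  args:   {args!r}")
        print(f"  kwargs: {kwargs!r}")
        answer = ask("Approve? [y/N] ").strip().lower()
        if answer != "y":
            # Anything other than an explicit "y" counts as a refusal.
            raise ApprovalDenied(f"{func.__name__} was not approved")
        return func(*args, **kwargs)

    return wrapper


@requires_approval
def delete_records(table, where):
    """Hypothetical destructive tool, used only for illustration."""
    return f"deleted rows from {table} where {where}"
```

Calling `delete_records("users", "inactive = 1")` would print the proposed call and block on the prompt. Treating every answer except `y` as a denial is a design choice of this sketch: it makes the gate fail closed if the reviewer mistypes or just presses Enter.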

The timing of this guidance reflects a fundamental shift in how AI systems are being built and deployed. Twelve months ago, the conversation around agents centered on capabilities—what could they do, how quickly could they learn new tools, how many tasks could they automate. The conversation has matured. Organizations experimenting with agents at scale have begun discovering that raw autonomy without guardrails creates liability and operational risk. Incidents involving unintended API calls, erroneous data modifications, or mistakenly sent communications have made the case that agents need human oversight woven into their decision flow, not bolted on afterward. This guide arrives precisely when enterprises are moving from hackathon prototypes to production deployments where oversight transitions from optional to mandatory.

Within the broader AI landscape, this pattern addresses a gap that existing agent frameworks have largely sidestepped. Most widely used agent libraries either assume the operator will manage permissions externally or provide permission models so coarse-grained that they offer false reassurance. A decorator-based approach is significant because it places the check where it belongs, at the point where autonomous action is about to occur, and does so using patterns that Python developers already understand and trust. The simplicity matters strategically: organizations are more likely to adopt oversight mechanisms that integrate cleanly into their existing codebases than mechanisms that require architectural overhauls. This lowers the friction between "we want to be safe" and "we actually implemented safety."

The most immediate beneficiaries are application developers building autonomous agents for internal use and vendor companies embedding agents into products. Developers in regulated industries—financial services, healthcare, supply chain—face explicit compliance requirements around automated decision-making and transaction approval. But the impact extends far beyond compliance-driven adoption. Any team deploying agents that touch real data or perform irreversible actions suddenly has a clean, framework-agnostic mechanism for injecting human oversight. Enterprise buyers evaluating agent platforms will likely begin asking whether tools support decorator-based permission gates or similar patterns, pushing the conversation away from "does this agent work" toward "can we safely control what it does."

The competitive positioning here is subtle but consequential. Platforms promoting "fully autonomous agents" without built-in permission mechanisms are increasingly at odds with how enterprises actually want to deploy them. Frameworks that require complex permission models or specialized admin dashboards create friction and adoption barriers. The elegance of the decorator approach is that it sits between complete autonomy and heavyweight governance: it requires explicit human involvement at decision points but integrates seamlessly into existing Python codebases. This positions simpler, Pythonic solutions as more attractive than feature-heavy platforms that try to solve permission problems through configuration rather than code. For organizations already invested in Python stacks, this pattern may become the path of least resistance for adding oversight.

Looking ahead, the real test is whether this pattern scales gracefully to the complexity of production agent systems. The simplified CLI-based approval shown in the article works for single-agent prototypes but raises questions about asynchronous approval workflows, audit trails, delegation hierarchies, and distributed agent systems where multiple agents might request approval simultaneously. Will the community standardize around decorator-based permission gates, or will this remain a helpful technique that enterprises customize into more elaborate frameworks? More broadly, this guidance signals growing maturity in the agent conversation—away from "build it as fast as possible" and toward "build it in ways you can actually defend." That shift, driven by practitioners learning hard lessons in production, may be the most important signal here.
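To make two of those open questions concrete, the sketch below shows one way the same idea could be stretched to asynchronous approval and an audit trail. It is an assumption-laden illustration rather than anything taken from the original guide: `requires_approval_async`, `allow_small_refunds`, `issue_refund`, and the shape of the audit records are all hypothetical, and the approver here is a simple stand-in for whatever channel a human would actually answer on.

```python
import asyncio
import functools
from datetime import datetime, timezone


def requires_approval_async(approver, audit_log):
    """Async variant of the gate (all names in this sketch are hypothetical).

    approver:  async callable (tool_name, args, kwargs) -> bool
    audit_log: list that receives one dict per decision
    """
    def decorator(func):
        @functools.wraps(func)
        async def wrapper(*args, **kwargs):
            # Awaiting lets other agents keep working while this one waits.
            approved = await approver(func.__name__, args, kwargs)
            # Record every decision, approved or not, before acting on it.
            audit_log.append({
                "tool": func.__name__,
                "args": repr(args),
                "kwargs": repr(kwargs),
                "approved": approved,
                "decided_at": datetime.now(timezone.utc).isoformat(),
            })
            if not approved:
                raise PermissionError(f"{func.__name__} was not approved")
            return await func(*args, **kwargs)
        return wrapper
    return decorator


async def allow_small_refunds(tool, args, kwargs):
    """Stand-in for a human decision; a real approver might await a reply
    in a chat channel or ticketing system instead."""
    await asyncio.sleep(0)  # simulate waiting on the reviewer
    return kwargs.get("amount", 0) <= 100


audit_log = []


@requires_approval_async(allow_small_refunds, audit_log)
async def issue_refund(customer_id, *, amount):
    """Hypothetical tool used only for illustration."""
    return f"refunded {amount} to {customer_id}"


async def main():
    # Two requests arrive at once; each is gated and logged independently.
    return await asyncio.gather(
        issue_refund("c-1", amount=25),
        issue_refund("c-2", amount=5000),
        return_exceptions=True,
    )
```

Running `asyncio.run(main())` returns the first refund's result alongside a `PermissionError` for the second, and leaves one record per request in `audit_log`. Delegation hierarchies and approval across distributed agents are deliberately left out; they would need shared storage and identity handling that a decorator alone does not provide.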

This article was originally published on Machine Learning Mastery. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to Machine Learning Mastery. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.