Your hub for ASI Governance news and research, curated daily from 50 top AI sources including OpenAI, Anthropic, Google DeepMind, and more. Every article is reviewed and enriched with editorial analysis by the DeepTrendLab team.

ASI Governance

2 articles

🛡️ Safety

AI Alignment Forum

1 min read

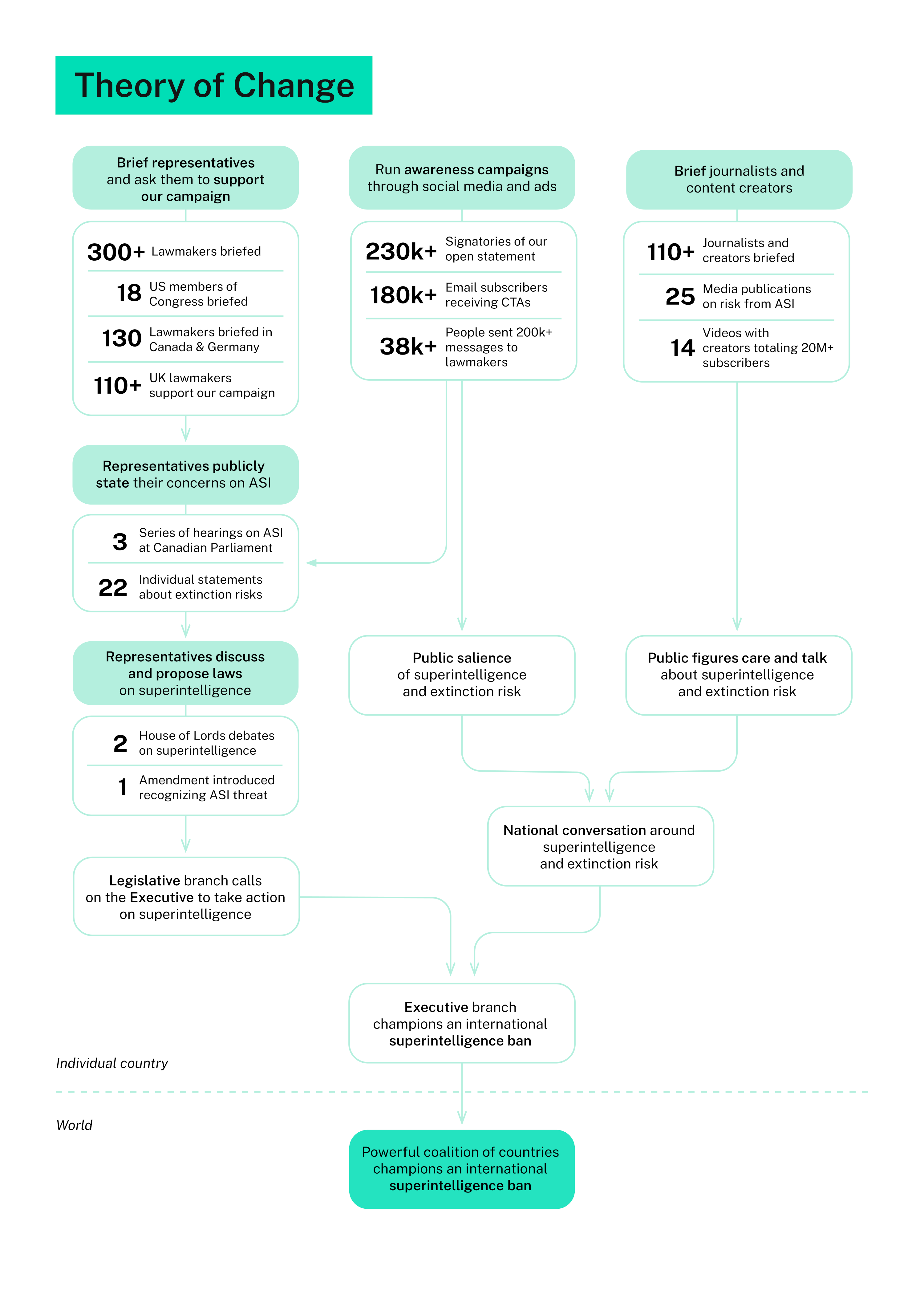

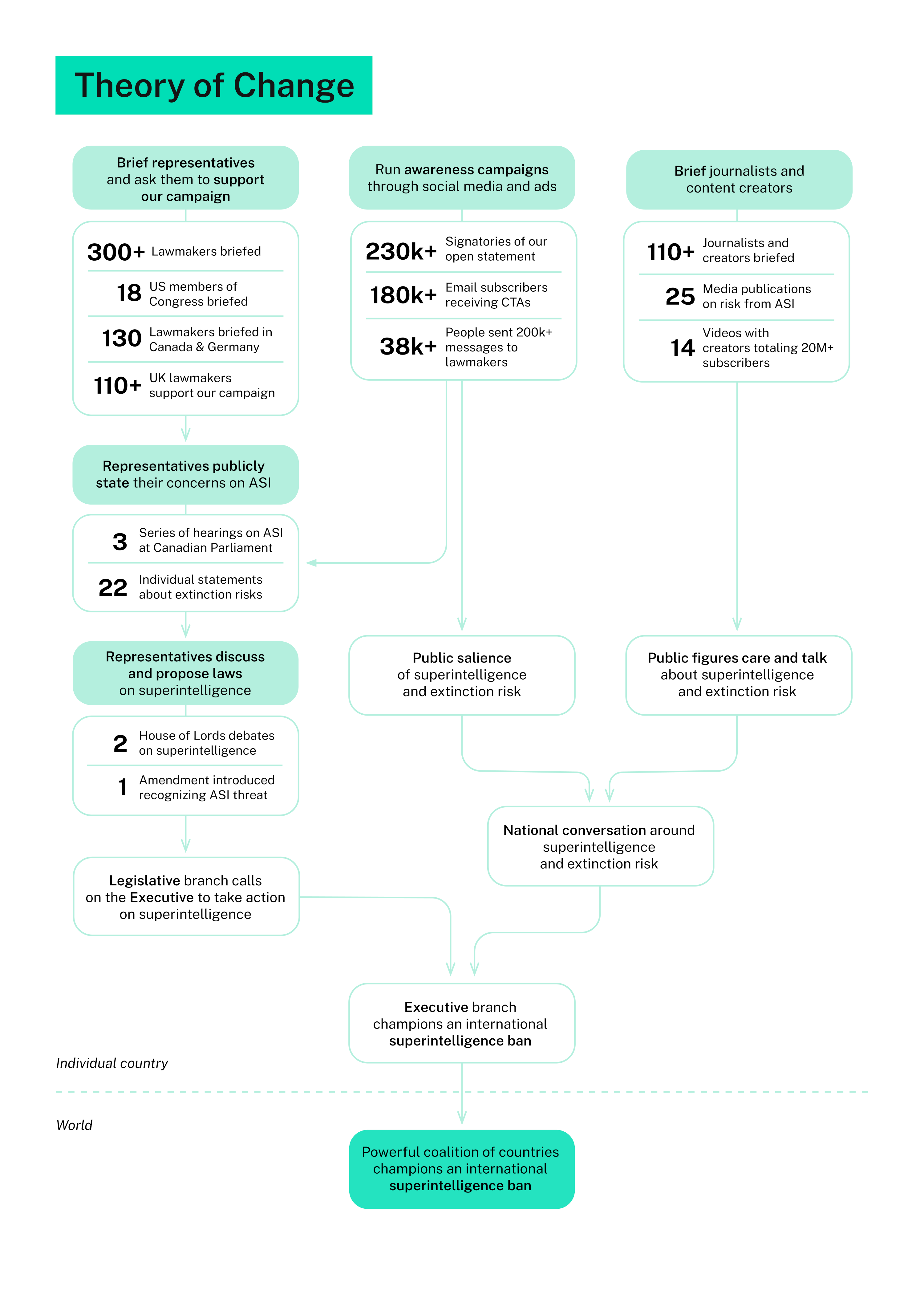

ControlAI's mission is to avert the extinction risks posed by superintelligent AI. We believe that in order to do this, we must secure an international prohibition on its development. We're working to make this happen through what we believe is the most natural and promising approach: helping decision-makers in governments and the public understand the risks and take action. We…

🛡️ Safety

AI Alignment Forum

1 min read

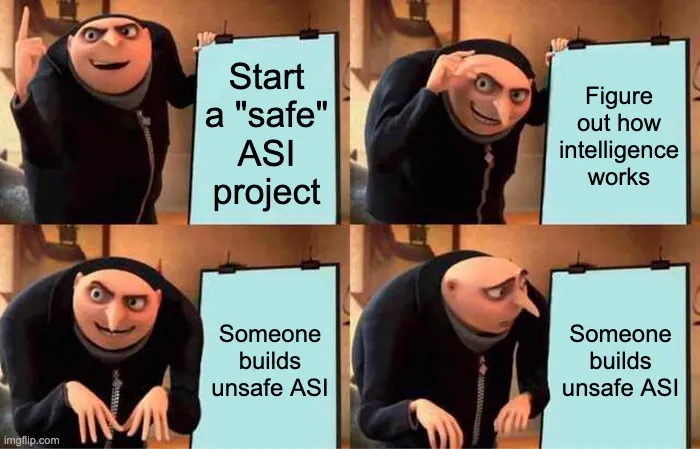

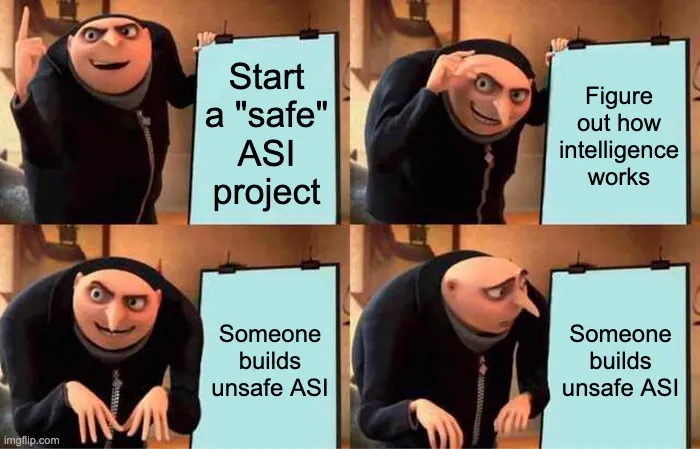

Sometimes people suggest that we should simply build "safe" artificial superintelligence (ASI), rather than the presumably "unsafe" kind. [1] There are various flavors of "safe" that people suggest. Sometimes…