ControlAI has made explicit what has long been implicit in AI safety circles: preventing catastrophic outcomes from superintelligent AI systems requires not just research, but a coordinated international campaign with substantial funding and institutional backing. The organization's calculation that approximately $50 million annually could meaningfully shift the probability of securing a global prohibition on advanced AI development within the next few years represents one of the most concrete cost estimates yet attached to existential risk mitigation. This is not a request for incremental safety improvements or better alignment techniques—it is a claim that the extinction risk from advanced AI is significant enough to warrant a dedicated, government-scale policy intervention funded at levels comparable to major public health initiatives.

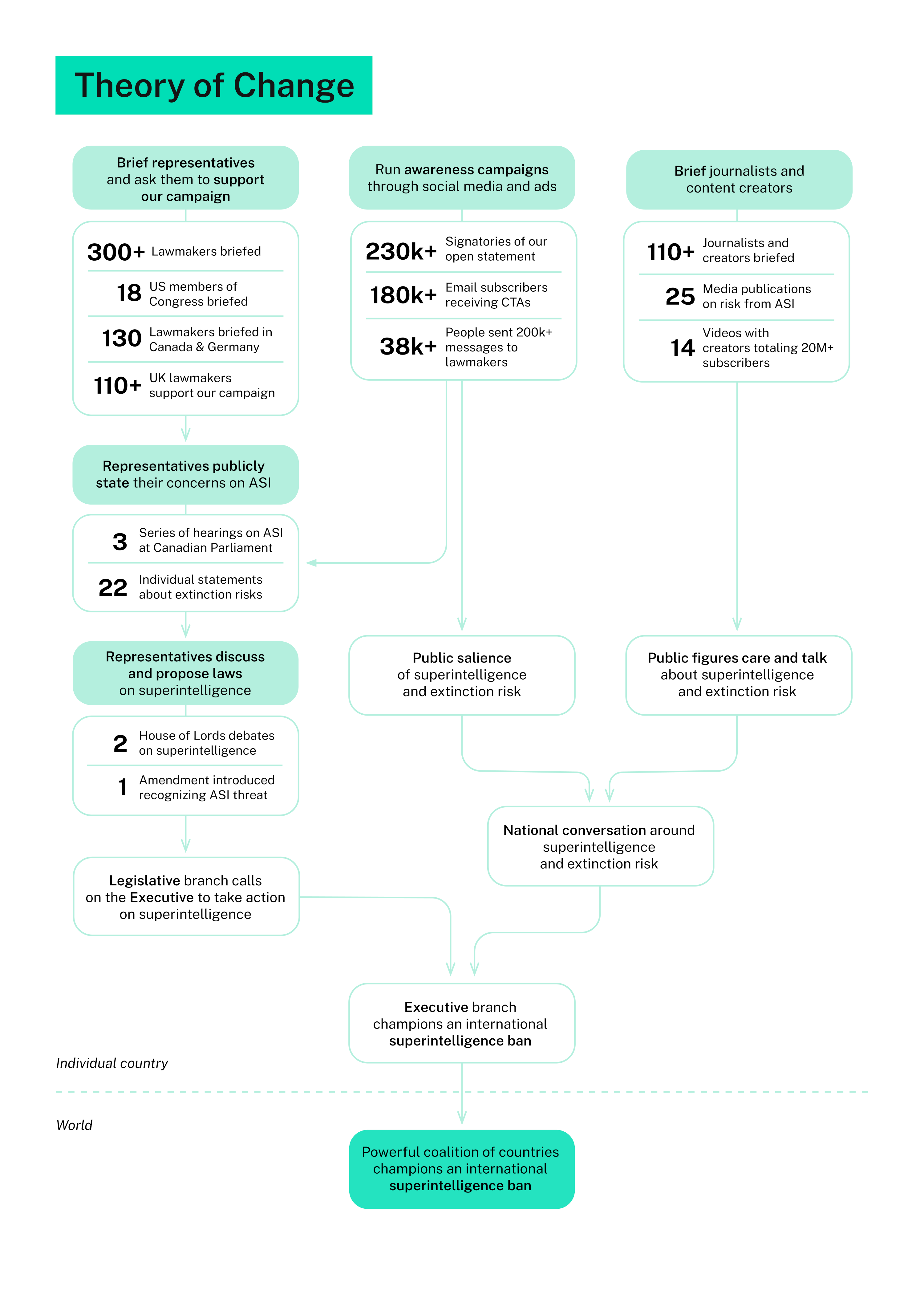

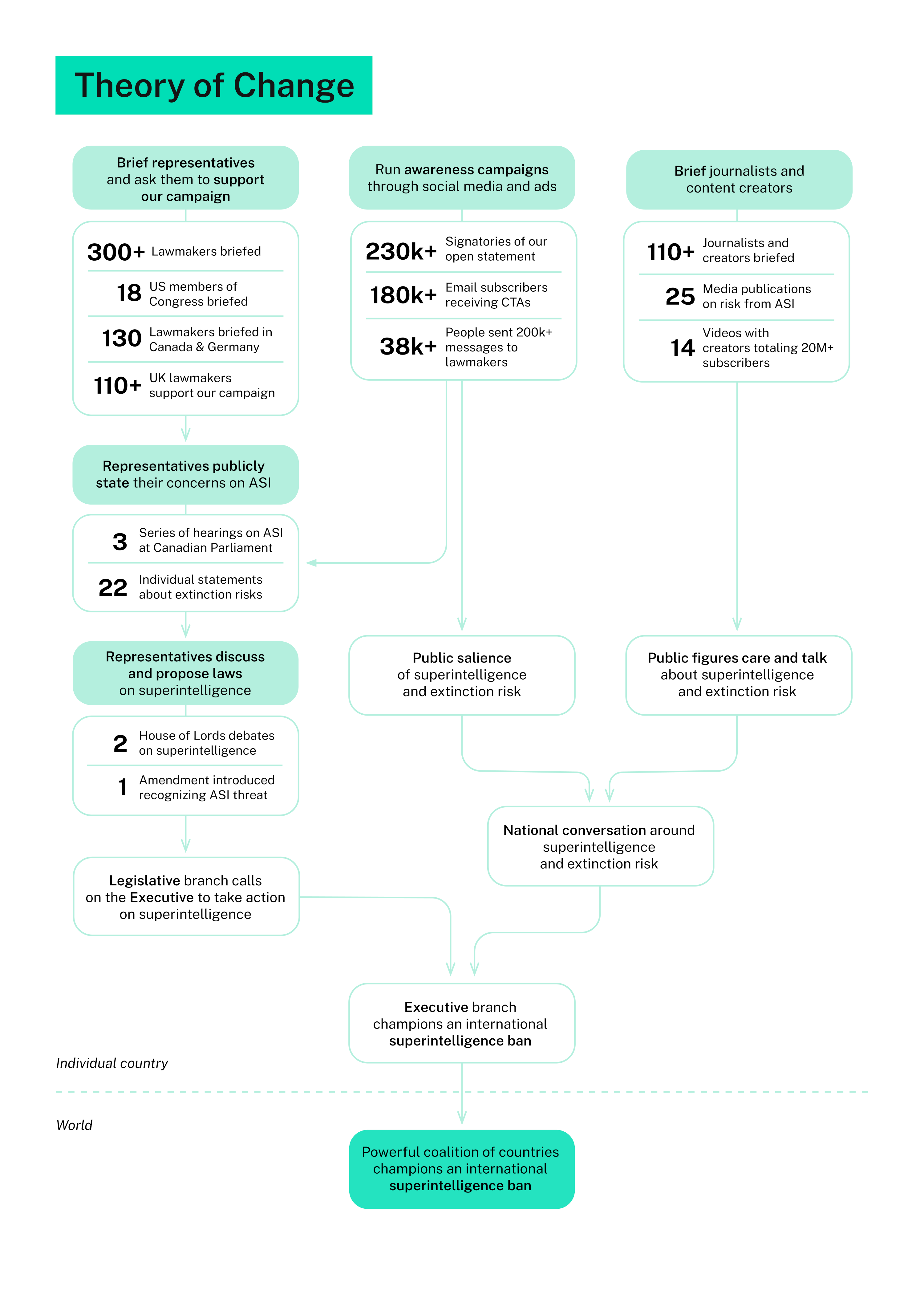

The timing of this announcement reflects a critical inflection point. For years, AI safety concerns existed primarily within academic papers and policy-adjacent think tanks. But as large language models have demonstrated unexpected emergent capabilities, as major technology companies have signaled that they take AI safety work seriously, and as governments have begun drafting the first substantive AI regulation frameworks, the conversation has shifted. ControlAI's pitch arrives in an environment where decision-makers can actually be moved, where the question is no longer whether the risk from artificial superintelligence (ASI) is worth discussing, but how to structure governance to address it. The organization's theory of change hinges on translating technical concerns into political action, which requires precisely the kind of sustained public engagement and policy expertise that a $50 million budget would enable at scale.

What makes this announcement significant is not the mere existence of existential risk concerns, but the explicitness about what it costs to address them at a systemic level. If ControlAI's estimate is credible, it suggests that preventing superintelligent AI development is far cheaper than the alternative of developing it safely—a reframing with profound implications for how institutions allocate resources. The proposal essentially argues that international coordination around AI prohibition could be achieved more economically than the continued arms-race dynamic that currently incentivizes rapid capability development. This creates a direct challenge to the prevailing venture-backed model of AI development, which operates on the assumption that capabilities competition is not just inevitable but desirable.

The immediate effects ripple across multiple constituencies. Governments face pressure to take ASI risk seriously enough to fund advocacy and policy work, while AI researchers confront a growing faction arguing that their work itself may warrant international restriction. Venture capital firms and major tech companies must contend with organized efforts to reshape the regulatory environment in which they operate. The broader research community, particularly those working on AI alignment and safety, sees both opportunity and risk: opportunity because funding for safety work could increase, and risk because a strategy of prohibition rather than control might make some safety research seem redundant or counterproductive.

This announcement exposes a fundamental tension in how the AI industry currently handles existential risk. The dominant narrative treats safety as something to be engineered into systems—better alignment, more testing, stronger safeguards. But ControlAI proposes that the real risk lies in the existence of superintelligent systems themselves, regardless of safeguards. This is not merely a funding ask; it is a direct challenge to the assumption that capabilities development and safety development can proceed in parallel. The question becomes not whether the prohibitionist strategy is right, but whether it can actually achieve traction against the powerful economic and nationalist incentives driving AI development.

The critical question now is implementation and credibility. Can a $50 million annual budget actually move international policy in a meaningful timeframe, particularly when major AI-developing nations view AI capabilities as central to strategic advantage? ControlAI's success will likely depend on whether it can convince both technologists and policymakers that ASI prohibition is technically feasible and that the alternative, continued competitive development, truly poses an extinction-level threat. The organization's trajectory will reveal whether existential risk discourse can generate sustained political action or remains confined to academic and policy margins. Watch for whether and how quickly its funding materializes, and whether its advocacy begins shifting government positions on the governance of AI development.

This article was originally published on AI Alignment Forum. Read the full piece at the source.

DeepTrendLab curates AI news from 50+ sources. All original content and rights belong to AI Alignment Forum. DeepTrendLab's analysis is independently written and does not represent the views of the original publisher.