What’s the Best Way to Brainwash an LLM?

I spent a weekend trying to convince a language model it was C-3PO. Here's what actually worked. The post What’s the Best Way to Brainwash an LLM? appeared first on Towards Data Science.

Your hub for Large Language Models news and research — curated daily from 50 top AI sources including OpenAI, Anthropic, Google DeepMind, and more. Every article is reviewed and enriched with editorial analysis by the DeepTrendLab team.

When semantic search isn't enough for RAG. The post Hybrid Search and Re-Ranking in Production RAG appeared first on Towards Data Science.

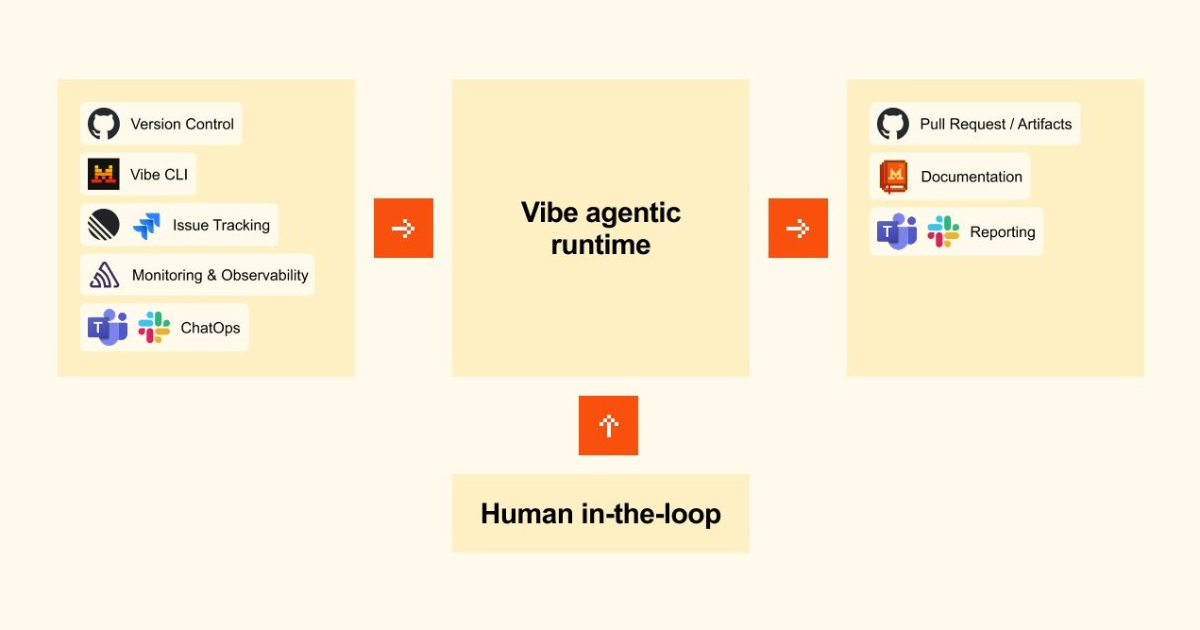

Coder Agents is a model-agnostic platform designed to let organizations run AI coding agents on their own infrastructure, rather than relying on cloud-based services. This allows teams to maintain full…

From tokenisation to evaluation: how modern language models actually work in practice. The post The Must-Know Topics for an LLM Engineer appeared first on Towards Data Science.

Three weeks into testing, a learner told me my AI tutor gave her the wrong answer. Not obviously wrong — just outdated enough to mislead. That was the moment I…

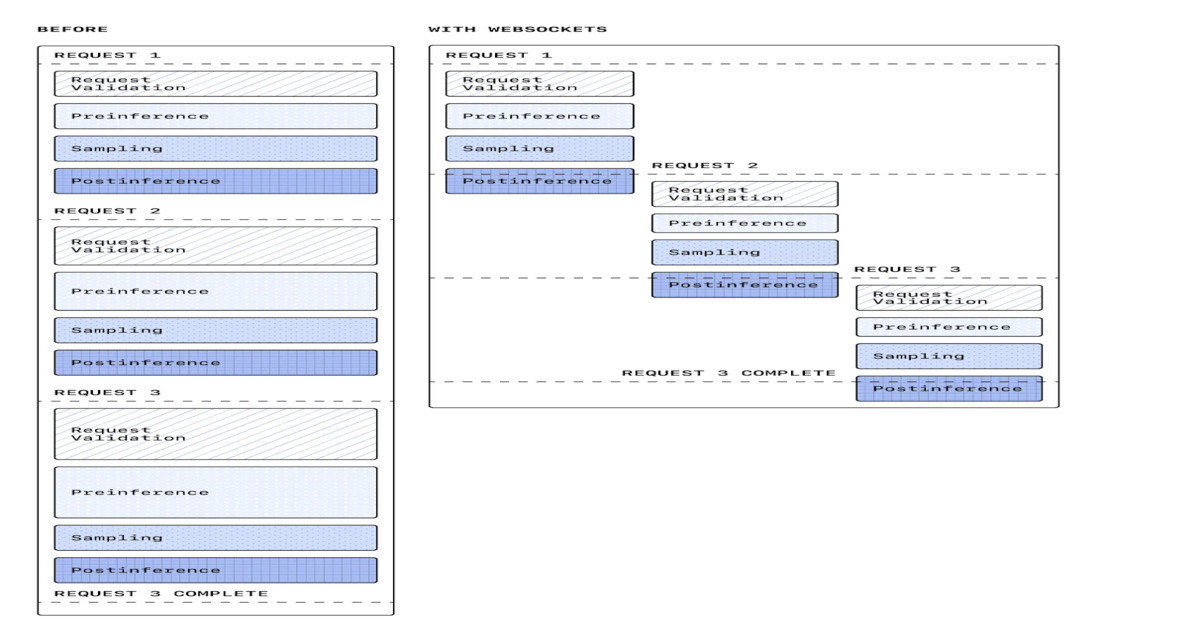

OpenAI introduces a WebSocket-based execution mode for its Responses API to improve agentic workflow performance in coding agents and real-time AI systems. The update reduces latency by up to 40…

A physicist's approach to building production-grade agents. The post Why I Don’t Trust LLMs to Decide When the Weather Changed appeared first on Towards Data Science.

Google has unveiled a new generation of Tensor Processing Units (TPUs), featuring two specialized chips designed to accelerate model training and agent workflows, which require continuous, multi-step reasoning, and action…

Your RAG system isn’t failing at retrieval — it’s failing at reasoning. This article shows how I built a lightweight self-healing layer that detects and corrects hallucinations before they reach…

Mistral has released Mistral Medium 3.5, a 128-billion parameter model designed to handle instruction following, reasoning, and coding within a single system, and introduced new cloud-based agent capabilities in its…

Why reasoning models dramatically increase token usage, latency, and infrastructure costs in production systems The post Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill appeared first on…

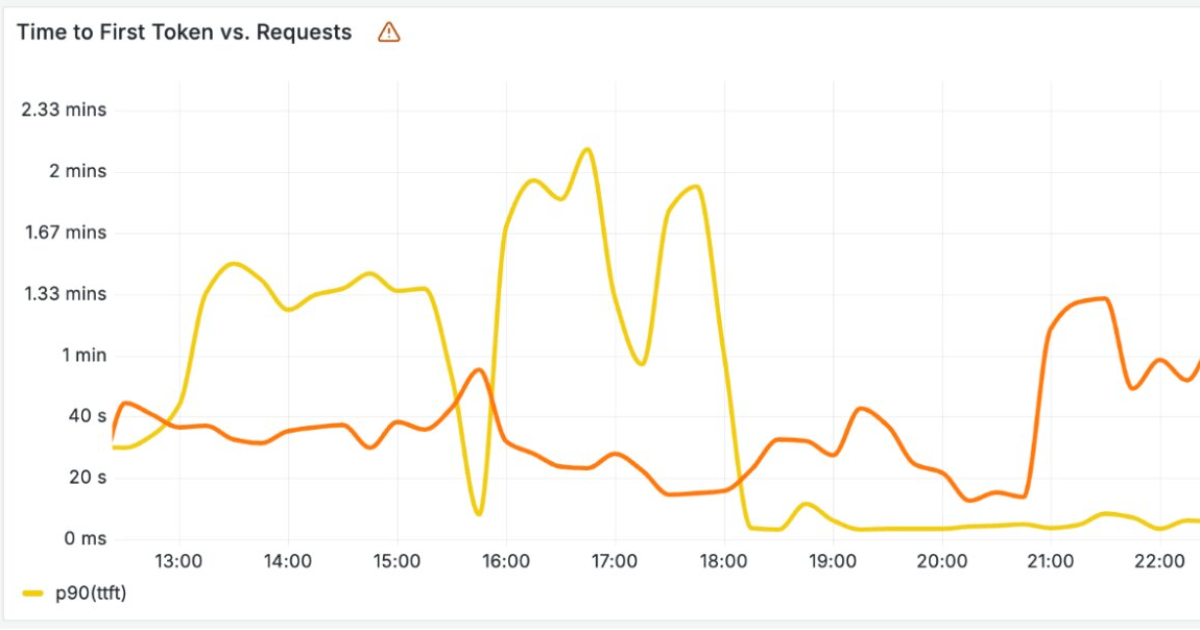

Cloudflare has recently announced new infrastructure designed to run large AI language models across its global network. As these models rely on costly hardware and must handle large volumes of…

DeepSeek says both models are more efficient and performant than DeepSeek V3.2 due to architectural improvements, and have almost "closed the gap" with current leading models, both open and closed,…

The latest set of open-source models from DeepSeek is here. While the industry anticipated the dominance of “closed” iterations like GPT-5.5, the arrival of DeepSeek-V4 has ticked the dominance in…

OpenAI's latest model delivers powerful results but sometimes ignores simple directions, creating a tension between intelligence and control.

These are seven unconventional uses of LLMs that go far beyond the usual chat interface and conversations.

Last Updated on April 23, 2026 by Editorial Team Author(s): DrSwarnenduAI Originally published on Towards AI. GPT-4 Has 1.8 Trillion Parameters. It Uses 2% of Them Per Token. DeepSeek-R1: 671…

When ChatGPT launched as an experimental prototype in late 2022, OpenAI’s chatbot became an everyday everything app for hundreds of millions of people. LLMs like ChatGPT were the new future:…

The move is part of VW’s broader automotive AI strategy.

Large language models (LLMs) have improved so quickly that the benchmarks themselves have evolved, adding more complex problems in an effort to challenge the latest models. Yet LLMs haven’t improved…

The rise of generative AI has spurred demand for AI workstations that can run or train models on local hardware. Yet modern PCs have proven inadequate for this task.…

Welcome to Import AI, a newsletter about AI research. Import AI runs on arXiv and feedback from readers. If you’d like to support this, please subscribe. A somewhat shorter issue…