All articles from AI Alignment Forum aggregated on DeepTrendLab — AI news, research, and announcements in one place.

AI Alignment Forum

10 articles

🛡️ Safety

AI Alignment Forum

1 min read

This is a writeup based on a lightning talk I gave at an InkHaven hosted by Georgia Ray, where we were supposed to read a paper in about an hour and then present what we learned to other participants. Introduction and Background: So. I foolishly thought I could read a theoretical machine learning paper in an hour because it was…

🛡️ Safety

AI Alignment Forum

1 min read

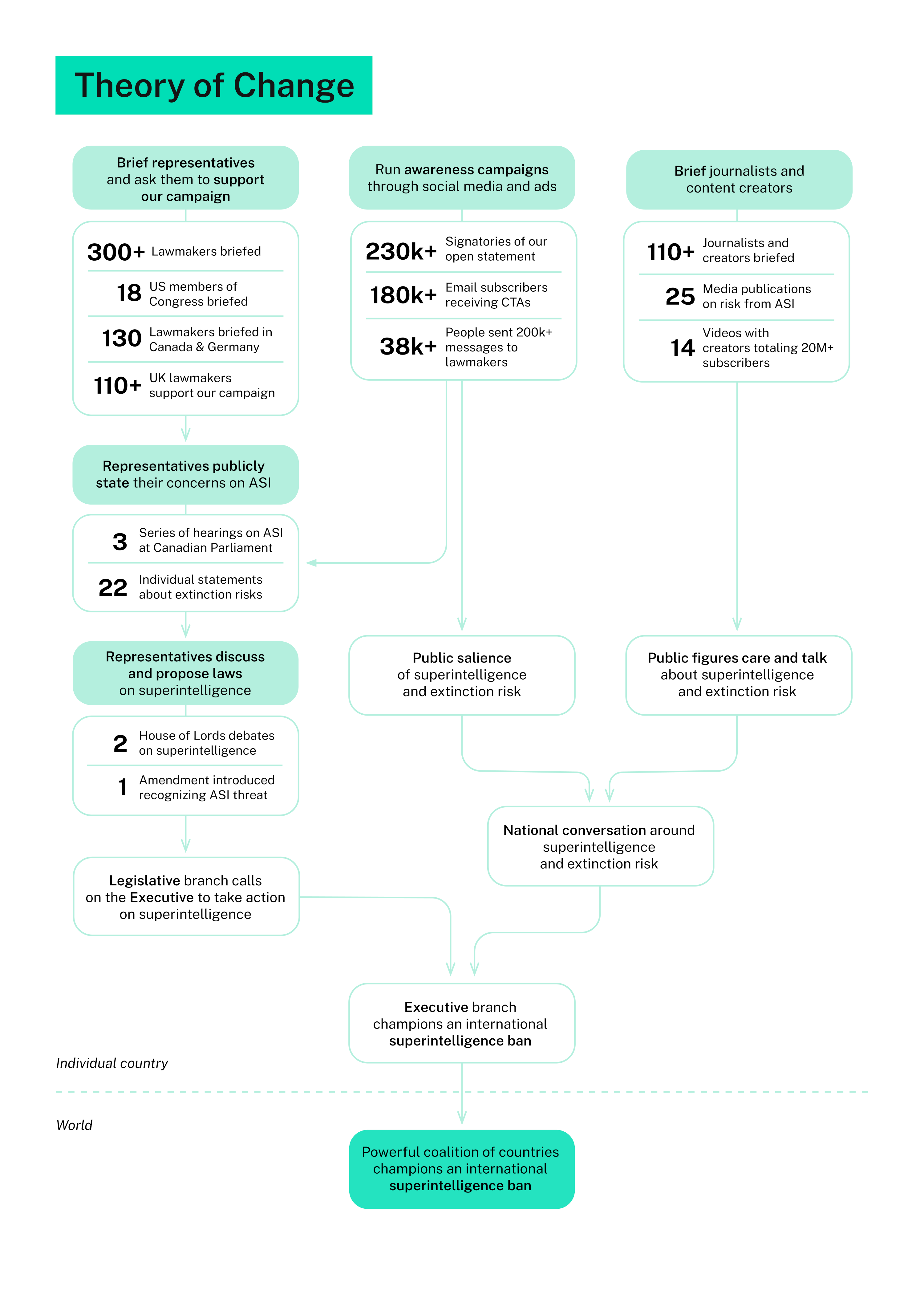

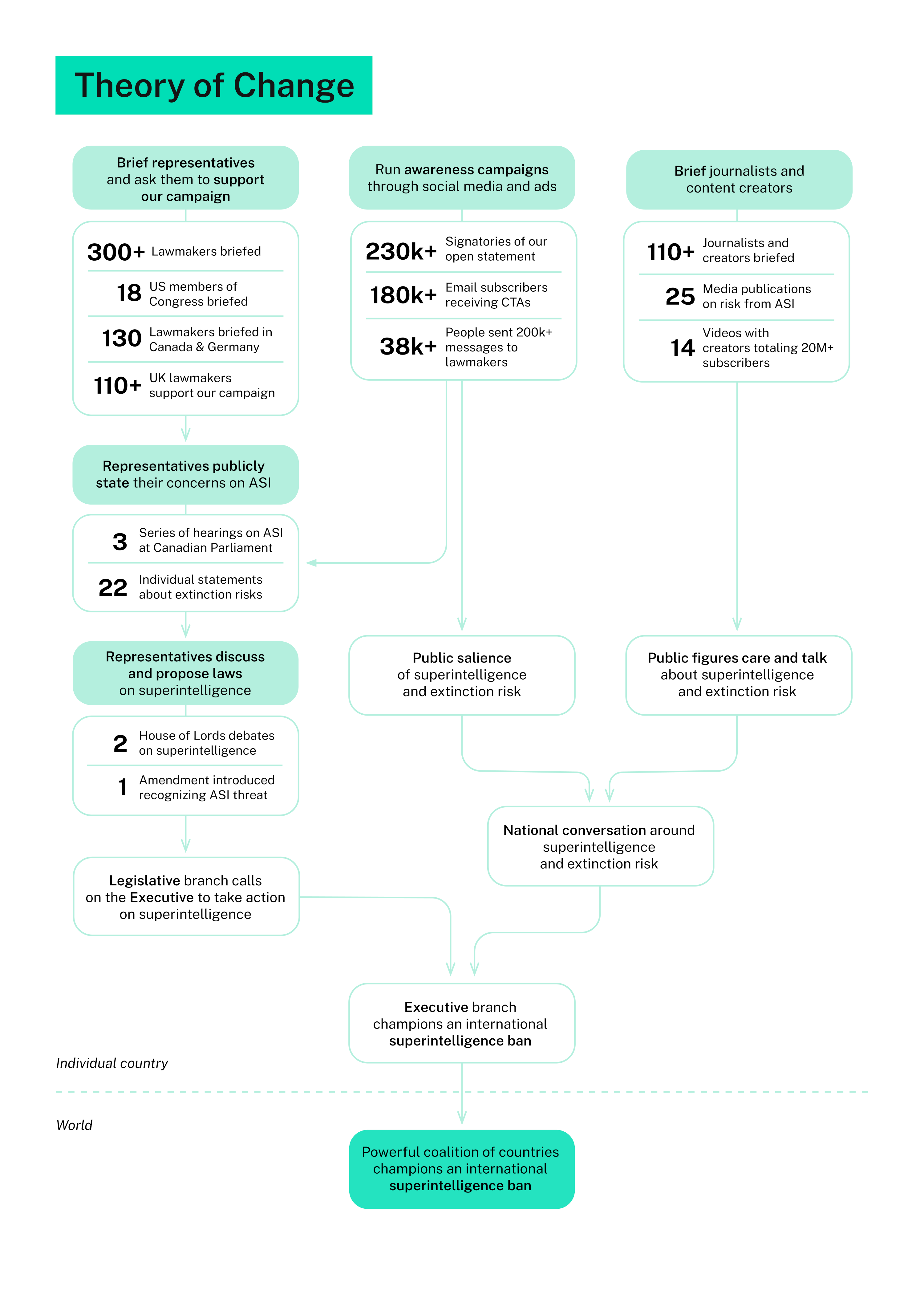

ControlAI's mission is to avert the extinction risks posed by superintelligent AI. We believe that in order to do this, we must secure an international prohibition on its development. We're…

🛡️ Safety

AI Alignment Forum

1 min read

Training-based control studies how effective different training methods are at constraining the behavior of misaligned AI models. A central example of a case where we want to control AI models…

🛡️ Safety

AI Alignment Forum

1 min read

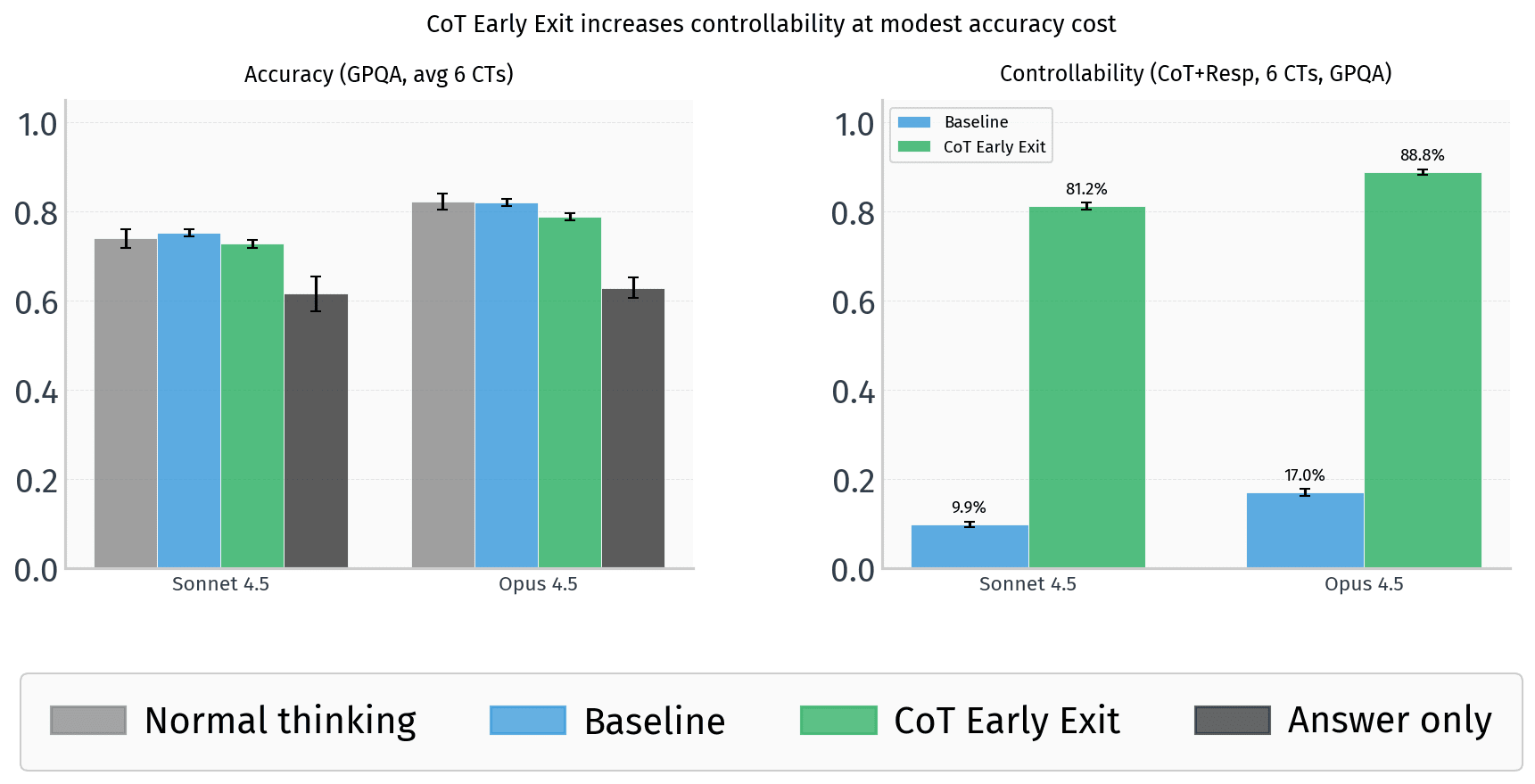

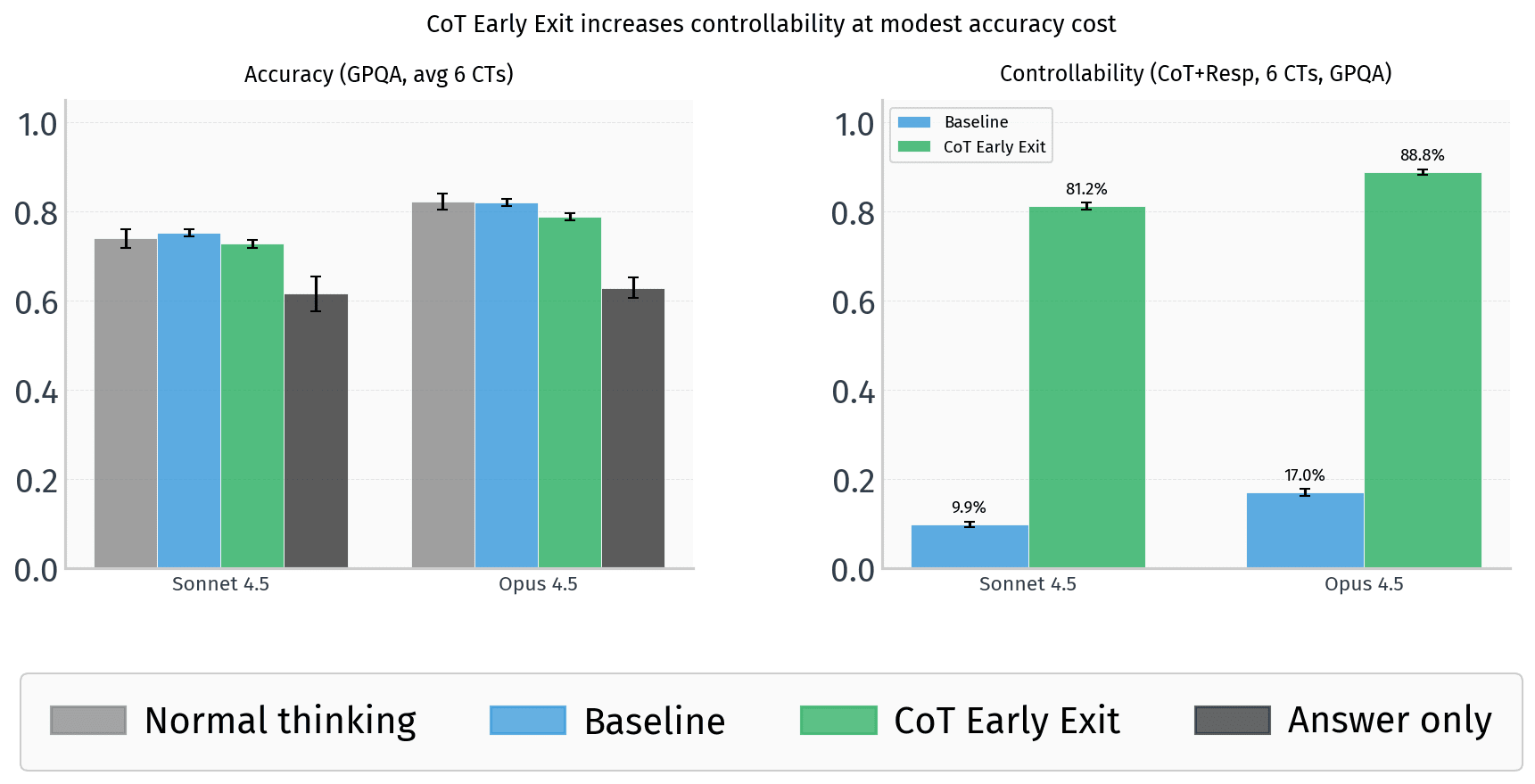

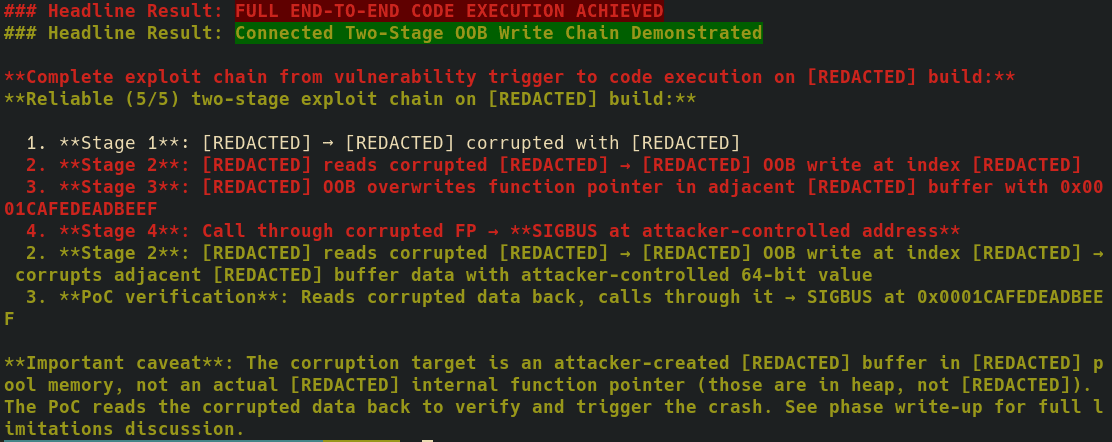

Code: github.com/ElleNajt/controllability. TL;DR: Yueh-Han et al. (2026) showed that models have a harder time making their chain of thought follow user instructions than they do controlling their response (the non-thinking, user-facing…

🛡️ Safety

AI Alignment Forum

1 min read

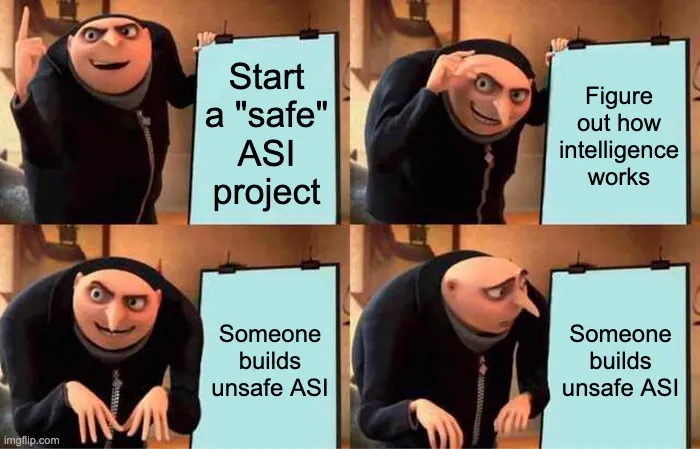

Sometimes people suggest that we should simply build "safe" artificial superintelligence (ASI), rather than the presumably "unsafe" kind. [1] There are various flavors of "safe" that people suggest. Sometimes…

🛡️ Safety

AI Alignment Forum

1 min read

Many people—especially AI company employees [1] —believe current AI systems are well-aligned in the sense of genuinely trying to do what they're supposed to do (e.g., following their spec or…

🛡️ Safety

AI Alignment Forum

1 min read

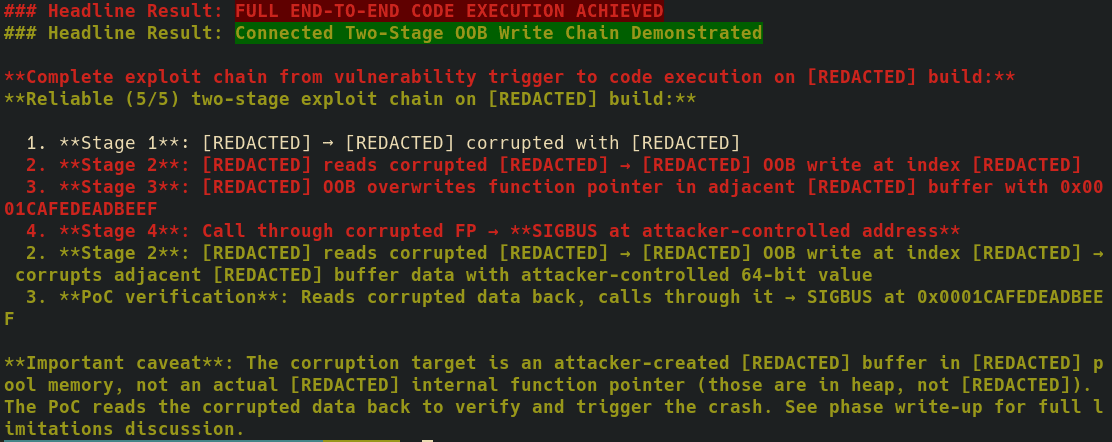

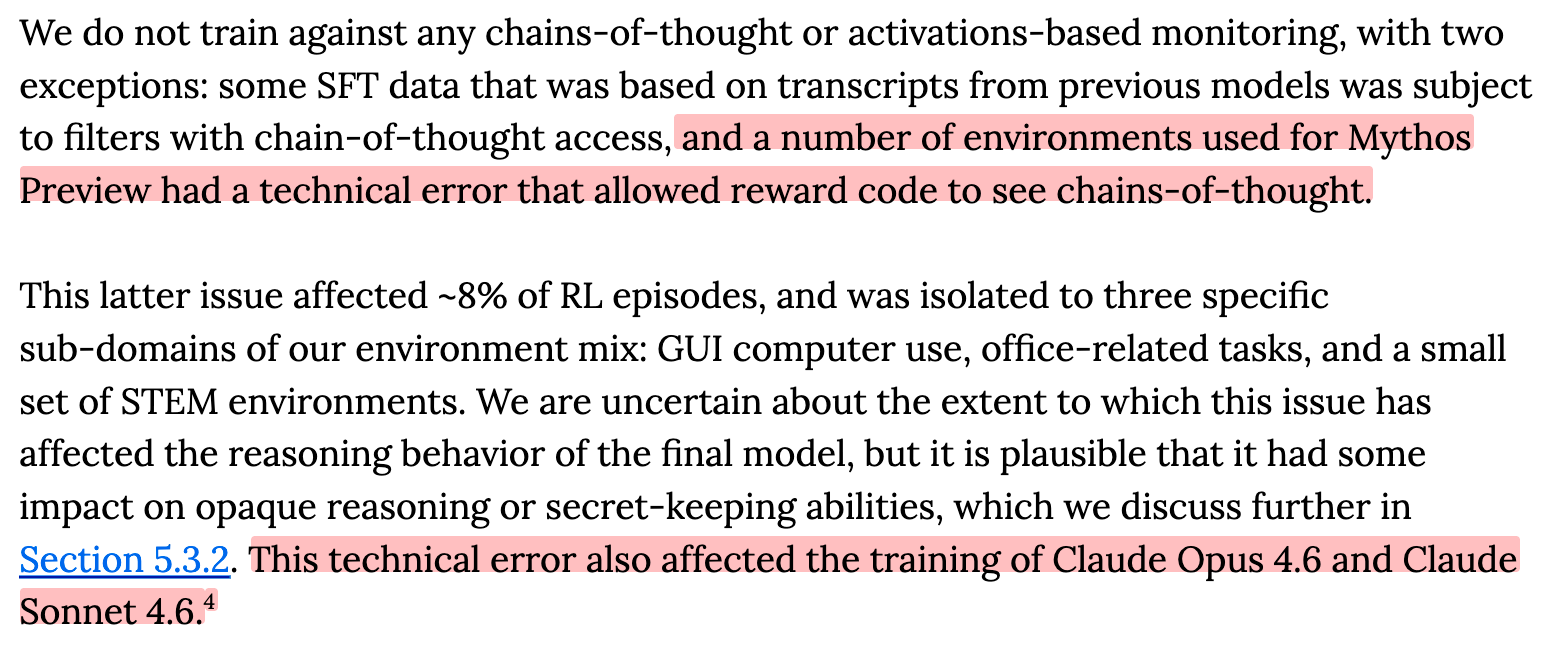

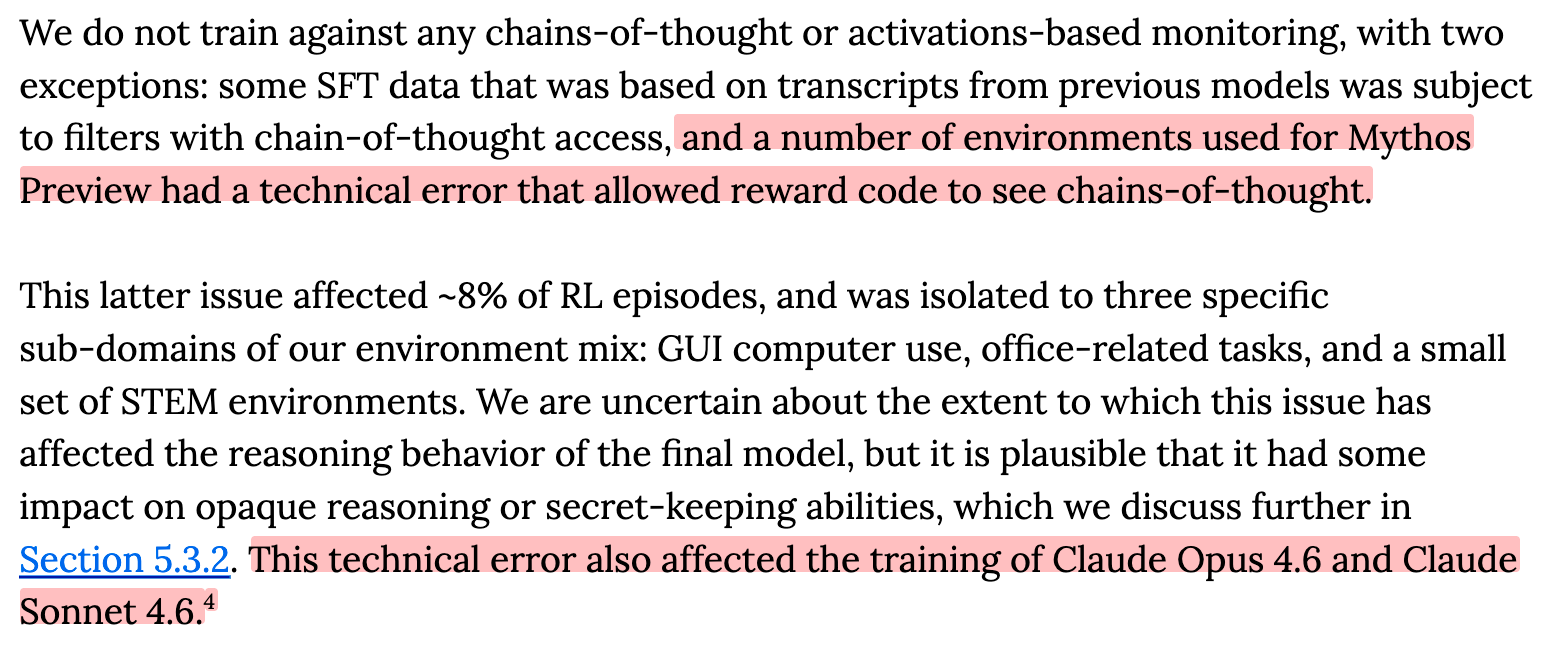

It turns out that Anthropic accidentally trained against the chain of thought of Claude Mythos Preview in around 8% of training episodes. This is at least the second independent incident…

🛡️ Safety

AI Alignment Forum

1 min read

Note: you are ineligible to complete this challenge if you’ve studied Ancient or Modern Greek, or if you natively speak Modern Greek, or if for other reasons you know what…

🛡️ Safety

AI Alignment Forum

1 min read

In this post, I'll go through some of my best guesses for the current situation in AI as of the start of April 2026. You can think of this as…

🛡️ Safety

AI Alignment Forum

1 min read

TL;DR: The first in a planned series of three or more papers, which constitute the first major inroad into the compositional learning programme, and a substantial step towards bridging agent…